The earliest adopter of AI wasn’t your security team. It wasn’t even your vendor. It was the adversary.

"Adversarial poetry" is an intriguing phrase making the rounds in security research circles lately. If you’re not familiar, here’s the short version. Researchers in Italy found that frontier AI models could be tricked into ignoring their safety guardrails when malicious prompts were disguised as flowery poetic metaphors and verse.

It worked 62% of the time across 25 frontier language models. Let that sink in for a minute.

It’s the kind of finding that grabs your attention. It certainly grabbed ours. And when we shared it on LinkedIn, it sparked a lot of discussion within our community.

But it’s also just one data point in a much bigger story.

What we’re seeing in the wild isn’t just attackers experimenting with AI models anymore. It’s attackers building their workflows around AI, because that’s where people are searching, troubleshooting, and working. These workflows move faster, blend into everyday behavior, and make malicious actions feel normal to the victims.

That shift is something we’re already observing in practice. As John Hammond, Senior Principal Security Researcher at Huntress, noted in a recent _declassified session:

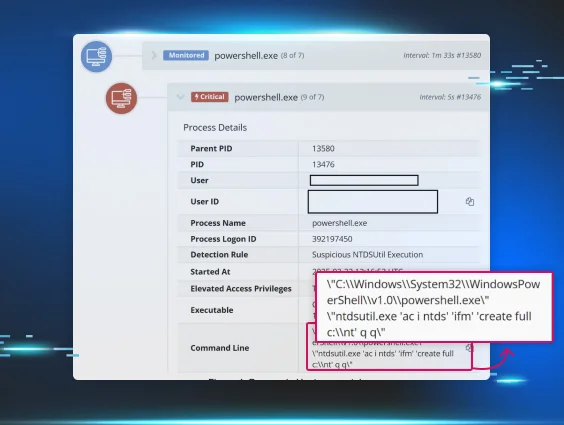

“I think threat actors and organized cybercriminals were kind of the earliest adopters and maybe first movers to leverage artificial intelligence… We now have threats that are moving sort of at machine speed. They're leveraging AI to pull off these attacks.”

At Huntress, we’ve seen this pattern play out across multiple recent incidents. Different techniques and different entry points, but the same underlying shift.

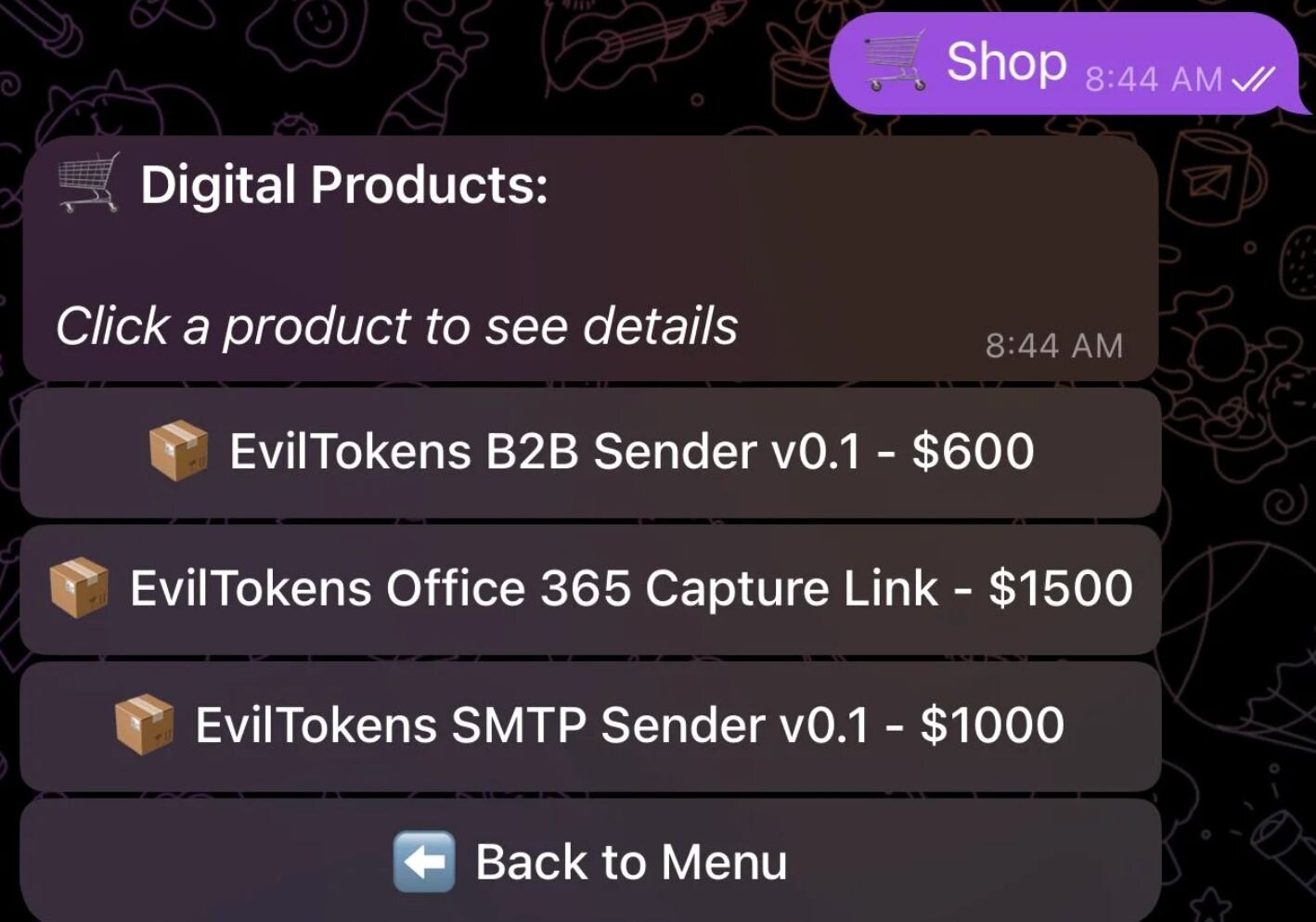

Old tricks, new targets: Fake AI tools in search

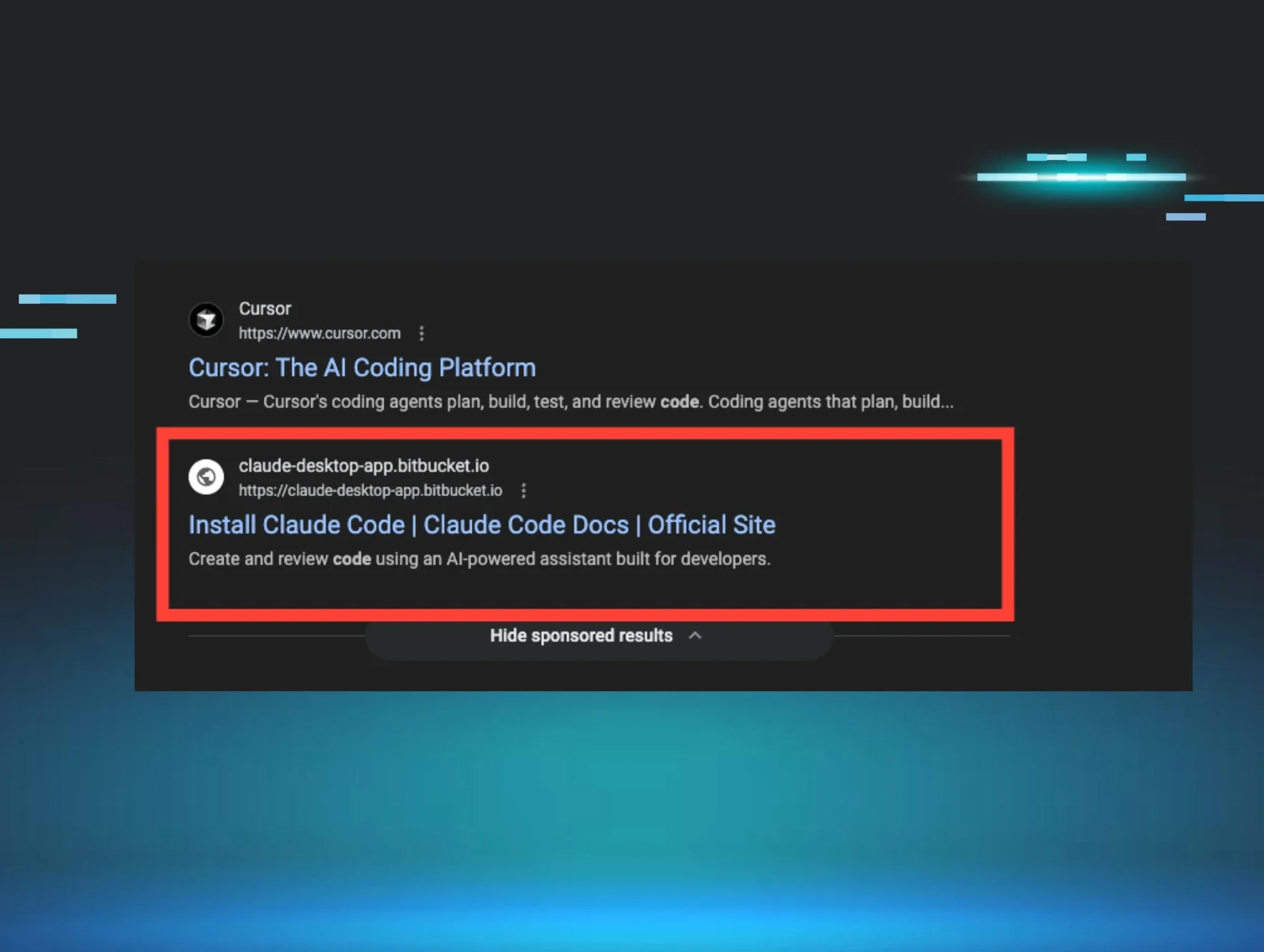

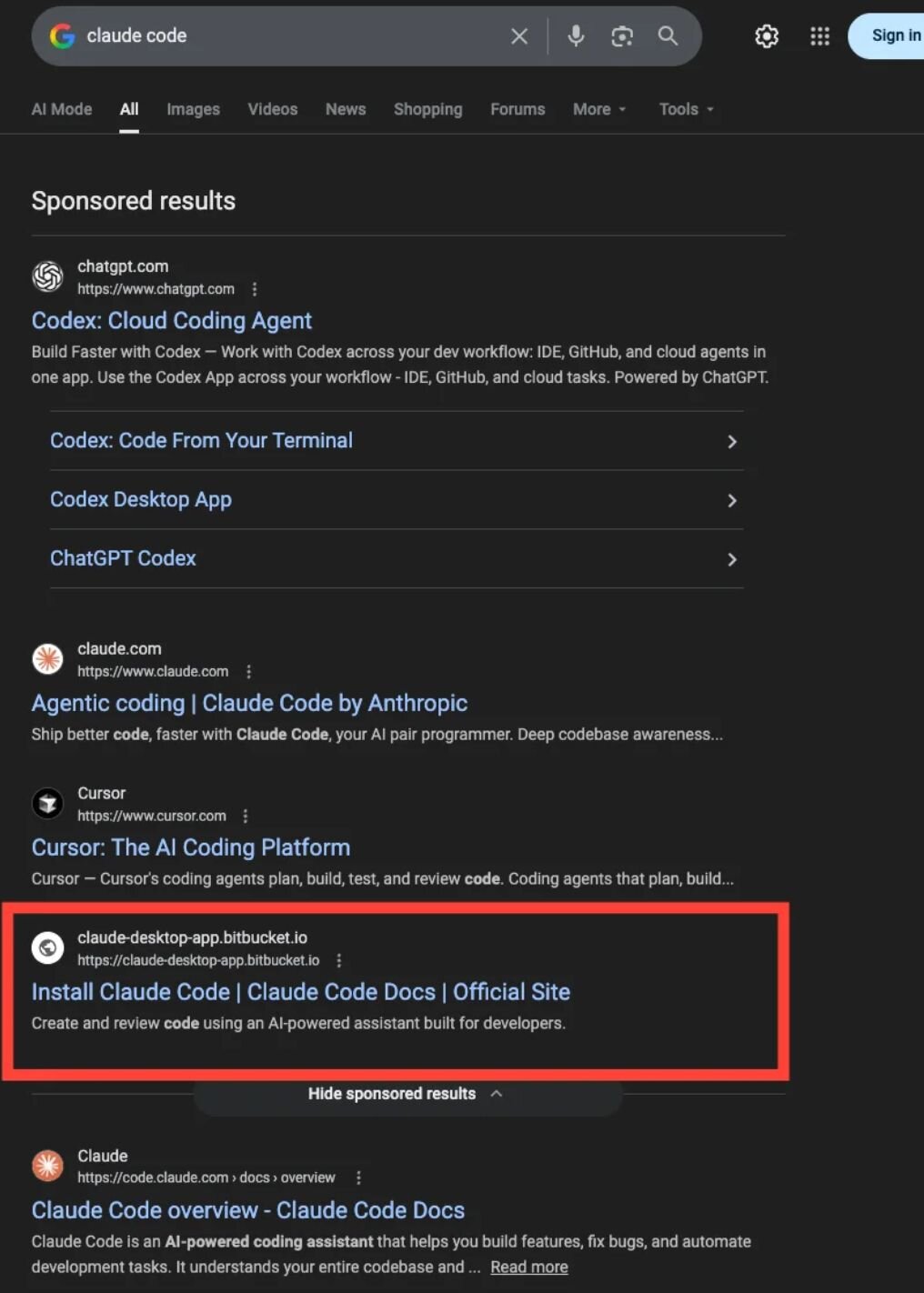

We know that attackers have always followed user behavior and adapted to exploit it. Right now, users are searching for and downloading AI tools, like Claude Code, ChatGPT integrations, and developer assistants, often multiple times a day. Things that make their workflows faster and more efficient.

So attackers moved there, too. Instead of inventing something new, they’re scaling what already works: SEO poisoning, malvertising, and convincing download pages placed exactly where users expect to find legitimate tools.

In one case, a Huntress engineer searched for “Claude Code” and clicked a top sponsored result that appeared completely legitimate. Same naming, same positioning, nothing off at first glance.

Unfortunately, it wasn’t legit. The download delivered malware designed to quietly steal credentials. The technical details were what we’d expect to see: obfuscation, staged payloads, targeting sensitive data. The delivery was something new, though.

As our Chief Security Officer (CSO), Eric Stride, put it:

"Most people don't expect the top Google result to be malicious, but occasionally, it is."

The attacker didn’t need to trick a random user. They only needed to show up at the exact moment someone, mid-workflow, went looking for an AI tool they already trusted.

The engineer shut things down quickly, and thanks to a fast Security Operations Center (SOC) response, it never turned into a full incident. But the story is a clear example of attackers inserting themselves directly into AI adoption.
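One way to reduce exposure to lookalike download pages is to check a link's host against a list of domains you have already verified before downloading anything. The snippet below is a minimal sketch of that idea, not a description of how Huntress detected this case. The hosts in `TRUSTED_HOSTS` and the function name `is_trusted_download` are hypothetical placeholders.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist: replace with download hosts your organization has verified.
TRUSTED_HOSTS = {"downloads.example.com", "tools.example.org"}


def is_trusted_download(url: str, trusted_hosts=TRUSTED_HOSTS) -> bool:
    """Return True only for HTTPS URLs whose host is a trusted host or a subdomain of one."""
    parts = urlsplit(url)
    if parts.scheme != "https":
        return False
    host = (parts.hostname or "").lower().rstrip(".")
    # Compare whole labels so "downloads.example.com.evil.net" does not pass.
    return any(host == t or host.endswith("." + t) for t in trusted_hosts)
```

A check like this only helps if the allowlist is built from a source other than the search result itself, such as a vendor's documentation or an internal software catalog.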

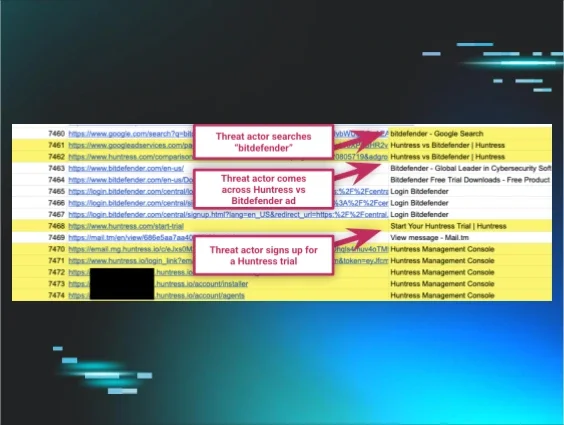

When “helpful” AI answers become the attack

The next step isn’t just getting users to download fake AI tools. It’s also about getting them to trust the instructions these tools provide.

In a recent AMOS stealer campaign, users searching for something as routine as “clear disk space on macOS” were served results that looked like ChatGPT or Grok conversations. The pages were structured exactly the way people expect an AI answer to look: clean formatting, step-by-step explanations, and Terminal commands presented as normal system maintenance.

Nothing about the response felt sketchy. A user followed the instructions, ran the command, and that was enough to expose credentials, compromise the system, and install persistent malware.
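A simple habit that helps here is to inspect a command before pasting it into Terminal. The snippet below is a rough sketch of a pre-paste check. The patterns are illustrative assumptions about what risky one-liners often look like, not the actual commands used in this campaign, and the names `RISKY_PATTERNS` and `flag_command` are hypothetical.

```python
import re

# Illustrative heuristics only: these are assumptions, not indicators from the campaign.
RISKY_PATTERNS = {
    "pipes a download into a shell": re.compile(
        r"\b(curl|wget)\b[^|\n]*\|\s*(sudo\s+)?(ba|z)?sh\b"
    ),
    "decodes base64 and executes it": re.compile(
        r"base64\s+(-d|-D|--decode)\b.*\|\s*(sudo\s+)?(ba|z)?sh\b"
    ),
    "runs AppleScript from the command line": re.compile(r"\bosascript\b"),
}


def flag_command(command: str) -> list[str]:
    """Return the reasons a pasted command looks risky (empty list if none match)."""
    return [
        reason
        for reason, pattern in RISKY_PATTERNS.items()
        if pattern.search(command)
    ]
```

An empty result does not mean a command is safe. A check like this can only catch patterns someone thought to list, so it is a prompt to pause and read, not a replacement for endpoint detection.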

What matters here isn’t that an AI system did something wrong. It’s that attackers have recreated the experience of getting help from one to launch an attack.

As Chris Henderson, CISO at Huntress, said:

“Most incidents don’t look like attacks at first. They look like normal activity—until the context doesn’t make sense.”

That’s exactly what’s happening here. The attack doesn’t stand out because it blends in with a user’s normal online activity. It looks like the kind of AI answer you’d expect, delivered in the format you trust, for a task you perform all the time.

Phishing as a product: Infrastructure at machine speed

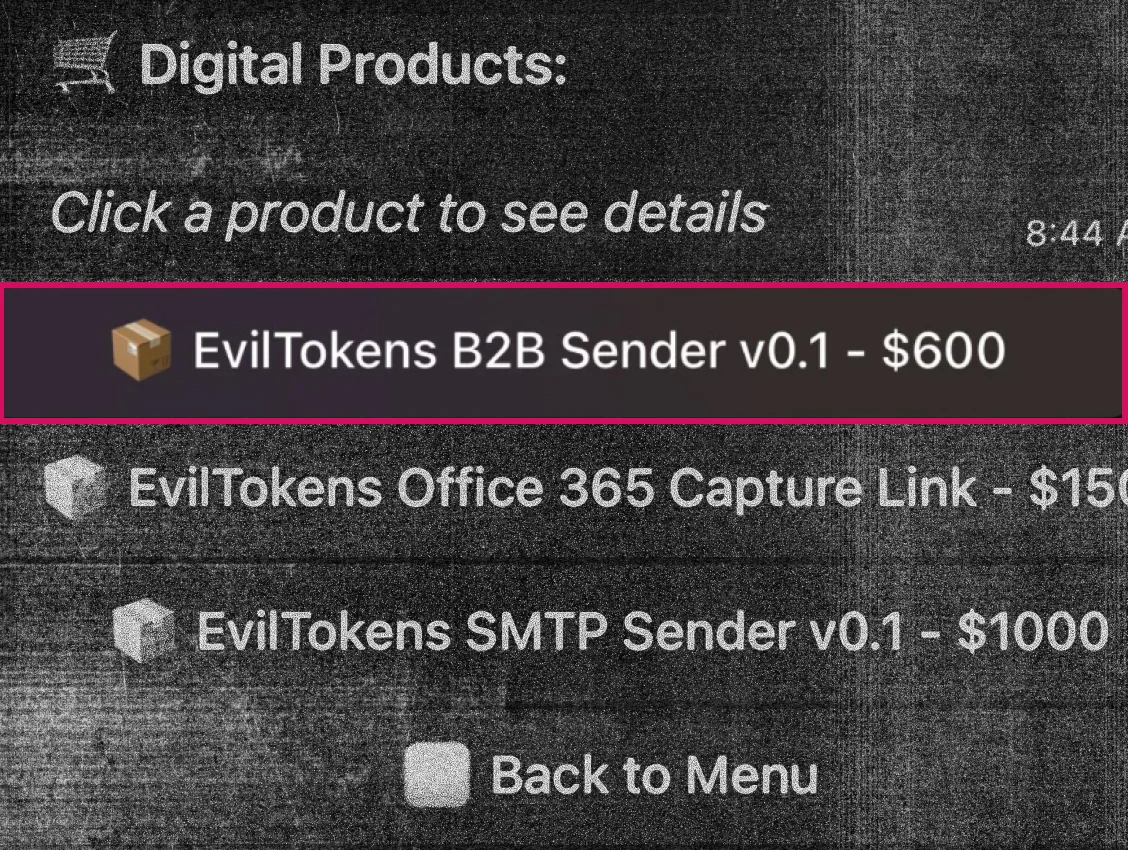

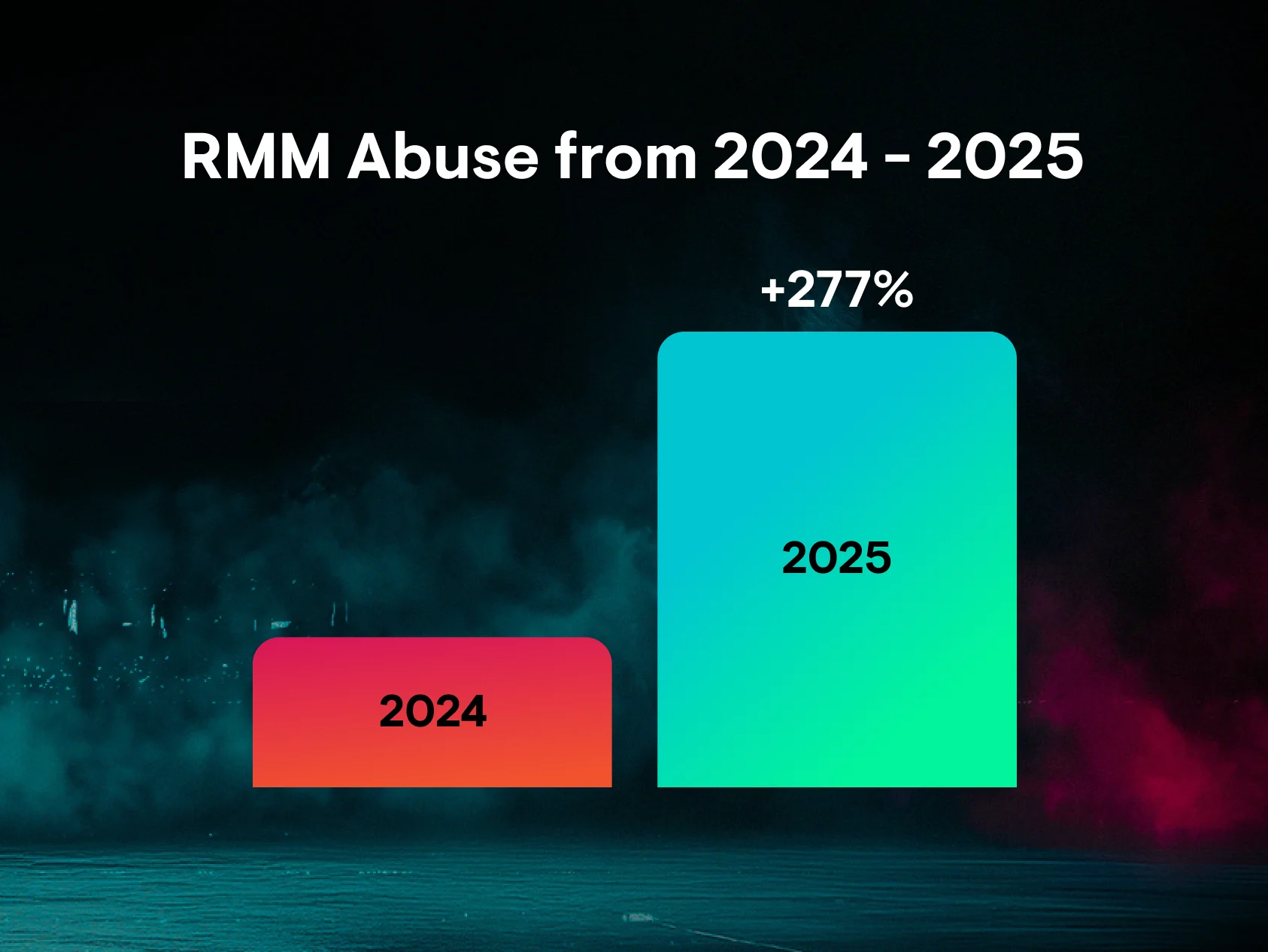

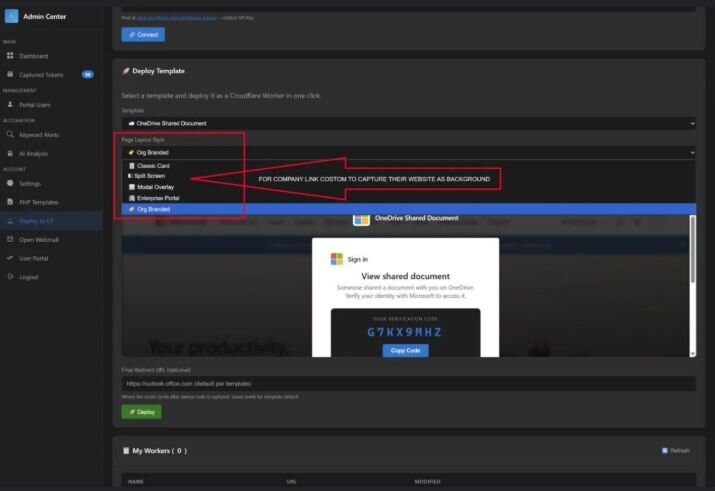

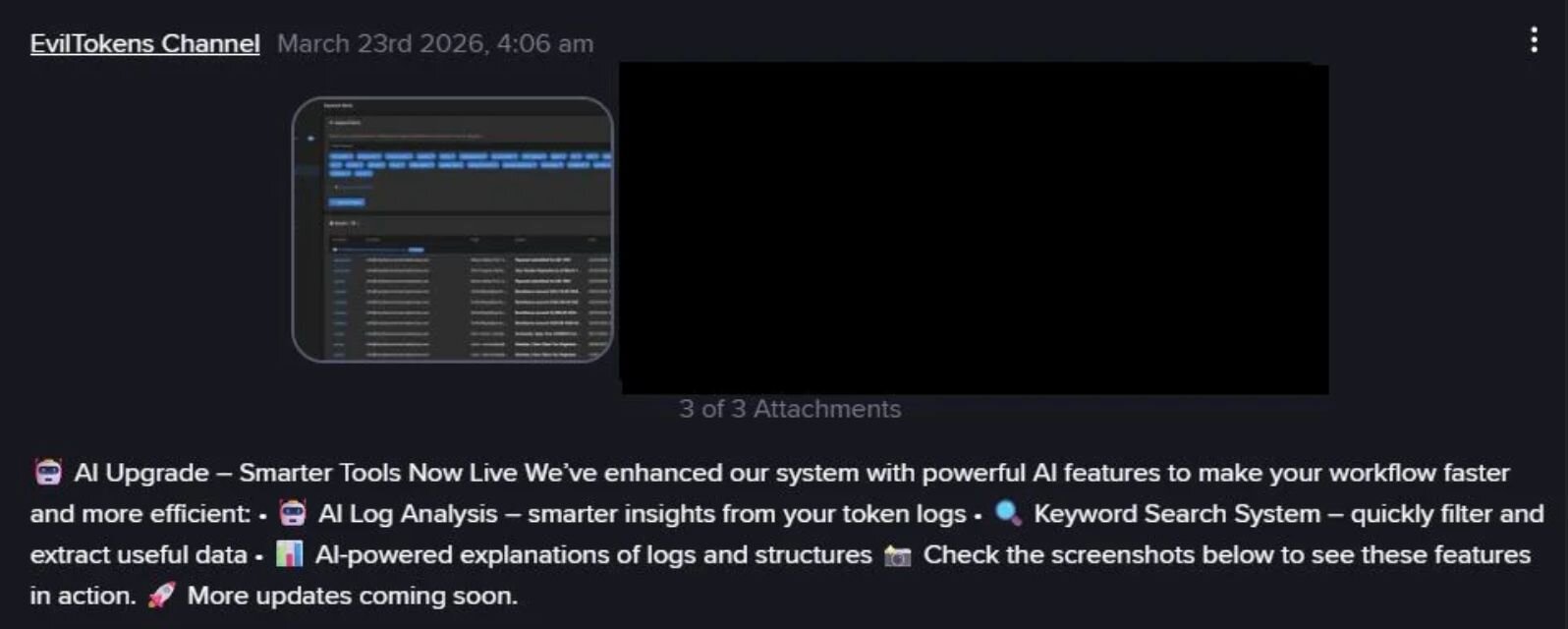

Attackers aren’t just hacking anymore. They’re running full-scale product launches, building and selling systems that can be reused, scaled, and adapted in real time. Operations like EvilTokens highlight that shift.

Figure 2: EvilTokens product store

By early 2026, attackers were already using platforms like Railway to spin up session token theft infrastructure on demand, impacting hundreds of organizations globally in a matter of weeks.

But the infrastructure is only part of the story. As attacks become productized, the phishing lures themselves evolve just as quickly.

Instead of relying on reusable templates that get flagged by email filters, attackers generate messages tailored to each target and aligned to that target’s role, tools, organization, and workflows. For example, both the B2B Sender and Capture Link products in EvilTokens support AI workflows that help bypass email filtering, tailor phishing lures, and find sensitive emails for wire fraud or data exfiltration. Each interaction feels normal because it closely mirrors how the victim’s workflows actually operate.

AI also helps attackers correlate signals such as user behavior, authentication flows, SaaS activity, and trusted infrastructure in real time, then package them into experiences that look and behave like normal business operations.

When attacks are built on trusted platforms and delivered through expected workflows, the line between legitimate and malicious activity blurs. In that environment, the attack doesn’t need to break authentication. It only needs to operate within it.

And when infrastructure, lures, and execution can all be generated on demand at scale, phishing stops being a campaign and becomes a system that runs at machine speed, designed to blend in so well that nothing looks wrong until it is too late.

The throughline

It’s tempting to frame all of this as simply “AI-powered attacks.” But there's more to it than that.

AI isn’t the weapon in these stories. Attackers are shaping workflows around systems people already trust, and AI is accelerating how quickly those workflows can scale and adapt.

We see that in different forms. Sometimes it’s mimicking AI-generated answers. Sometimes it means showing up in search results for AI tools. And sometimes it’s abusing identity systems at scale using infrastructure that blends into normal traffic.

The approaches are different, but the outcomes are the same: faster, more scalable, and stealthier paths to compromise that don’t look like attacks at all.

The goal for defenders is to build resilient teams that can catch what tools miss and respond before an intrusion becomes a crisis.

Join us for a special session with Microsoft

On May 5 at 3:00pm ET, we're hosting EvilTokens: Big Cybercrime’s AI Platform Built to Bypass Your MFA, a special virtual session on this exact topic featuring:

- Casey Smith, Principal Researcher, Identity Threats, Huntress
- Sherrod DeGrippo, Partner GM of Global Threat Intelligence, Microsoft

We'll be breaking down the Railway device code phishing campaign, what it means for identity security, and how defenders can get ahead of AI-accelerated attack infrastructure. Register now.