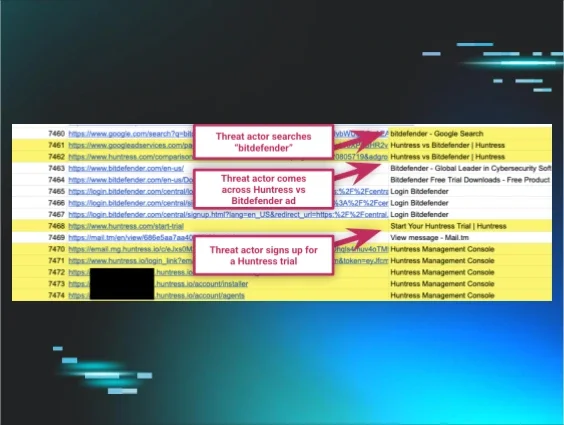

Most people expect scams and hacks to show up in their inbox or DMs, not in a place they’ve been conditioned to trust, like the top of page one on Google. But when the trap shows up somewhere that familiar, even the brightest among us can get caught off guard.

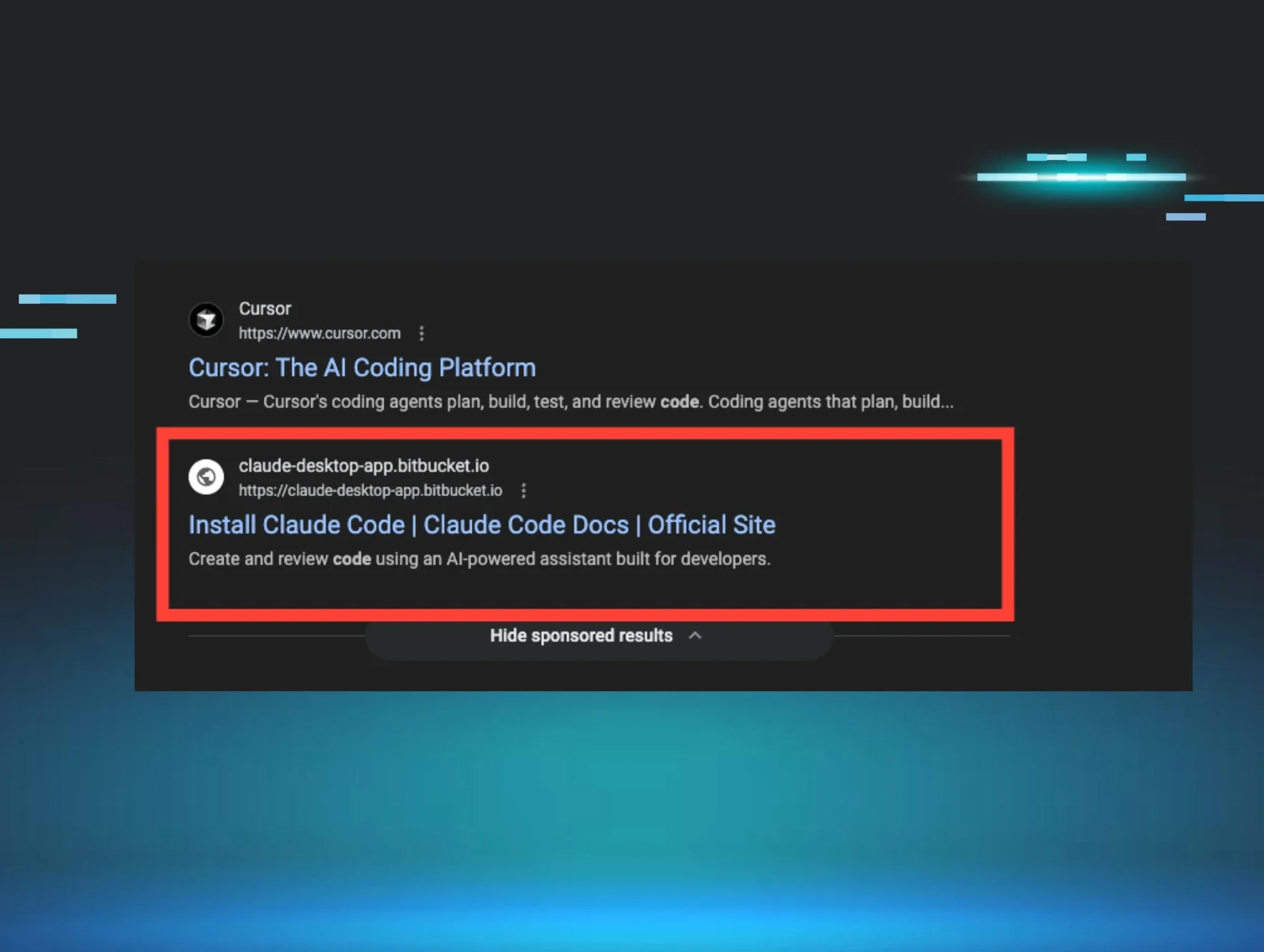

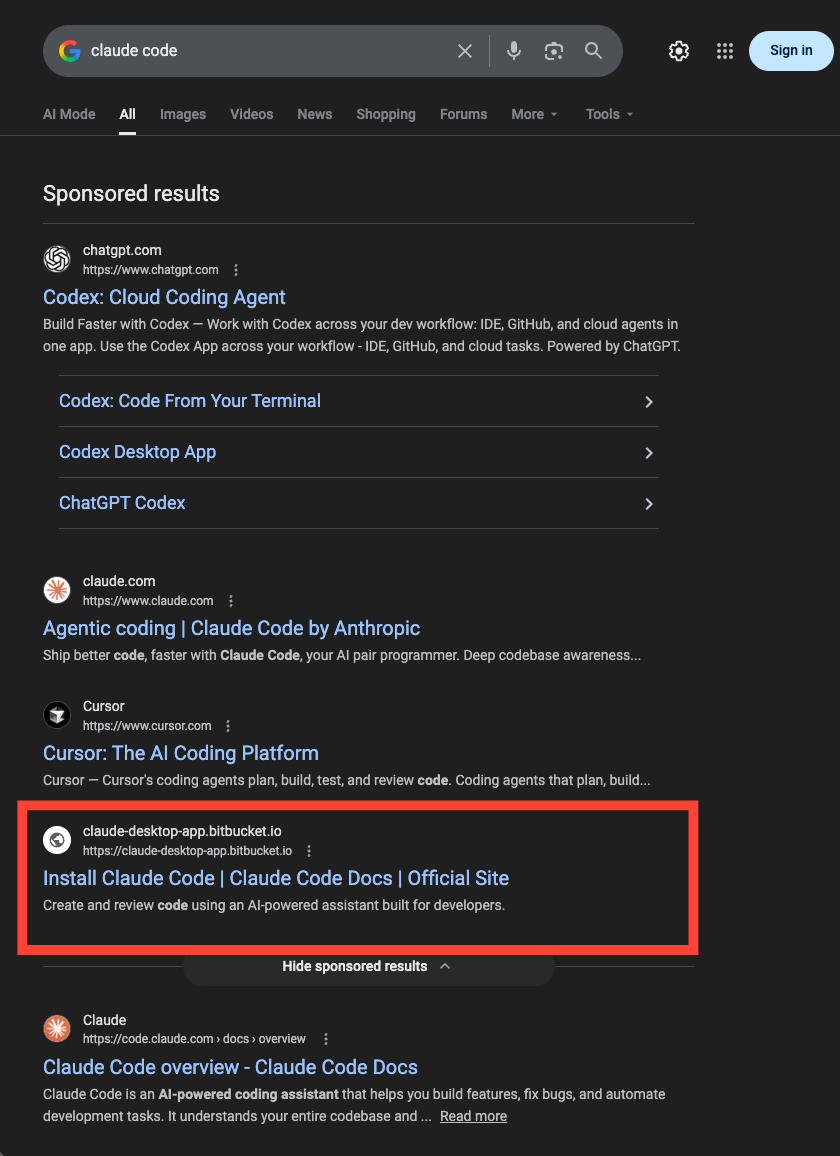

Not too long ago, a Huntress engineer opened Google and searched for “Claude Code.” He clicked the top sponsored result, and knew within seconds something was wrong. He shut his MacBook and reported it immediately. By then, the malware was already running.

As our CSO, Eric Stride, put it:

“Most people don’t expect the top Google result to be malicious, but occasionally, it is.”

A “sponsored” result at the top of a page still carries a lot of built-in credibility. If it looks familiar, many of us will assume it’s safe and keep moving.

Not surprisingly, attackers understand that habit well. They know they don’t have to fool you for long. They understand your user journey, and they know you’re likely only skimming for results that appear right. They need to set up a page that looks just normal enough for you to trust it in the middle of a busy day. With AI, this has never been easier.

The legitimate search and the questionable result

When somebody with deep security experience can get caught this way, it underscores how broad, efficient, and polished this tradecraft has become.

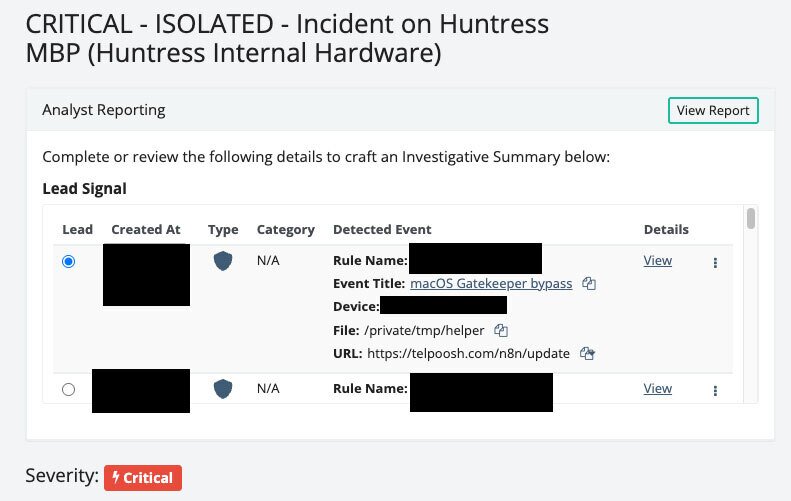

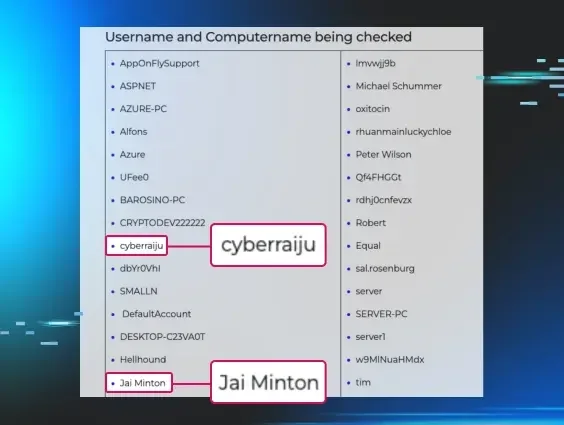

In this case, a credential-stealing script began running on the engineer’s MacBook. It used base64 encoding and gzip compression to disguise what it was doing. Once decoded, it pulled down a second payload, marked it executable, and launched it.
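For readers who want to see what that kind of layering looks like from the analyst's side, here is a minimal Python sketch of peeling one base64 plus gzip layer off a string. It is an illustration, not the actual sample or our tooling: the helper names `decode_layer` and `encode_layer` and the placeholder command are all assumptions, and the blob is a harmless one built on the spot.

```python
import base64
import gzip


def decode_layer(blob: str) -> str:
    """Undo one base64 + gzip layer and return the text underneath."""
    compressed = base64.b64decode(blob)
    # errors="replace" keeps the output readable if the payload is not clean UTF-8.
    return gzip.decompress(compressed).decode("utf-8", errors="replace")


def encode_layer(text: str) -> str:
    """Build a harmless blob the same way, so the decoder can be checked."""
    return base64.b64encode(gzip.compress(text.encode("utf-8"))).decode("ascii")


# Hypothetical stand-in for an obfuscated command; not taken from the incident.
sample = encode_layer("echo 'placeholder second-stage command'")
print(decode_layer(sample))
```

Only decode a suspicious blob like this in an isolated analysis environment, and read the result rather than run it.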

That payload went straight for the macOS keychain, specifically Claude Code credentials. Our tooling flagged the activity as illegitimate. The malware was also running an obfuscated AppleScript to make its behavior harder to read. Each layer was there for a reason.

Huntress incident report depicting adversary activity

The attackers were after credentials and the access they provide, like source code, proprietary product data, and internal systems. They didn’t get them. That’s because the engineer caught and reported the mistake immediately, and our SOC was already moving.

When he called to report what happened, the SOC was opening a ticket based on an alert the malware had already triggered. The SOC moved quickly to rotate credentials and review the logs. By the time the team worked through what had happened, there was no sign the attackers had tried to use the credentials they were after.

So why are we telling this story? Because it’s moments like this where your business’s resilience gets put to the test. Because transparency is more useful than sanitized perfection. A prominent security engineer clicked the wrong link. It happens. What matters is what an organization does in the seconds, minutes, and hours after.

A resilient security program doesn't assume people won't make mistakes. It assumes they will, and it's ready before they do. That's what made the difference here: purpose-built technology and the people around it moving fast and without ego. The setup protecting a cybersecurity company is the same setup protecting yours.

There's an old line in security you’ve certainly heard: “It's not if you'll get hit, but when.” In 2026, that's not enough anymore. The bar is higher. It comes down to how you respond, and whether the people around you are part of the solution.

What matters is what an organization does in the seconds, minutes, and hours after—especially for vectors as prevalent as malvertising.

The engineer at the center of this story raised his hand immediately. That’s what we call an “ethical badass.” He didn’t let ego get the best of him, and he didn’t try to quietly fix it himself.

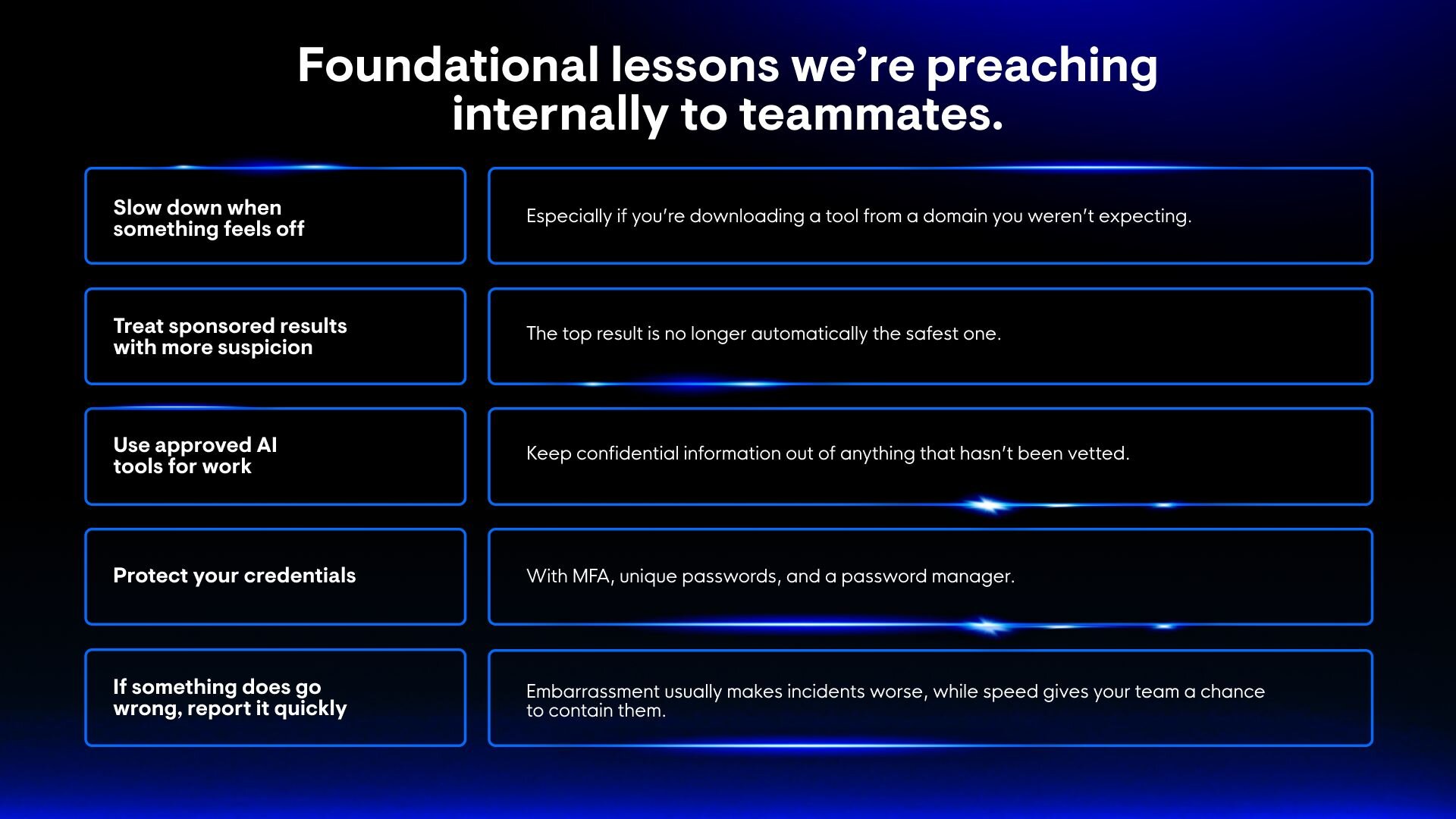

That instinct matters as much as any tool. Phishing lures are getting better by the day, and the only thing keeping pace is a culture where quick reporting is celebrated, not punished. Destigmatizing the oops is overdue. That starts with us.