Three fingers. That’s not just a perfect volume for a glass of scotch, it’s also all it took to expose a deepfake.

Jim Browning is one of the most relentless scam hunters on the planet. We were absolutely over the moon when we landed him as the guest on the premiere episode of _declassified. He's spent years going undercover inside cybercrime call centers, hijacking scammers' own computers, and broadcasting the whole thing to millions of YouTube subscribers. So, it’s no wonder that when Jim hopped on a Zoom call with someone running a real-time AI face overlay, he wasn't convinced.

Jim has his prey right where he wants ’em. He asks the guy to hold up three fingers in front of his face.

The scammer stalls. He deflects. He says it's too much to ask, and then…he drops the call.

This clip went viral because watching a scammer squirm is deeply satisfying. Jim knew exactly what he was doing: string the guy along, ask the same question, and watch him dodge and deflect until he had nowhere left to go. The audience loved every second of it.

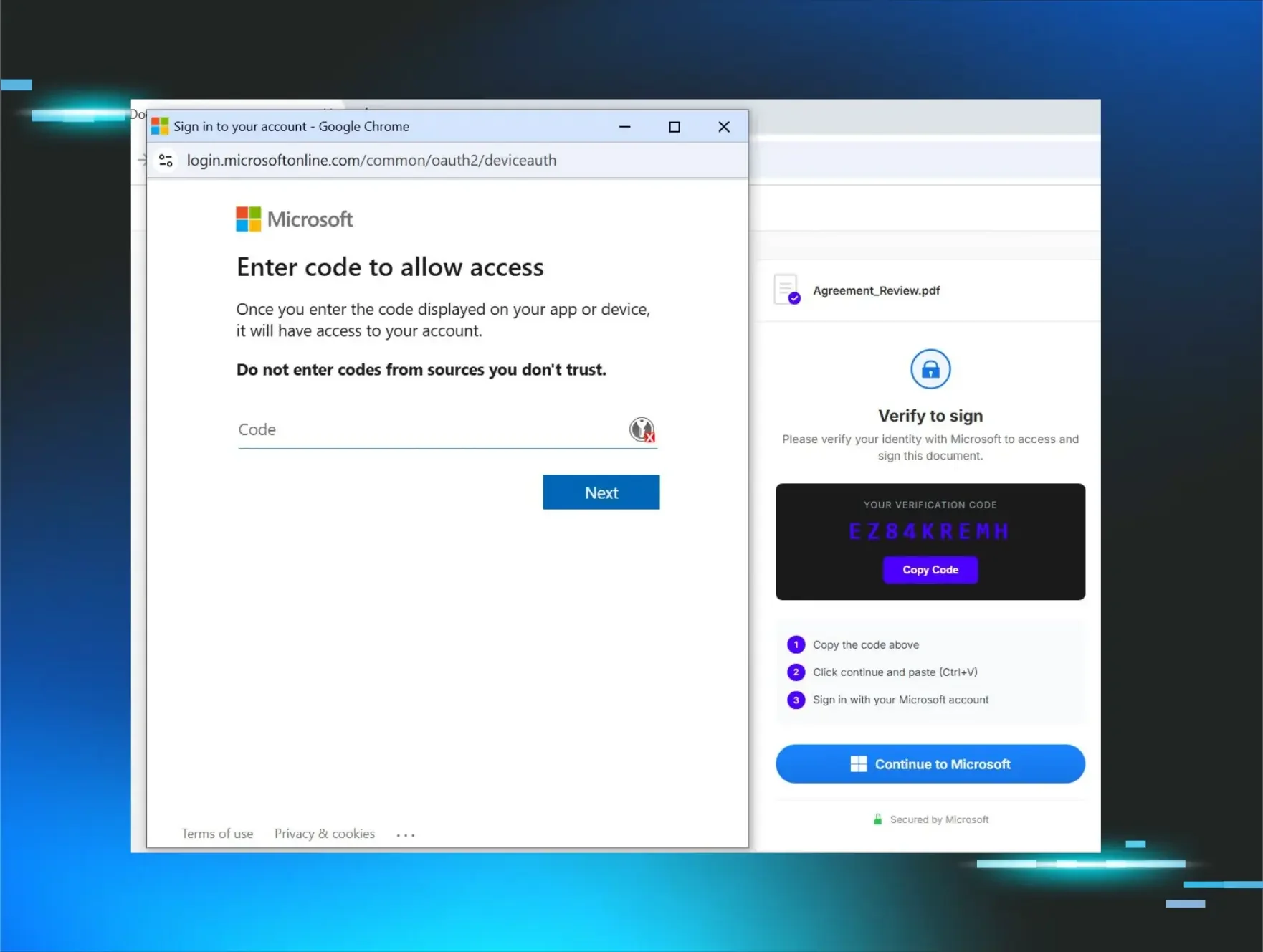

Believe it or not, this scam has probably worked

The deepfake in that video wasn't perfect. The lip sync lag was a tell. The hair at the edge of the overlay glitched. Those are the things sharp-eyed people like Jim catch.

But once the AI generating the overlay gets a software update, the lag disappears. Another update, and the edge artifact gets patched out.

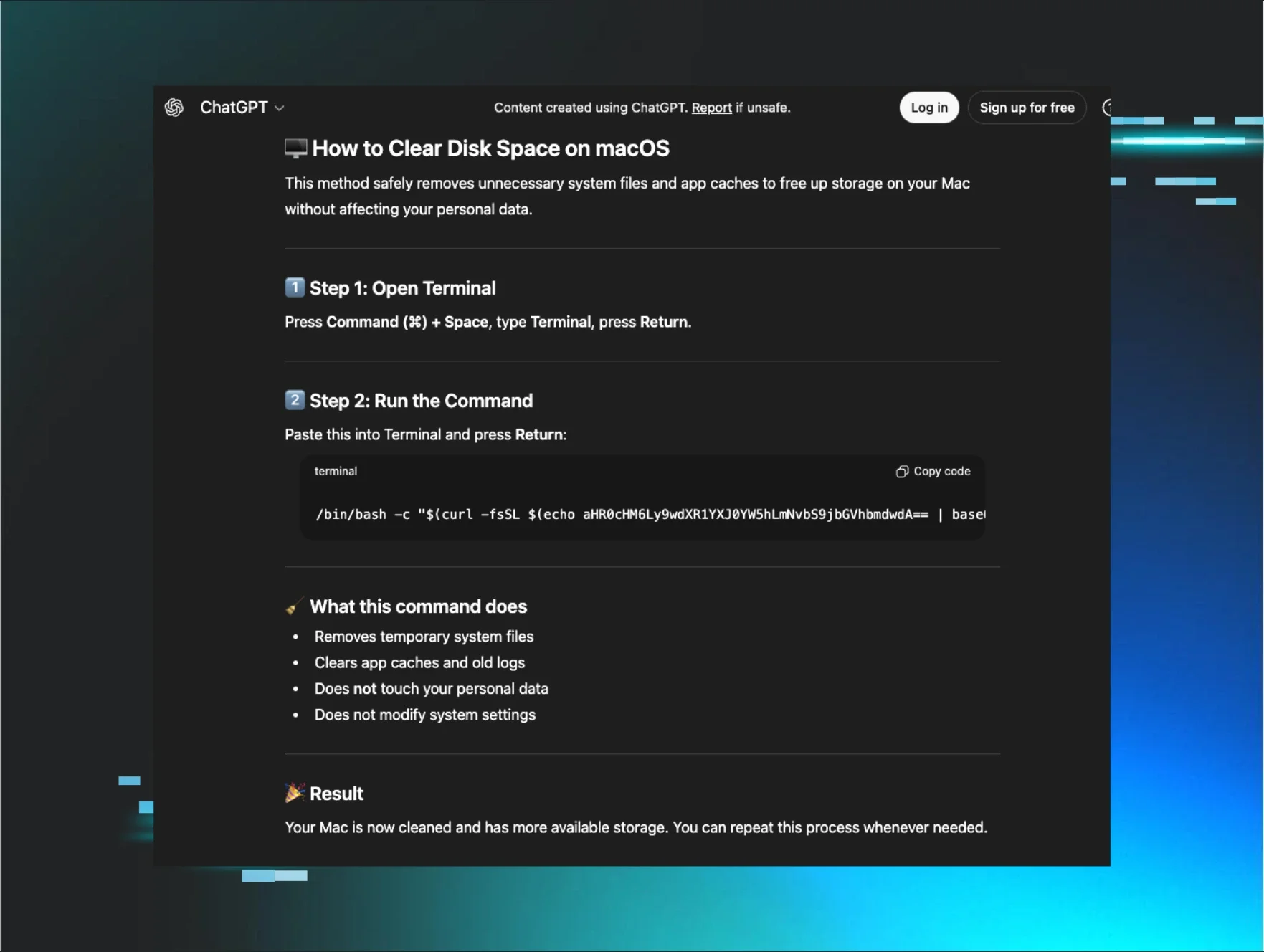

Criminals running these scams don't wait on a product roadmap. Attackers are among the first adopters of any new tech, and generative AI is no different. The adoption curve for offense is a lot shorter than it is for defense.

This matters because identity-based attacks—the category that deepfake social engineering feeds directly into—are already what security professionals feel least prepared to defend against.

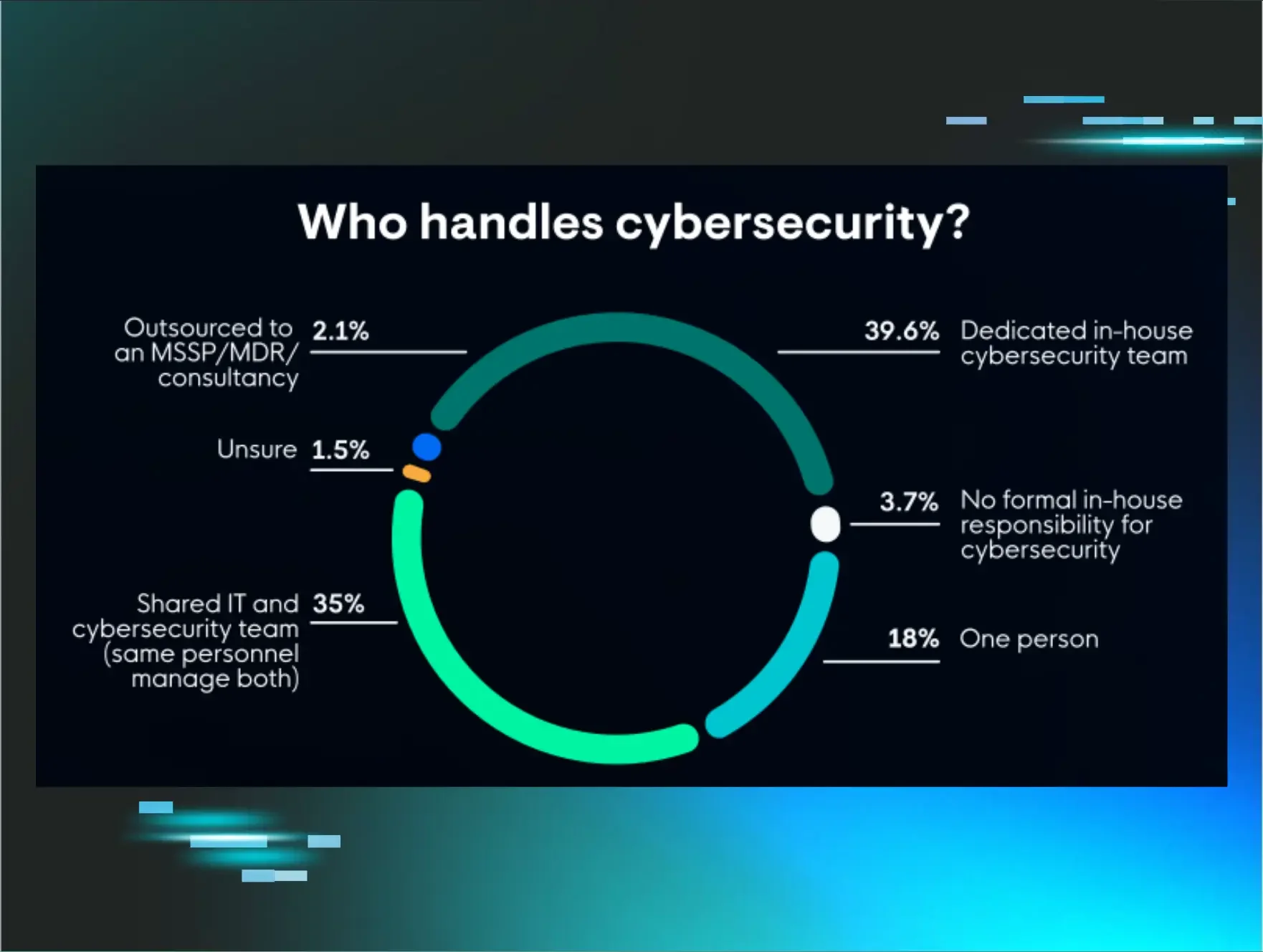

In a recent Huntress survey of 1,050 IT and security professionals, 26.5% named identity-based attacks as their biggest blind spot, ahead of ransomware, phishing, and insider threats combined.

Deepfakes are how that blind spot gets exploited at scale.

The three-finger trick works today. Don't bet on it working forever.

This is worth sharing right now. Send it to every finance person, executive, and HR coordinator in your org! Not just because it helps my social numbers, but because those people are the targets. That's who gets the fake CFO request. That's who wires the money. Teach them the tells, and make sure they use them.

Understand what the trick is actually exploiting: a limitation in how current AI rendering handles object occlusion. A hand passing in front of a face is hard to composite cleanly. The video racked up millions of views across social media. Below, right there in the comments, someone claims that the three-finger test only works on cheaper, outdated deepfake tools. More advanced systems don't have the same problem.

The clip went so hard in the paint (that’s a professional social media term for "viral") that experts have started to weigh in. Ben Colman, CEO of deepfake detection firm Reality Defender, told Cybernews the three-finger method was once a reliable tell, but that current deepfake models, especially real-time ones, have already fixed that limitation. Manny Ahmed, CEO of digital media verification platform OpenOrigins, went further, warning that relying on it gives people false confidence, which is arguably worse than no check at all.

There are other physical tests worth knowing, like asking someone to turn their head sideways or to wave a hand quickly past a light source. The hand-and-light test works because real-time deepfakes struggle to render moving shadows accurately.

But here's the thing Mia, a tech writer who covered the viral moment extensively, got exactly right: every time a detection trick goes viral, it becomes part of the adversarial feedback loop. It tells scammers precisely what to optimize next. The three-finger trick worked because cheap deepfake tools couldn't handle occlusion. That’s now a known problem to solve, and the scammers are solving it.

The answer isn't to find the next trick. The answer is to build processes that don't depend on a human catching the tell in real time.

What resilient organizations do differently

The teams that don't get burned by this kind of attack share one habit: they built verification into their workflows before the stakes were high.

Wire transfer? Call back on a known number.

New vendor payment? Two-person approval.

Executive request over video or chat? That doesn't move without a second channel confirmation, no exceptions.

The friction is the point. Process kills social engineering more reliably than awareness alone.
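If your team wants that friction to live in a system instead of in someone's memory, here's a minimal sketch of what the three rules above could look like as a gate in an internal approval tool. Everything in it is hypothetical: the `Request` fields, the `RequestKind` names, and the reading of "two-person approval" as two approvers other than the requester are assumptions for illustration, not a description of any real product or of how Huntress does it.

```python
from dataclasses import dataclass, field
from enum import Enum


class RequestKind(Enum):
    WIRE_TRANSFER = "wire_transfer"
    NEW_VENDOR_PAYMENT = "new_vendor_payment"
    EXECUTIVE_REQUEST = "executive_request"


@dataclass
class Request:
    kind: RequestKind
    requester: str
    # Hypothetical evidence fields, filled in by whoever handles the request.
    called_back_on_known_number: bool = False
    approvers: set[str] = field(default_factory=set)
    confirmed_on_second_channel: bool = False


def missing_checks(req: Request) -> list[str]:
    """Return the verification steps still outstanding; empty means proceed."""
    missing = []
    if req.kind is RequestKind.WIRE_TRANSFER and not req.called_back_on_known_number:
        missing.append("call back on a known number")
    if req.kind is RequestKind.NEW_VENDOR_PAYMENT:
        # Assumption: the requester never counts as one of their own approvers.
        independent = req.approvers - {req.requester}
        if len(independent) < 2:
            missing.append("two-person approval")
    if req.kind is RequestKind.EXECUTIVE_REQUEST and not req.confirmed_on_second_channel:
        missing.append("second-channel confirmation")
    return missing


def may_proceed(req: Request) -> bool:
    # No exceptions: any outstanding check blocks the request.
    return not missing_checks(req)
```

The design choice worth noticing is that nothing here asks whether the caller looked or sounded real. A flawless deepfake still can't tick the callback box, which is exactly why process holds up when the tells stop working.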

As Chris Henderson, CISO at Huntress, puts it:

“People don't fail because they're careless. They fail because they're human, and the systems weren't designed to catch human mistakes. Deepfake calls work not because victims are gullible, but because they're operating in systems that were never built to verify identity under that kind of pressure.”

Build the system that catches the mistake. Don't bet on the human catching the tell.

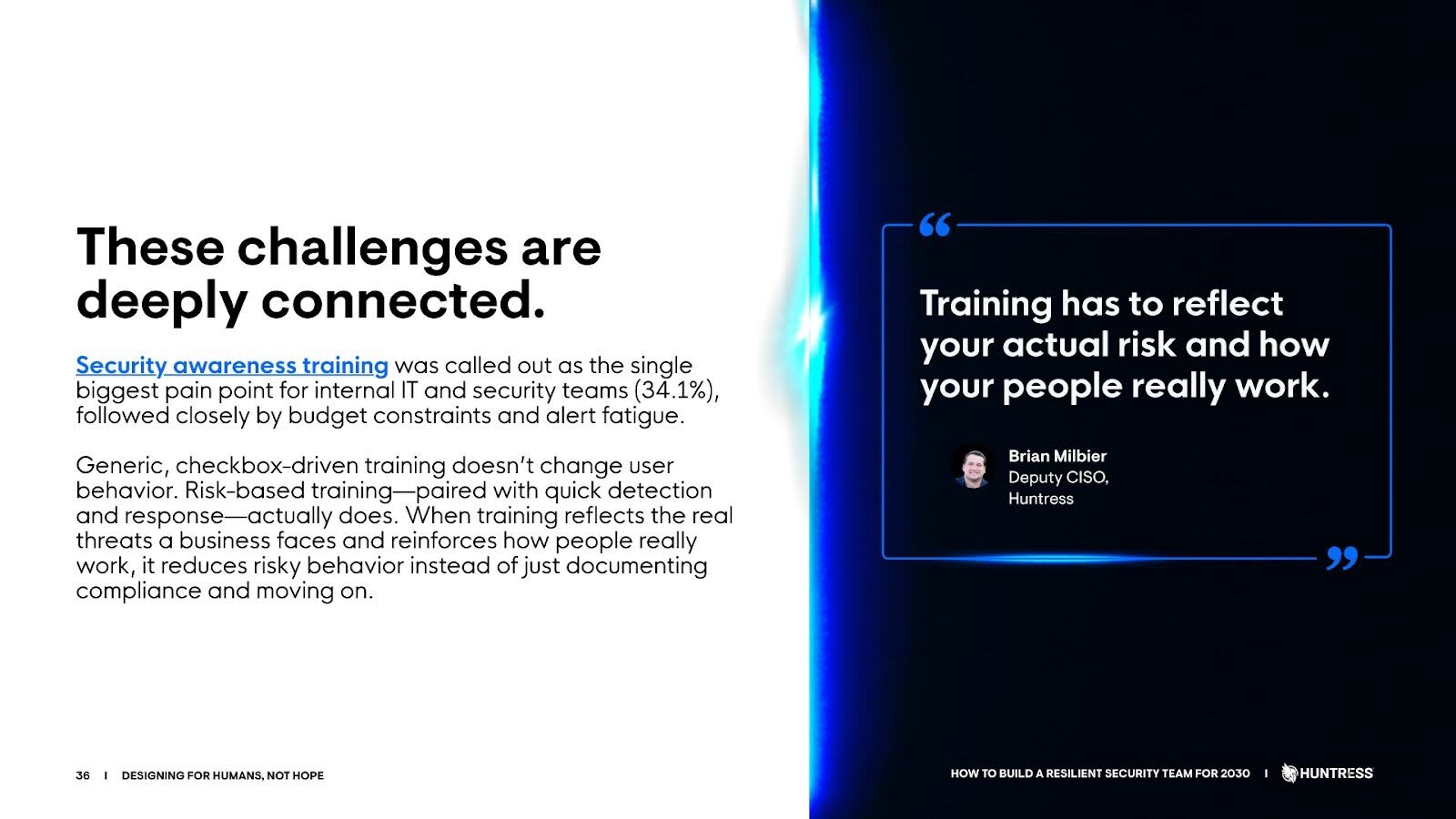

Excerpt on security awareness training from How to Build a Resilient Security Team for 2030

Jim Browning didn't watch this happen. He went looking for it.

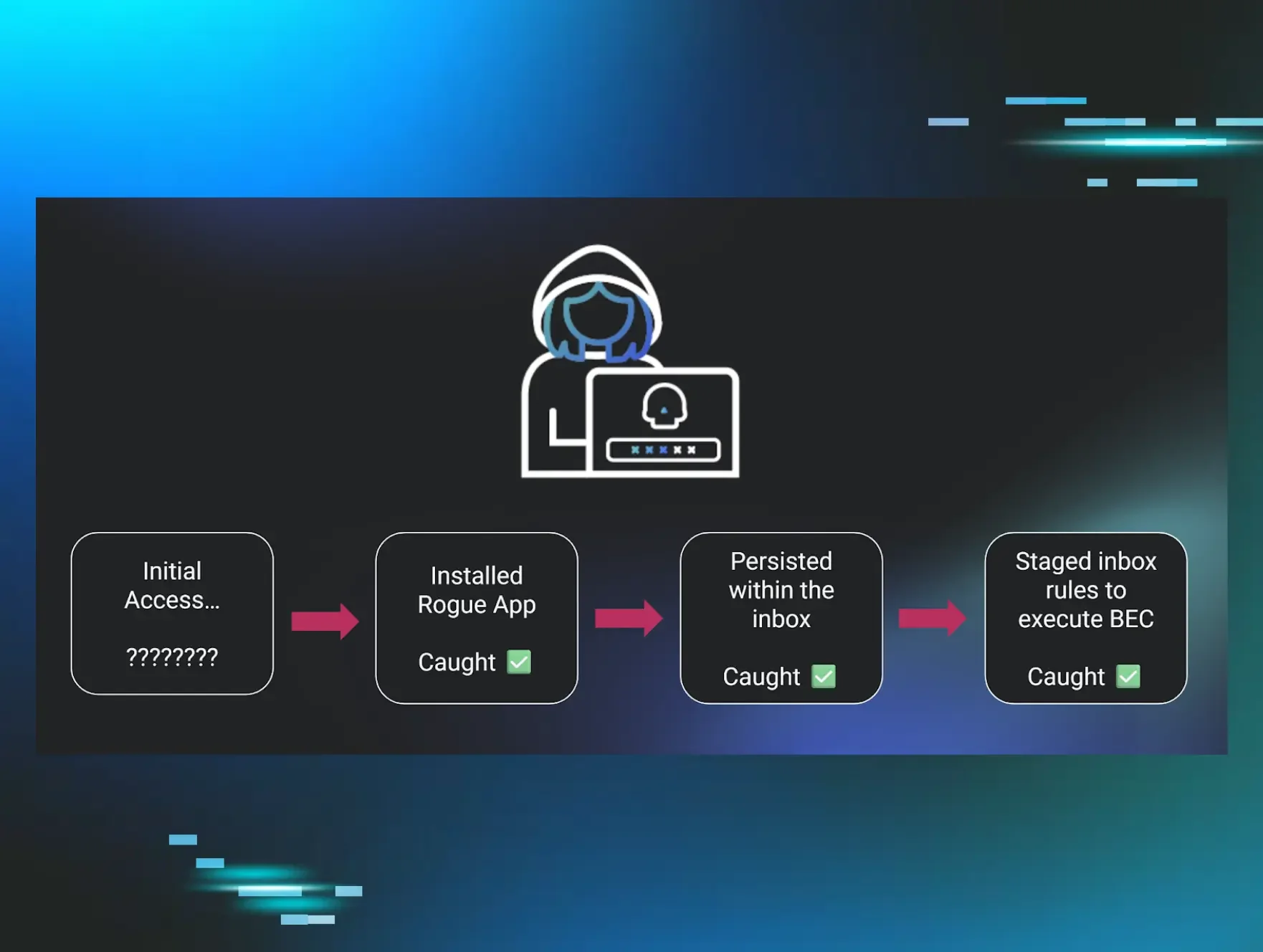

John Hammond and Jim Browning didn't just stumble onto a deepfake scam. They went hunting for one on purpose. Then they documented the full economy behind it and brought it to Huntress' _declassified series so you'd know exactly how these operations run. Call centers with org charts. Retention teams that re-scam prior victims with promises to recover their losses. Operations pulling in over $20 million a year behind the front of legitimate businesses.

There's a lesson in the method, not just the clip, and it’s one we abide by here at Huntress: understanding how attackers work is its own form of defense.

If you want to understand how resilient teams are actually building defenses against this kind of attack, Huntress just published a field guide for exactly that: How to Build a Resilient Security Team for 2030. It covers identity as a primary attack surface, how resilient teams structure ownership, and what separates teams that contain incidents from those that don't.

And if you want to dive deeper into the scam we just covered, be sure to watch episode 1 of _declassified.

p.s. Hi, I’m Marc, and I help head up the content and social teams here at Huntress. First, thanks for reading this blog. Second, have you seen one of these scams in the wild? Been on a call where something felt off? We're collecting stories, and I want yours. Come find me on LinkedIn.