At Huntress, we love to thread and share our investigative approaches to our interesting findings internally so other teams can see what we’re up to and learn a thing or two.

In this blog, we’ll go on a short journey of how we dissected a vague Managed Antivirus alert, and along the way, offer some ideas and methods for security analysts everywhere. We’ve got a good balance of Huntress-specific approaches and methods that anyone can deploy for any machine. (Insert cheesy image of a team high-five here.)

Defender Says Whaaaaaaaaat?

Huntress has long stood on the Soapbox of Justice to advocate for everyone ingesting the alerts from their AV of choice. (Are you picturing us standing atop a building with a cape flapping in the wind yet??)

Now, whilst antivirus (AV) doesn’t sound super l33t, we swear by a good AV, and we’ve shared elsewhere that often AV contains all the secrets to illuminate an adversary’s activities; you just have to roll up your sleeves and query the alert data.

At Huntress, we roll out Managed AV, and through that, Defender gives us a nudge every now and again to say, “something is going down here.” Now don’t sleep on MAV; it catches the big bad ugly ones like Cobalt Strike as much as it catches adware. The trick we’ve found for MAV—or any security product, truth be told—is to prune. Be selective in the alerts that are made salient.

The Huntress approach drastically prunes detection noise so that only the finest of threat signals end up in front of Huntress SOC analysts, and, subsequently, the partner. A tradeoff of delivering this low-noise security efficacy is that it requires some upfront time and effort investment to hammer out detections and prune back the false positives / lackluster alerts from generating needless noise.

Anyway, let's get off that soapbox and get into some threat data. 👿💪

The Possibilities of an Alert

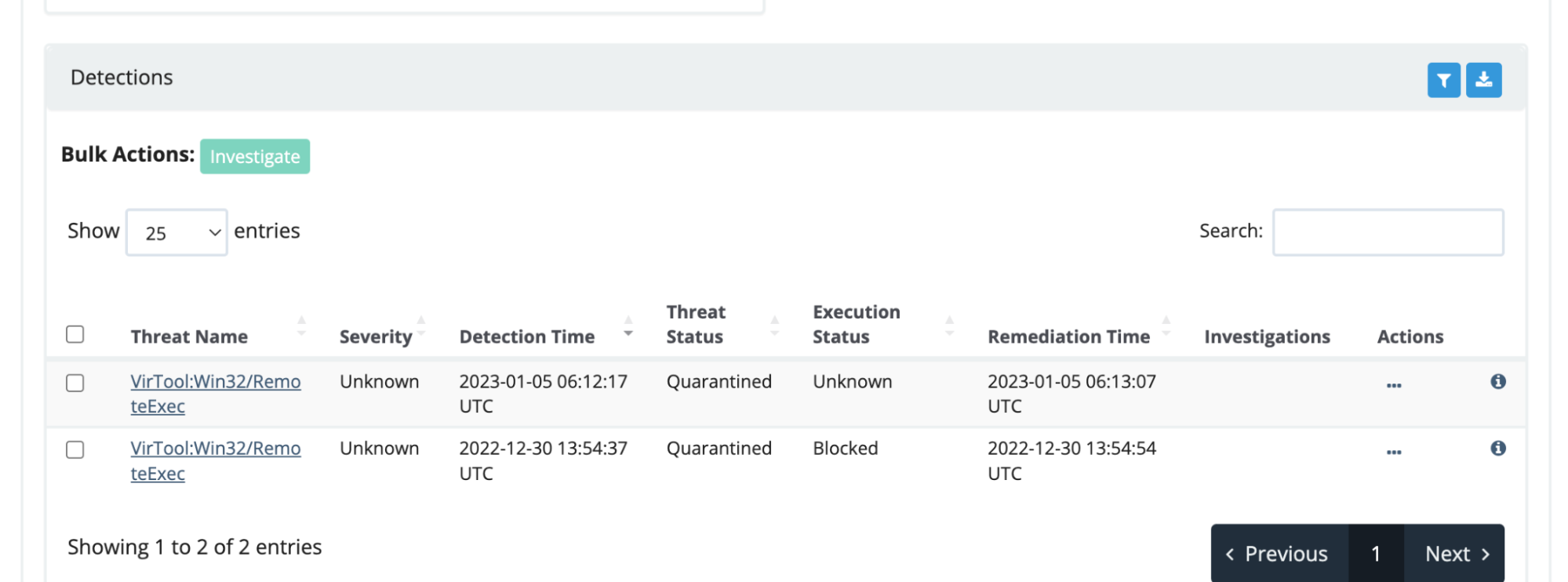

This morning the queue advised bright and early that a machine received a threat signal which Defender had neutralized and taxonomized as ‘remoteexec’.

An examination of the alert’s details gives SOME information, but it doesn’t really reveal the nature of the threat, just the form (an .exe). Instantly, a threat analyst’s mind adopts the lens of investigative theory to ascertain what the data is telling us as well as the hidden threads waiting to be pulled on.

For example, this MAV alert states the offending account was “SYSTEM,” but there will be a user underneath this. Where do we need to go to find out?

Furthermore, randomly named executables in C:\windows are a staple for lateral movement or remote beacons - seemingly corroborated by Defender’s cataloged name for this threat as RemoteExec. But, to be frank, we’ve seen just as strange alerts resolve as false positives, and whilst SOC analysts love reporting threats, we don’t love reporting false positives.

TL;DR: human context is the differentiator Huntress brings to the community.

Sure, Huntress EDR combined with an automation or two catches some real gnarly stuff, but it’s the human operator that makes a whole world of difference. Inundating a partner with endless noisy reports that are vague or vainly technical is a pet peeve of mine. As a ThreatOps Manager, I obsess over making sure that, along with the tech used in detecting malice, a threat analyst also investigates the validity of said detection and then curates a simple, concise report that conveys actionable details and data.

Pivoting from the Alert

Tell you what we’ll do; let’s put the EDR telemetry aside and instead share methods and techniques agnostic to Huntress’ stack as that’s gonna be more useful for folk who don’t work at Huntress (yet 😉). And by the way, we regularly share Huntress-agnostic approaches to solving security intrusions, so we’re not just showing off for the blog!

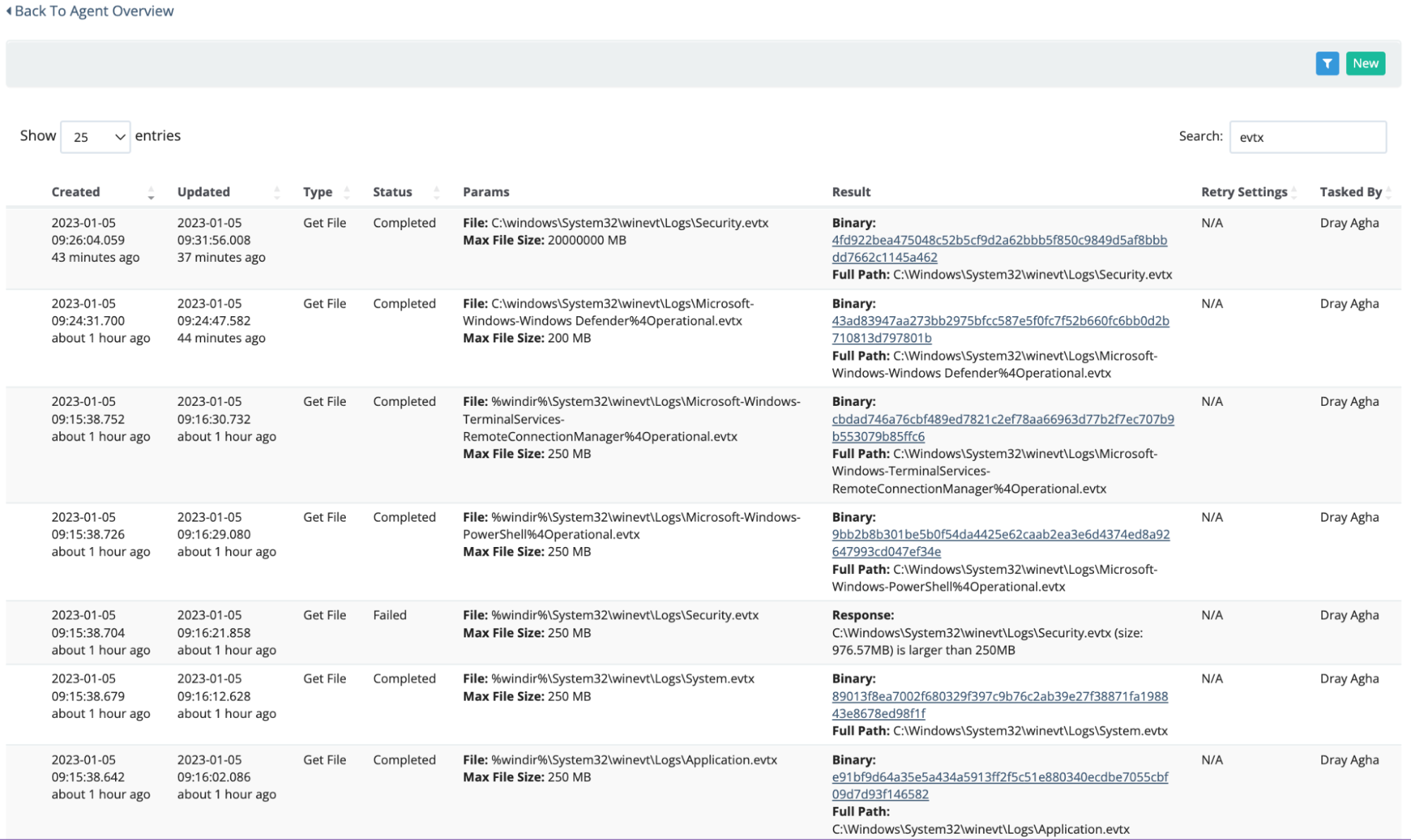

After receiving an alert like this, our first moves will be to collect some choice default forensic telemetry on a Windows machine:

- The Windows Event Logs (WEVTXs)

- Prefetch and PowerShell history

  - Nothing ended up being relevant in here, but we were looking for evidence that any of those EXEs in the MAV alert executed, as well as other suspicious executables in the time window and for evidence of any PowerShell the adversary may have run.

If you’re interested in knowing a bit more about these artifacts, do we have a webinar for YOU!

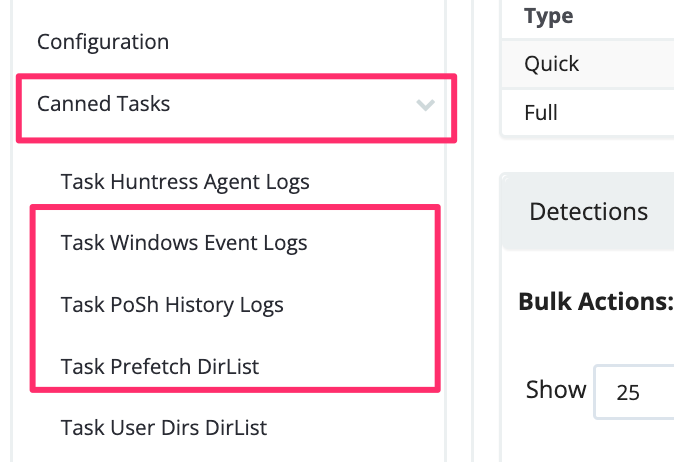

Huntress already has dedicated ‘canned’ tasks that make life easier for us when it comes to collecting these in one click.

We’ll focus on the Windows Event logs, as those yielded the most relevant data. Sure, some security vendors get a lil nervous when it’s time to leverage Event logs for security analysis, but at Huntress, it really is second nature. 😜

We can use Chainsaw, which essentially allows us to quickly ‘grep’ through the WEVTXs. Chainsaw does have a summary ‘hunt’ mode, but don’t rely on just this feature alone. Instead, Chainsaw’s search mode is an advanced and surgical way to deploy the tool. Moreover, if you’re looking to slice quicker through WEVTXs with specific queries, Harlan’s Event Ripper will be a treat - especially if you’re battling Qakbot.

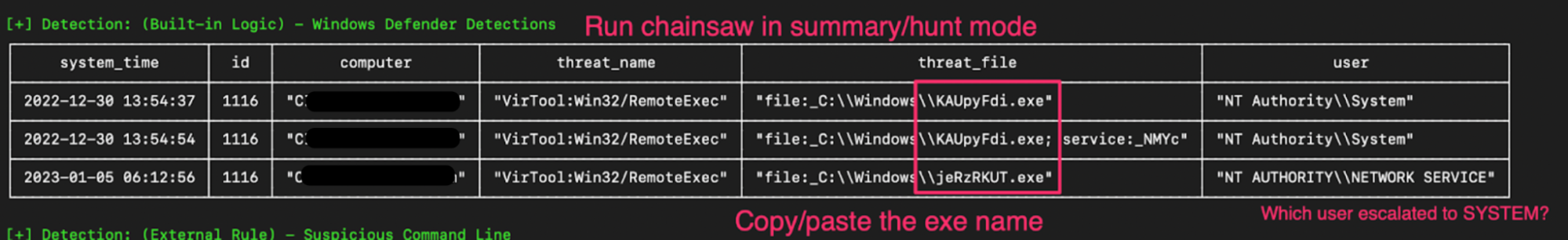

We can start by running Chainsaw in ‘hunt’ mode, which offers us a sigma-rule-based summary of what’s going on in this data. But there are some limitations with this method.

- The major one is that not every result will be malicious. A discerning analyst can sift through the noise, but it’s still not always clear what is ultimately legitimate.

- Also, sigma rules aren’t going to be perfect, and not all malicious data or relevant data will be included in the hunt mode. An analyst who relies on the hunt summary data is probably missing out on the remaining 80% of the threat data from the intrusion.

Nonetheless, Chainsaw’s hunt mode rips through the Windows Defender WEVTX. Here, we’re given some more context on the Defender alerts we saw in the Huntress dashboard. Now we can copy/paste the name of the executable and prepare to detonate Chainsaw in search mode.

Ask Specific Questions

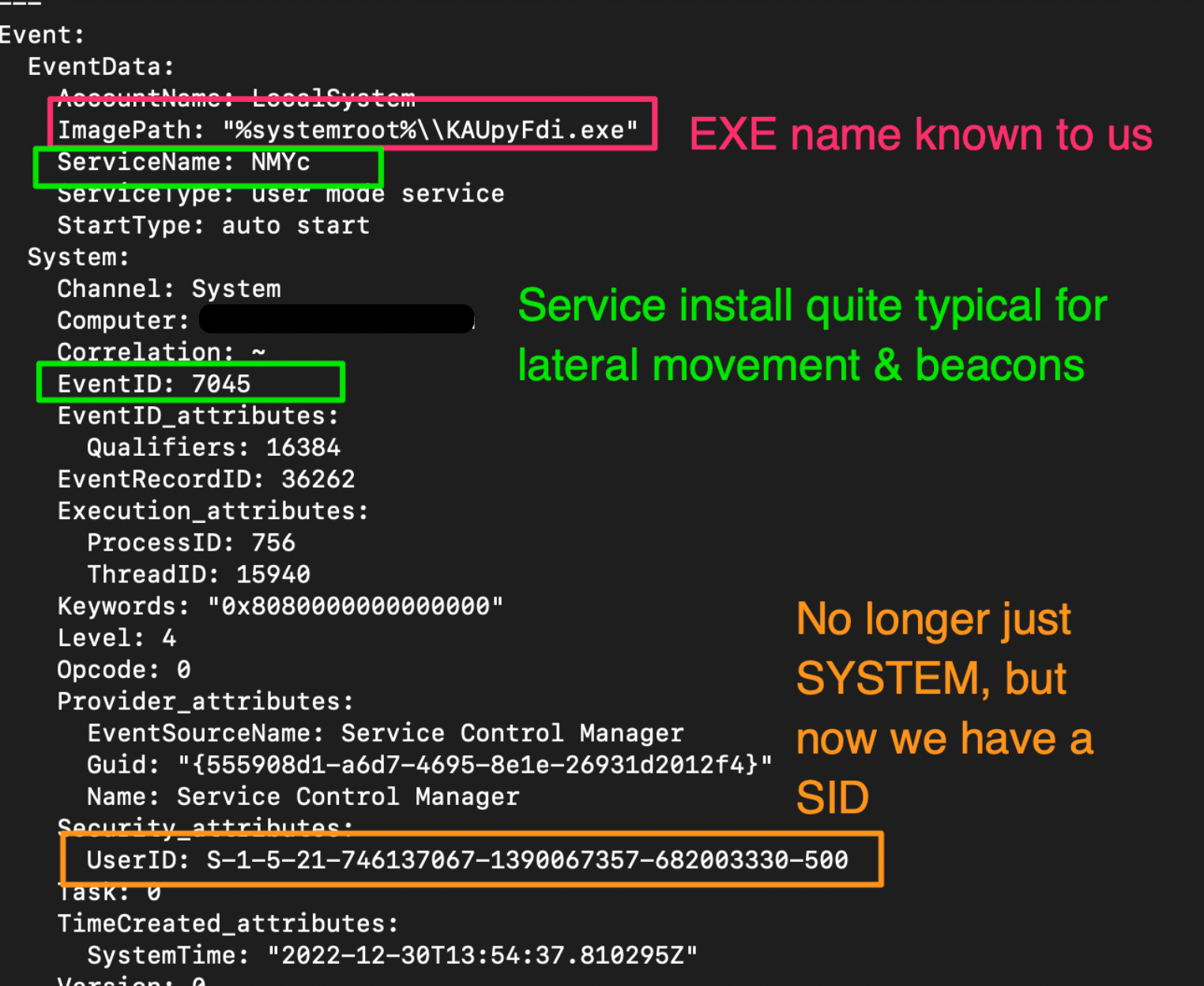

Running `chainsaw search ./ -s "KAUpyFdi" -i` will essentially ‘grep’ through our collected WEVTXs, and when that string appears in one of the event entries, the entire XML is printed for us.
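If you want a feel for what that search is doing, here is a minimal Python sketch of the same idea: a case-insensitive substring match that returns the whole record when it hits. This is not how Chainsaw is implemented. The `EVENTS` list and the `search_events` helper are hypothetical, and the two records are trimmed-down placeholders rather than real log entries.

```python
import re

# Hypothetical, trimmed-down records standing in for exported WEVTX entries.
EVENTS = [
    '<Event><System><EventID>7045</EventID></System>'
    '<EventData><Data Name="ImagePath">%SystemRoot%\\KAUpyFdi.exe</Data></EventData></Event>',
    '<Event><System><EventID>4624</EventID></System>'
    '<EventData><Data Name="TargetUserName">Administrator</Data></EventData></Event>',
]


def search_events(events, needle, ignore_case=True):
    """Return every record that contains `needle`, keeping the full XML intact."""
    flags = re.IGNORECASE if ignore_case else 0
    # re.escape treats the needle as a literal string, not a regular expression.
    pattern = re.compile(re.escape(needle), flags)
    return [event for event in events if pattern.search(event)]


# Lowercase on purpose: the match should still land, like a case-insensitive search.
hits = search_events(EVENTS, "kaupyfdi")
for hit in hits:
    print(hit)
```

Returning the whole record rather than the matching line is the useful part: the interesting context usually sits in the fields around the string you searched for.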

One of the XML results was incredibly interesting.

Here, we corroborated our earlier thoughts when we saw the MAV alert and wondered if this was some kind of service install. Event ID 7045 is a signpost for a service install.

In addition to the service install info, we also get greater clarity on the whole SYSTEM account thing. We get a User SID—a unique string assigned to a user account on a Windows machine.
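To make that concrete, here is a rough sketch of pulling the useful bits out of a 7045 record with Python's standard library. The sample XML is a hypothetical stand-in shaped like a Windows event: the SID and timestamp are borrowed from the search strings in this post, while the service name, image path and account name are placeholders, not values from the real alert. `summarize_service_install` is a made-up helper name.

```python
import xml.etree.ElementTree as ET

# Namespace used by Windows event XML.
NS = {"e": "http://schemas.microsoft.com/win/2004/08/events/event"}

# Hypothetical 7045 record; ServiceName, ImagePath and AccountName are placeholders.
SAMPLE_7045 = """<Event xmlns="http://schemas.microsoft.com/win/2004/08/events/event">
  <System>
    <Provider Name="Service Control Manager"/>
    <EventID>7045</EventID>
    <TimeCreated SystemTime="2022-12-30T13:54:37.000000Z"/>
    <Security UserID="S-1-5-21-746137067-1390067357-682003330-500"/>
  </System>
  <EventData>
    <Data Name="ServiceName">KAUpyFdi</Data>
    <Data Name="ImagePath">%SystemRoot%\\KAUpyFdi.exe</Data>
    <Data Name="AccountName">LocalSystem</Data>
  </EventData>
</Event>"""


def summarize_service_install(xml_text):
    """Return the key fields of a 7045 record, or None for any other event ID."""
    root = ET.fromstring(xml_text)
    system = root.find("e:System", NS)
    if system.findtext("e:EventID", namespaces=NS) != "7045":
        return None
    # Flatten the <Data Name="..."> elements into a plain dict.
    data = {d.get("Name"): (d.text or "") for d in root.findall("e:EventData/e:Data", NS)}
    return {
        "time": system.find("e:TimeCreated", NS).get("SystemTime"),
        "user_sid": system.find("e:Security", NS).get("UserID"),
        "service_name": data.get("ServiceName"),
        "image_path": data.get("ImagePath"),
        "account_name": data.get("AccountName"),
    }


summary = summarize_service_install(SAMPLE_7045)
print(summary)
```

Note where the SID lives: it is an attribute on the `Security` element in the `System` block, not one of the `EventData` fields, which is why it is easy to miss when skimming.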

But we have some outstanding thoughts:

- What is the corresponding user name for this SID?

- Was the executable that Defender detected attempting a service install some kind of beacon or lateral movement relic?

Tracking Down Lateral Movement

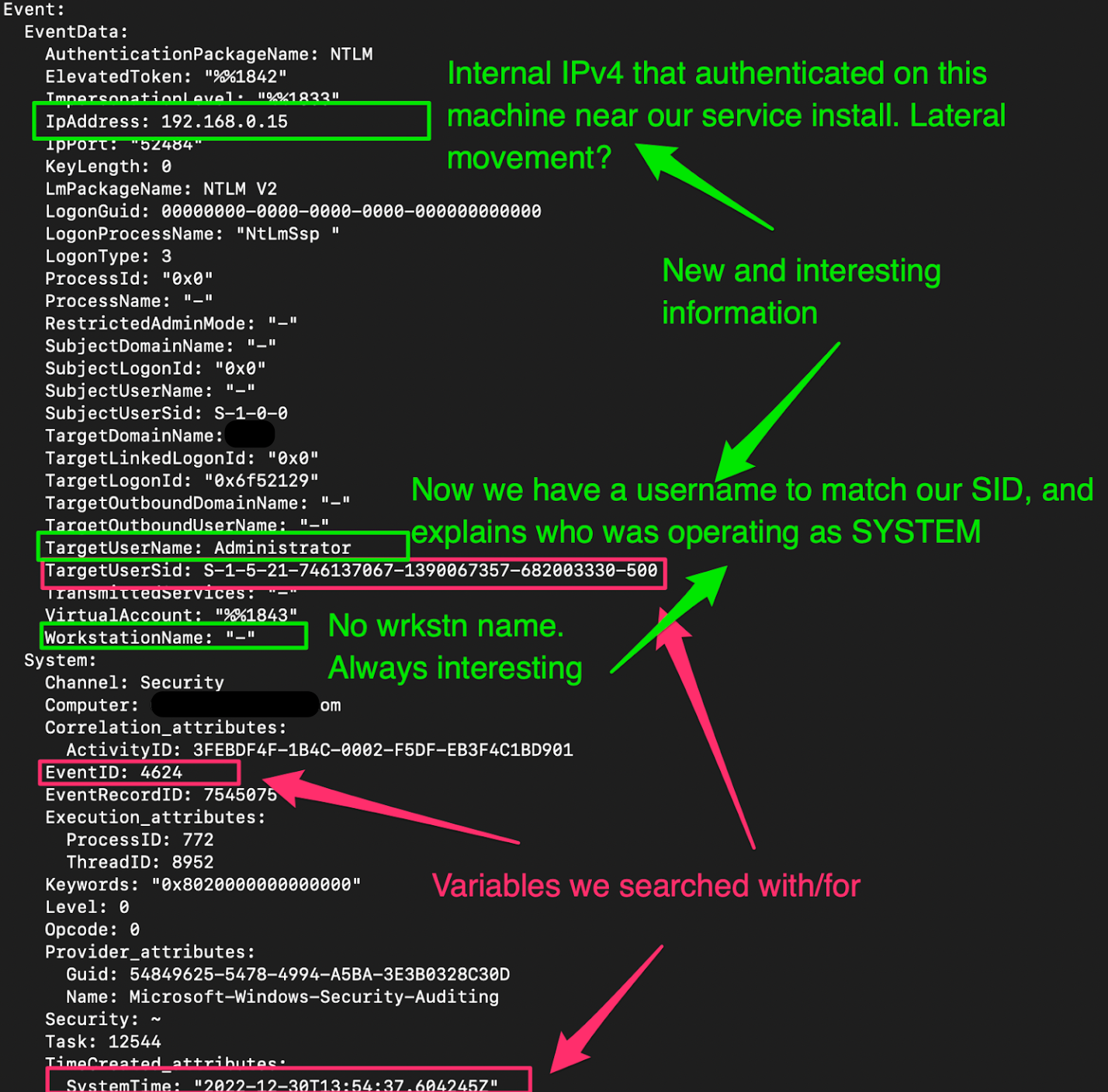

Now, we need a username and to see if there is any Event ID 4624 evidence of a machine that this user account moved across from.

But, to be clear, 4624 data and timestamps have to be closely correlated if we want to claim evidence of lateral movement. If there is a login 30 minutes prior to the service install, that is a tenuous link to lateral movement. What we are looking for is a 4624 as close to the service install as possible, maybe even essentially simultaneous.
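As a quick illustration of that "how close is close enough" reasoning, here is a small sketch that keeps only the logons landing within a few seconds of the service install. The five-second window, the `logons_near` helper and both logon timestamps are assumptions made up for the example; pick a window that suits your own environment.

```python
from datetime import datetime, timedelta


def logons_near(service_install_time, logon_times, window_seconds=5):
    """Keep only the logons within `window_seconds` of the service install."""
    window = timedelta(seconds=window_seconds)
    return [t for t in logon_times if abs(t - service_install_time) <= window]


# Timestamp taken from the search string in this post; the logons are illustrative.
install = datetime.fromisoformat("2022-12-30T13:54:37")
logons = [
    datetime.fromisoformat("2022-12-30T13:24:10"),  # roughly 30 minutes earlier: tenuous
    datetime.fromisoformat("2022-12-30T13:54:36"),  # one second earlier: worth a look
]

close = logons_near(install, logons)
print(close)
```

A tight window keeps the claim honest: the wider you make it, the more unrelated logons get swept in and the weaker the link to the service install becomes.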

We can use Chainsaw again, searching a timestamp incredibly close to the earlier identified service install (EventID 7045), and filtering by 4624 successful authentication.

`chainsaw search ./ -s "2022-12-30T13:54:37" -e 4624 -s "S-1-5-21-746137067-1390067357-682003330-500" -i`

We get results back that corroborate things we already knew but offer some really insightful new findings:

- Our timestamp for the 4624 authentication is essentially the same as for the 7045 service install. Compounded with the Logon Type, this is indicative of lateral movement.

- We have leapfrogged from understanding the offending account as SYSTEM, then just having the SID, and now finally we have an account name: Administrator

- We do not get a workstation (host/machine) name, but we do get an internal IPv4
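Those three findings all come out of the `EventData` block of a 4624 record. The sketch below shows one way to extract them with Python's standard library. The sample record is hypothetical: the account name, SID and IP match what is described above, but the Logon Type is not stated in this post, so the value 3 (Network) is an assumption for illustration. The logon type names follow Microsoft's documented values, and a SID ending in -500 is the well-known RID for the built-in Administrator account. `summarize_logon` is a made-up helper name.

```python
import xml.etree.ElementTree as ET

NS = {"e": "http://schemas.microsoft.com/win/2004/08/events/event"}

# Logon type values as documented by Microsoft for event 4624.
LOGON_TYPES = {
    2: "Interactive", 3: "Network", 4: "Batch", 5: "Service", 7: "Unlock",
    8: "NetworkCleartext", 9: "NewCredentials", 10: "RemoteInteractive",
    11: "CachedInteractive",
}

# Hypothetical 4624 record; LogonType 3 is an assumption, not taken from the alert.
SAMPLE_4624 = """<Event xmlns="http://schemas.microsoft.com/win/2004/08/events/event">
  <System><EventID>4624</EventID></System>
  <EventData>
    <Data Name="TargetUserSid">S-1-5-21-746137067-1390067357-682003330-500</Data>
    <Data Name="TargetUserName">Administrator</Data>
    <Data Name="LogonType">3</Data>
    <Data Name="WorkstationName">-</Data>
    <Data Name="IpAddress">192.168.0.15</Data>
  </EventData>
</Event>"""


def summarize_logon(xml_text):
    """Pull the fields an analyst cares about out of a 4624 record."""
    root = ET.fromstring(xml_text)
    data = {d.get("Name"): (d.text or "") for d in root.findall("e:EventData/e:Data", NS)}
    logon_type = int(data["LogonType"])
    workstation = data.get("WorkstationName", "-")
    sid = data.get("TargetUserSid", "")
    return {
        "account": data.get("TargetUserName"),
        "sid": sid,
        "logon_type": LOGON_TYPES.get(logon_type, f"Unknown ({logon_type})"),
        # Windows writes "-" when it has no workstation name to record.
        "workstation": None if workstation in ("", "-") else workstation,
        "source_ip": data.get("IpAddress"),
        # RID 500 is the built-in Administrator account.
        "builtin_admin": sid.endswith("-500"),
    }


logon = summarize_logon(SAMPLE_4624)
print(logon)
```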

Cool, so our hypothesis based on the evidence so far suggests:

- Someone controlling the Administrator account deployed some kind of lateral movement from 192.168.0.15 to the machine.

- This lateral movement led to an attempted service install that Defender neutralized.

Reviewing the Huntress agents installed in this network, none report having that private IPv4.

This means one of the following:

- This organization uses dynamic IP allocation, and therefore what was 192.168.0.15 has now changed ➡️ possible, but unlikely

- We do not have an agent on the machine with the internal IPv4 192.168.0.15 ➡️ most likely.
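If you keep any kind of asset list, this check is easy to script. The sketch below is a hypothetical stand-in for that lookup: `AGENT_INVENTORY` and its hostnames are invented for the example (this is not a Huntress API), and `triage_source_ip` is a made-up helper. It simply confirms the address is private and reports which known hosts, if any, claim it.

```python
import ipaddress

# Hypothetical inventory of hosts with an agent installed, keyed by hostname.
AGENT_INVENTORY = {
    "WS-01": "192.168.0.21",
    "SRV-01": "192.168.0.10",
}


def triage_source_ip(ip_text, inventory):
    """Report whether the address is private and which known hosts hold it."""
    ip = ipaddress.ip_address(ip_text)
    known_hosts = sorted(
        name for name, addr in inventory.items() if ipaddress.ip_address(addr) == ip
    )
    return {"is_private": ip.is_private, "known_hosts": known_hosts}


result = triage_source_ip("192.168.0.15", AGENT_INVENTORY)
print(result)
```

An empty `known_hosts` list does not tell you which of the two explanations above is true. It only tells you that nothing you currently manage owns up to the address, which is exactly the gap worth raising with the partner.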

So, does that mean 192.168.0.15 is the adversary’s machine?

What Was the Defender Alert?

So now our minds are really ticking. Let's review our findings.

A LOT of offensive security tools use this lateral movement for all kinds of moves—we’re talking Impacket, Cobalt Strike, Havoc—all the cool kids. We’re seeing weird, sus activity indicative of the above malice with a privileged account (Administrator) who is acting from a machine we do not have an agent on (192.168.0.15).

But equally, we lack a lot of evidence to make some claim here. We can’t rule that this is malicious. We’ve leveraged a constellation of different artifacts, but we’re not really able to make an authoritative claim that this is evil or adversarial.

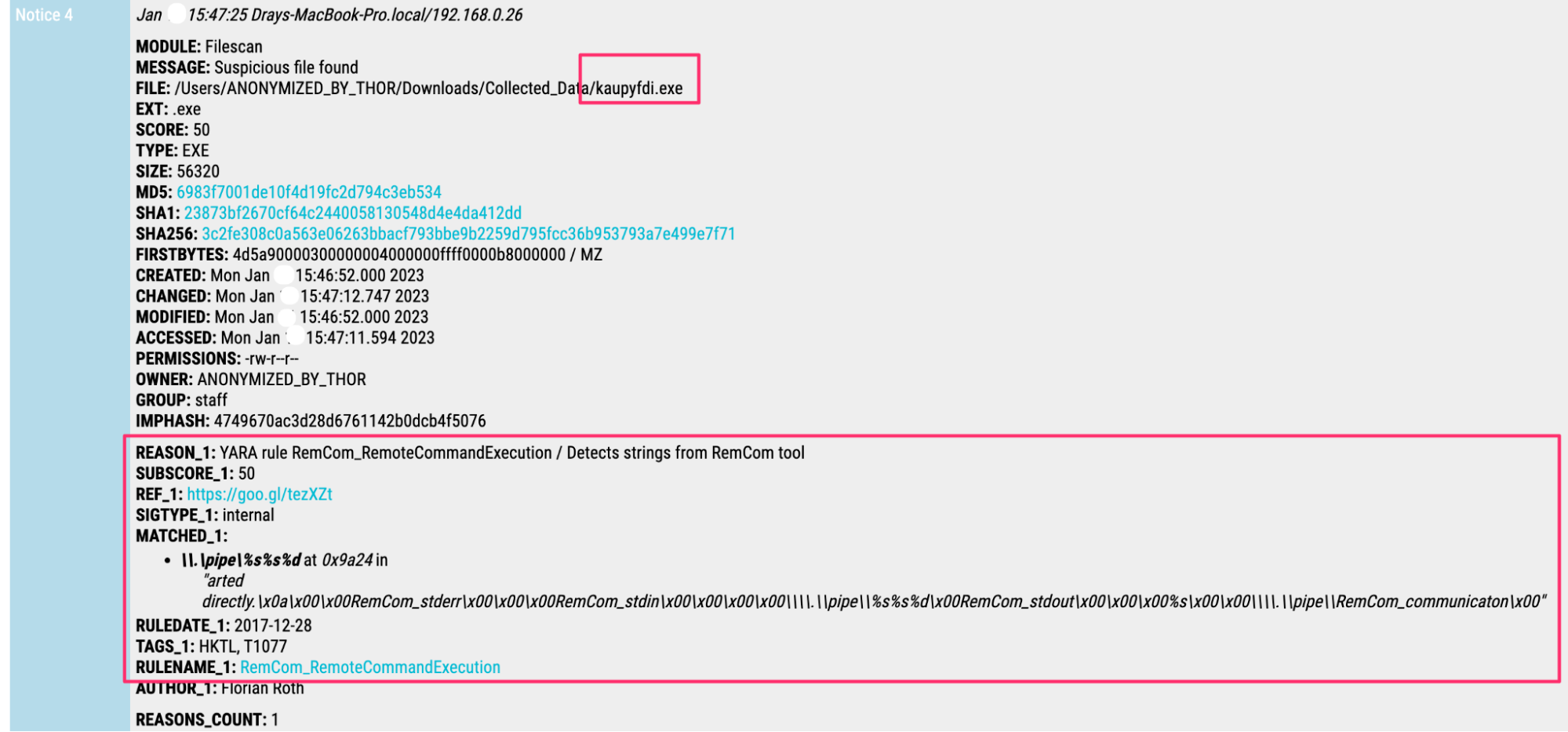

We can examine the offending file(s) that Defender neutralized. We can retrieve the files that Defender quarantined and subject them to malware analysis. Rapid malware analysis with Thor details that this binary was associated with remcom.

- You can think of remcom like psexec (kinda sorta)

This is not the smoking gun that it otherwise could have been.

It could just as well be completely legitimate, as we have discussed in the past for other binaries / services associated with lateral movement.

The Importance of Reporting

So where do we go from here?

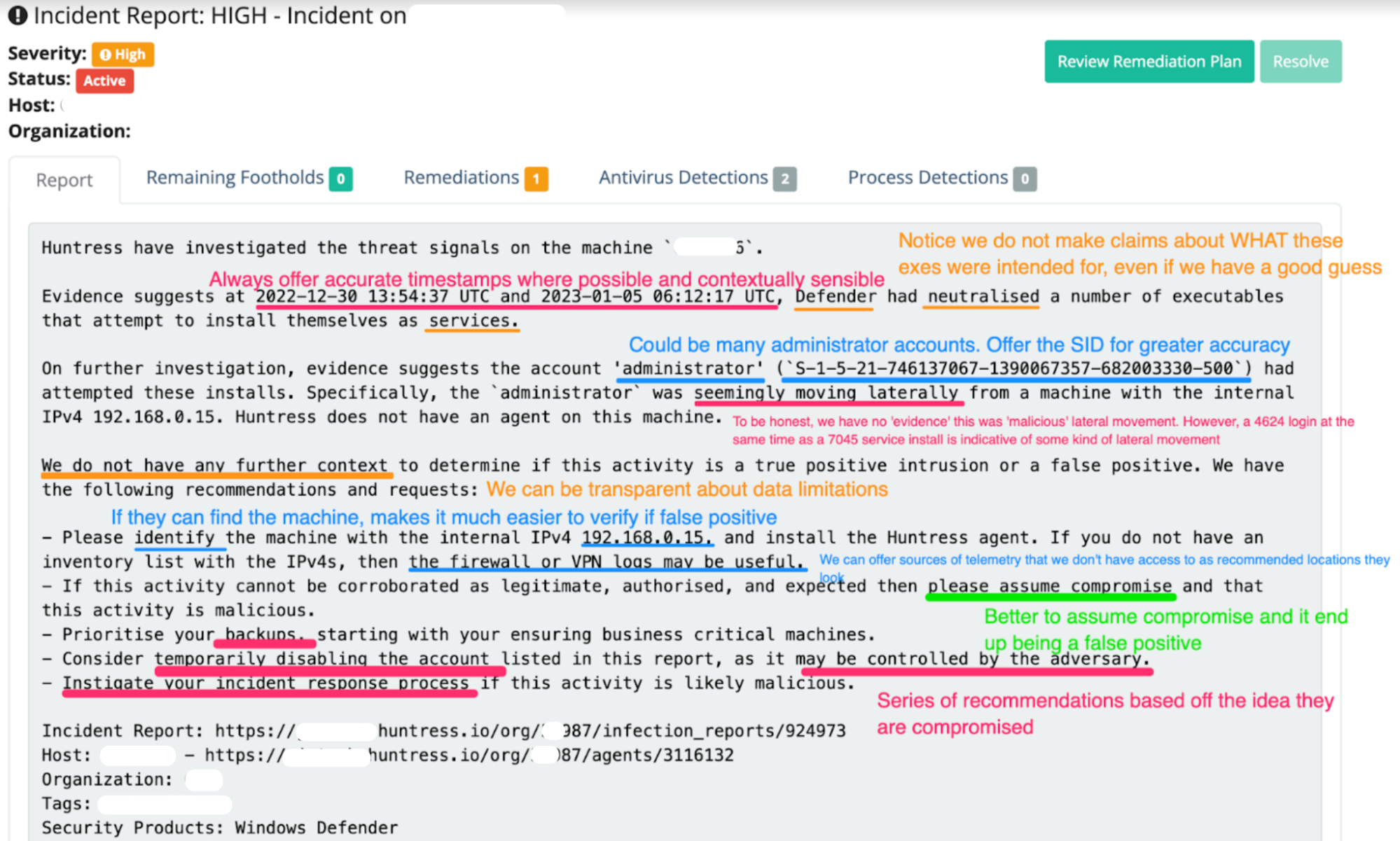

The evidence can be counted up and interpreted as malicious lateral movement, neutralized by Defender and unraveled by the Huntress Security Operations Center (SOC)… but equally, the evidence could be contextualized by the partner as legitimate and therefore not a security threat.

Earlier, we briefly alluded to investigation theory. Regardless of the exact theory, we subscribe to the belief as cybersecurity investigators that we all require guiding cognitive concepts for exactly these situations. These theories are all based around letting the evidence speak rather than allowing the analyst to impose their hypothesis as the truth.

Two key figures leading the conversation here are Katie Nickels and Chris Sanders. The aim of such guiding principles is to set oneself parameters and processes of investigation methods and analysis with an aim to provide better rigor and reliability and overcome limitations of bias or assumption.

Now, we don’t need to start drawing tables and graphs and do anything too wild to adhere to such a guiding principle. For our case, we need to remember our ultimate objective: summarizing and advising our partners. Our incident report doesn't need competing hypotheses or addendums about our investigators’ bias. We just need solid technical summaries of what we have observed and further actionable recommendations based on those observations.

In this case, we wrote a report for our partner based on our findings and then annotated it for this blog.

The annotations are for sure a bit ‘Pepe Silvia’, so don’t engage with the madness too much. You don’t have to read it all anyway; for your reading pleasure, here are some of the guidelines and takeaways we had in mind when writing it:

- Be accurate where it makes sense for the partner to take action on their side (like timestamps, user accounts, hostnames, and IP addresses).

- Do not be needlessly technically accurate where the partner cannot take action or it doesn’t make sense (for example, it is probably not necessary for me to go into detail in the report of what remcom is, how it works and what it’s similar to).

- Offer actionable recommendations for the partner where it contextually makes sense. Notice that I don’t ask the partner for a whole shopping list of things, but still ask for responses appropriate for the context.

  - Find the 192.168.0.15 machine and install a Huntress Agent on it or identify it as unknown; if they feel sus about the activity, prioritize their backups; temporarily disable the specific account in this report so the adversary cannot control it.

- Speak with and through the evidence. Notice that this report opens most sentences with phrases like “evidence suggests.” This is no accident. Also notice that when we don’t know something, we don’t claim it (for example, we do not call the lateral movement malicious).

  - These may seem like arbitrary semantic gymnastics, but we assure you that an authoritatively concise, well-evidenced report that is free of assumption hits different.

  - A reader is likely to take the threat seriously because they are taking YOU seriously. You are conveying yourself, your analysis, your findings and the consequential recommendations as reputable and knowledgeable.

And That’s All We’ve Got for You

This investigation took longer to write up than it took to deploy and analyze. 😂 But it felt appropriate when a number of techniques, tools and tips were leveraged in this investigation that we perhaps take for granted as reflexive.

Now nothing we do here at Huntress requires a PhD in hackology. We just have clever, dedicated, hard-working people across all the teams. And in the infosec community, we have an incredible collection of people, tools, and docs that can teach you to conduct security investigations with the same lethality, tenacity and accuracy that Huntress strives for.

Lacking in most people—often—is confidence. We see it again and again, in and out of Huntress. Personnel have the capability but lack confidence in their execution and method.

To be transparent, a crisis of confidence could have crept up so many times during this investigation:

- What if we are wrong about this being malicious lateral movement?

- What if we are wrong and the service successfully installed, Defender didn’t stop it, and the partner is currently compromised?!

- What if we are totally wrong and this is all an obvious false positive and we look stoooopid?!

Those thoughts cross our minds for sure.

We would argue that the normal analyst doubts themselves 40% of the time and trusts their sauce 60% of the time. At this stage in the game, we at Huntress trust our sauce 95% and doubt ourselves 5%. Not because we’re infallible, but because we trust that we will keep leveraging the constellation of telemetry and keep trying investigative approaches until we can find some evidence to latch onto and a thread to pull on.

As Senior ThreatOps Analyst Matt Anderson said not too long ago:

“If you don’t have a good process, your results won’t likely be good; if you have a good process, your results will likely be good.” 🤯

We hope this blog encourages your team to try new techniques or corroborates that your current techniques and processes are good.

You have to trust your own sauce, my friends. If you’re curious about the ingredients that make up Huntress’ sauce, check out our Tradecraft Tuesday webinars.