Hunting is a tale as old as time. Humans have hunted prey and foe alike for thousands of years. While the hunt looks a bit different in the realm of cybersecurity, one thing still applies: nothing beats human instinct.

Now you may be thinking, “what about human error? Isn’t that what attackers exploit?” Yes, but that’s not what this blog post is about.

We’re talking about cyber threat hunting. Many security tools rely on automation for some form of threat detection, but many of them are missing that human element. In this blog, we’ll dive into what threat hunting is, the differences between human analysis and automation, and an example of human-powered threat hunting in action.

Threat hunting is the practice of searching for cyber threats that are lurking in the shadows. It has grown into an essential component of any cybersecurity strategy. The work is iterative: find indicators to hunt with, validate that those indicators actually work, then rinse and repeat.

But much of the challenge is that you need a certain level of confidence to confirm you’ve identified something that is actually malicious (or at least something you want to investigate). Otherwise, you get false positives and potential service disruption.

So what’s the answer? Adding humans into the mix.

Cyber defenders will always look for opportunities to automate—it’s critical to keep up with the pace and scale at which attacks are growing, both in sophistication and in sheer volume. At the same time, there must be a human element to assist in recognizing and hunting down threats.

When it comes to identifying malicious activity, context is key. If automation could handle this on its own, then we wouldn’t even need this blog post. But for argument’s sake, let’s take a look at the pros and cons of automation vs. human analysis:

In my opinion, the real value of human threat analysis stems from two things: context and experience.

If a human notices, "hey, cmd.exe is running as a child process of Microsoft Word," they know from experience that this is very suspicious and shouldn't be happening. Obviously, that is a simple example: automated solutions could detect that strange behavior too, but only because a human programmed the software to treat it as bad. What about the things that automation hasn't "learned" about?
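To make that simple example concrete, here is a minimal sketch of the kind of rule a human might hand to an automated tool. The process lists and the `is_suspicious_spawn` helper are hypothetical names chosen for illustration, not part of any real product, and a real rule would need far more context than two file names.

```python
from pathlib import PureWindowsPath

# Hypothetical, deliberately short lists. A human chose these; the rule knows nothing else.
OFFICE_PARENTS = {"winword.exe", "excel.exe", "powerpnt.exe", "outlook.exe"}
SHELL_CHILDREN = {"cmd.exe", "powershell.exe", "wscript.exe", "cscript.exe", "mshta.exe"}


def is_suspicious_spawn(parent_image: str, child_image: str) -> bool:
    """Return True when an Office process launches a shell or script host."""
    # Compare only the lowercased file name so full paths and mixed case still match.
    parent = PureWindowsPath(parent_image).name.lower()
    child = PureWindowsPath(child_image).name.lower()
    return parent in OFFICE_PARENTS and child in SHELL_CHILDREN


# Made-up process creation events as (parent image, child image) pairs.
events = [
    (r"C:\Program Files\Microsoft Office\root\Office16\WINWORD.EXE", r"C:\Windows\System32\cmd.exe"),
    (r"C:\Windows\explorer.exe", r"C:\Windows\System32\cmd.exe"),
]
flagged = [pair for pair in events if is_suspicious_spawn(*pair)]
```

Only the Word-to-cmd.exe pair ends up in `flagged`. Anything the lists do not name passes straight through, which is exactly the gap a human analyst fills.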

Some forms of obfuscation or evasion can still slip past an automated solution. Working through and understanding them takes a real human being, because the automated program will just ignore what it doesn't recognize and move on to the next thing.

Now, it doesn’t have to be one column or the other. Automation is certainly key, but it has its limitations. As does human analysis.

The key could be combining automation with human intelligence, and I think my fellow ThreatOps teammate said it best on the matter:

“When it comes to threat hunting, humans and automation should complement each other. We simply cannot rely on one over the other because they each have a part to play. Automation is great for certain aspects, such as catching and flagging known malware patterns. But a human analyst can decipher what is truly malicious or spot a command that was designed to evade antivirus. In my opinion, threat hunting is strongest when you have automation and human analysis working together.” - Cat Contillo, Huntress ThreatOps Analyst

ThreatOps is the synthesis of automated security detection algorithms with manual analysis by security practitioners who reverse engineer and understand malware. The real strength comes from the human analysis, but the two techniques supplement each other and make for better detections than either strategy alone.

At Huntress, our Security Operations Center (SOC) team gives us the ability to look at the context and environmental factors to decide if something is malicious or not, or even catch something that slipped past other preventive tools. This tight feedback loop allows us to operate at the speed of attackers. As soon as a new malware variant emerges, an analyst can classify it and retroactively apply that classification to all systems.

Our blog is full of examples that showcase the power of human threat hunting—but there are hundreds more that we just haven’t been able to write about yet.

To give you a taste, we’ve come across things like fake antivirus programs where malware masqueraded as a "kaspersky.exe," and even layers and layers of nested obfuscation that some automated scanners couldn’t work their way through. We’ve dealt with fileless malware, and that one time a VBScript called JScript, which then called PowerShell to download and reflectively load C# assemblies. The whole gamut.

If you really want to see our SOC team in action, let’s dive into this example of Emotet, a well-known trojan that continues to evade security products—which CISA warned us of last year.

For background, Emotet is a common malware delivery mechanism nowadays, often dropping multiple malware strains onto a compromised host. Sometimes it’s other well-known strains such as Trickbot or Dridex; other times it’s highly destructive ransomware like Ryuk. The good news is that Emotet isn’t invisible; it does leave traces that can be detected if you know what to look for.

As described in this alert, “Emotet artifacts are typically found in arbitrary paths located off of the AppData\Local and AppData\Roaming directories. The artifacts usually mimic the names of known executables. Persistence is typically maintained through Scheduled Tasks or via registry keys. Additionally, Emotet creates randomly named files in the system root directories that are run as Windows services. When executed, these services attempt to propagate the malware to adjacent systems via accessible administrative shares.”

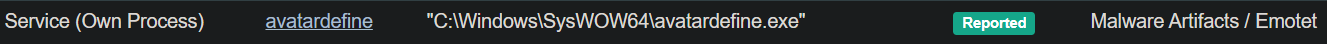

Emotet service entry

Emotet can often appear as a service, usually as two words chained together. The other giveaway is that the Emotet service copies a service description from another legitimate service on the host.

Examining the “Service Manager” snap-in for duplicate service descriptions can reveal these malicious services. When executed, these services attempt to propagate the malware and move laterally through the network via administrative shares.
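The duplicate-description check can be sketched in a few lines. This assumes you have already exported each service's name and description into a list of pairs; the `find_duplicate_descriptions` helper and the sample data below are made up for illustration.

```python
from collections import defaultdict


def find_duplicate_descriptions(services):
    """Return descriptions shared by more than one service, mapped to the service names."""
    by_description = defaultdict(list)
    for name, description in services:
        key = description.strip().lower()
        if key:  # services with no description cannot be compared this way
            by_description[key].append(name)
    return {desc: names for desc, names in by_description.items() if len(names) > 1}


# Made-up inventory: the second entry borrows the first entry's description.
services = [
    ("Spooler", "Loads files to memory for later printing."),
    ("alaskaneutral", "Loads files to memory for later printing."),
    ("Dhcp", "Registers and updates IP addresses."),
]
duplicates = find_duplicate_descriptions(services)
```

A shared description is a lead for an analyst to review, not a verdict on its own.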

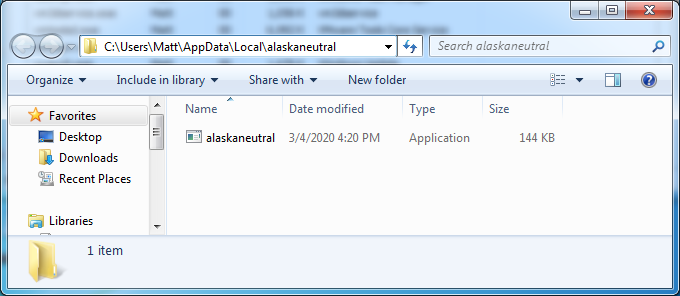

Emotet Persistence

In addition to services, Emotet has been known to use Scheduled Tasks and Registry Run keys. Perhaps a good task for automation—but how can an automation engine know when it’s looking at a malicious persistence mechanism?

It is not uncommon for new Scheduled Tasks and registry values to be created as part of normal system operation. Lists of “known bad” help tremendously to weed out a majority of Emotet infections, but known lists will only take you so far. Most importantly, automation engines require the right pattern match or signature in order to know when they have encountered a malicious file. If the malicious file changes ever so slightly, it may take several cycles before these engines recognize its relationship to previously seen malware and update the signature.
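Here is a small sketch of why "changes ever so slightly" matters, assuming the simplest kind of known-bad list: exact SHA-256 hashes. Real engines use richer signatures than this, and the sample bytes and `is_known_bad` helper are placeholders for illustration.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of the given bytes."""
    return hashlib.sha256(data).hexdigest()


# Placeholder bytes standing in for a sample. No real malware is involved.
original = b"placeholder bytes standing in for a malware sample"
variant = original + b"\x00"  # a one-byte change, such as padding added by a repacker

# Hypothetical known-bad list containing only the hash of the original sample.
KNOWN_BAD = {sha256_hex(original)}


def is_known_bad(data: bytes) -> bool:
    """Exact-match lookup: any change to the file produces a different hash."""
    return sha256_hex(data) in KNOWN_BAD


original_hit = is_known_bad(original)
variant_hit = is_known_bad(variant)
```

The original matches the list and the one-byte variant does not, even though an analyst looking at both files would treat them as the same thing.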

Huntress SOC analysts have seen this same naming scheme several times over despite having no antivirus alerts for this file. Human intuition and previous experience tell us something suspicious is occurring.

Path to executable: “C:\Users\Matt\AppData\Local\alaskaneutral\alaskaneutral.exe”

This artifact sits in AppData\Local, just as the US-CERT alert describes, reinforcing that this is likely Emotet.
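As a rough sketch, the pattern in that path can be written down as a check: an executable sitting directly in a folder under AppData\Local or AppData\Roaming, where the folder and the file share a name. The matching-name condition is our assumption drawn from the single example above, and `matches_appdata_naming` is a hypothetical helper. Plenty of legitimate software installs this way too, so a match is a prompt for a closer look and nothing more.

```python
from pathlib import PureWindowsPath


def matches_appdata_naming(path: str) -> bool:
    """True for ...\\AppData\\(Local|Roaming)\\<name>\\<name>.exe, compared case-insensitively."""
    p = PureWindowsPath(path)
    parts = [part.lower() for part in p.parts]
    if len(parts) < 4 or p.suffix.lower() != ".exe":
        return False
    in_appdata = parts[-4] == "appdata" and parts[-3] in {"local", "roaming"}
    # The folder name must equal the executable name without its extension.
    return in_appdata and parts[-2] == p.stem.lower()


suspect = r"C:\Users\Matt\AppData\Local\alaskaneutral\alaskaneutral.exe"
is_match = matches_appdata_naming(suspect)
```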

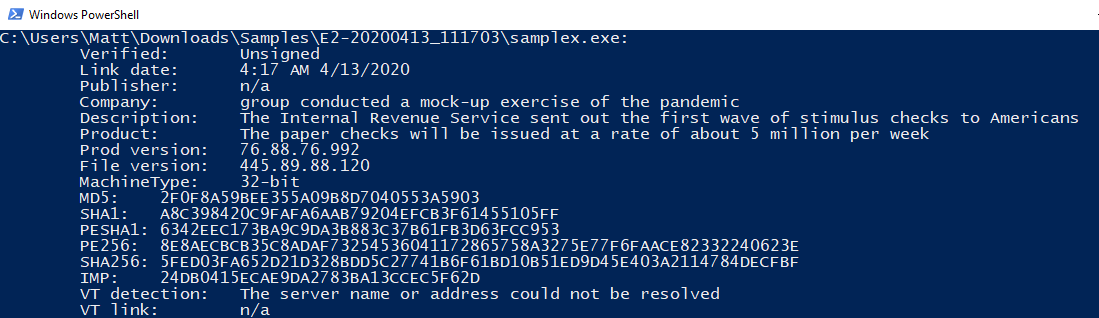

Inspecting the security tab and certificate section of the file will reveal interesting attributes. Even with glaring file abnormalities like these, Emotet slips past automated systems.

Signature information from an Emotet binary

Some security products might overlook a file like this because of how they decide whether a file is malicious. For some engines, unknown files are assigned a score based on various indicators and attributes. If the score doesn’t meet a predefined threshold, the file will not be marked as malicious enough to take action, something many sophisticated attackers are acutely aware of.
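A toy version of that scoring logic shows how a file can look odd in several ways and still fall short. The indicator names, weights, and threshold below are invented for illustration and do not reflect any particular engine.

```python
# Hypothetical indicator weights and action threshold.
WEIGHTS = {
    "runs_from_appdata": 30,
    "invalid_signature": 25,
    "name_mimics_known_binary": 20,
    "known_bad_hash": 100,
}
THRESHOLD = 80


def score(indicators) -> int:
    """Sum the weights of every indicator observed on the file."""
    return sum(WEIGHTS[name] for name in indicators)


def verdict(indicators) -> str:
    """Act only when the combined score reaches the threshold."""
    return "malicious" if score(indicators) >= THRESHOLD else "no action"


# Three abnormalities, but no known-bad hash match.
observed = {"runs_from_appdata", "invalid_signature", "name_mimics_known_binary"}
total = score(observed)
result = verdict(observed)
```

With these made-up numbers the file scores 75 against a threshold of 80, so the engine takes no action even though every one of the three indicators would catch an analyst's eye.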

On the other hand, human threat analysts have a trained eye for these types of traces and telltale signs. We’re able to make decisions and educated judgments based on what we’re looking at, without being constrained by a specific set of rules like automation is.

At the end of the day, human attackers are constantly improving their evasion techniques—and as human defenders, we must continually improve our detection capabilities to keep pace with the latest threats.

Special thanks to Cat Contillo and Annie Ballew for their help with this post.
