In the realm of cyber threat intelligence (CTI), technical indicators, commonly known as "indicators of compromise" (IOCs), play a pivotal role. Indicators feature prominently in CTI reporting, are the primary object shared in CTI feeds, and are often used to drive outcomes in security tools such as blocking “bad” IP addresses in a firewall. Despite their central role within the CTI space, indicators have faced criticism for being overly simplistic and retrospective—making their utility as a defensive item questionable at best.

In this blog, we will delve into the concept of indicators in CTI and information security, address criticisms of indicators in network defense, and explore how indicators (even though often dismissed) can form an important and economical defensive measure for small and medium-sized businesses (SMBs).

Defining Indicators

IOCs, specifically and accurately defined, are technical observations related to a known, analyzed compromise. As such, IOCs have unique value and relevance to forensic analysis, where they can help rapidly triage and narrow a compromise investigation. Unfortunately, IOCs are often conflated with raw indicators: technical data or observables that are not yet linked to a known compromise, or that are leveraged outside of forensic analysis. Differentiating between IOCs and indicators used for other purposes, such as threat hunting, is therefore necessary to accurately understand the types of data available to us and their use.

Indicators represent a means to communicate technical information on a given threat or activity to others. Absent enrichment or context, such as identifying the timespan of their use or how they relate to adversary operations through frameworks such as MITRE ATT&CK, indicators represent very simple, unitary objects: an IP address, a domain name, a file hash related to a malware sample. Therefore, on their own, indicators have limited utility unless enriched in some fashion. Enrichment goes beyond adding metadata and context: it extends into understanding indicators as natural composite objects through further analysis, “breaking apart” specific indicators to understand their creation, use, and application in intrusions.

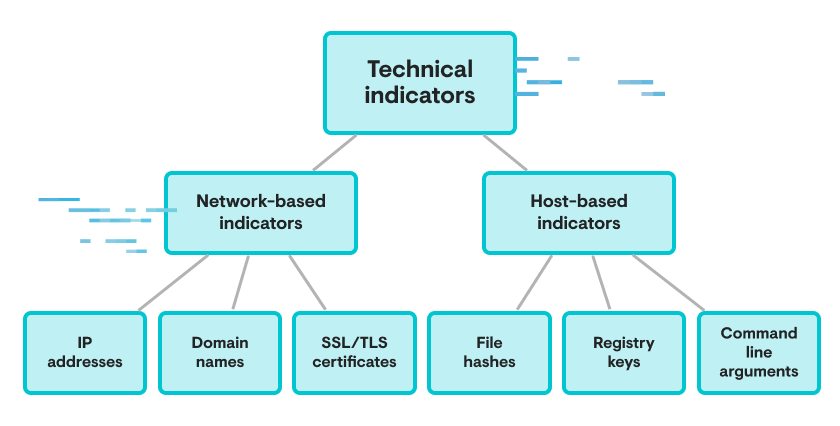

Examples of Technical Indicator Types

In this "composite object" model of technical indicators, subcomponents of technical indicators (such as the hosting provider for a given IP address or specific functions in a malware sample) become items enabling further analysis and behavioral understanding of what an adversary is trying to achieve and how they go about achieving it. Such understanding can then be used to drive detection engineering to identify technical signs of these behaviors for threat hunting and pivoting to unearth additional examples.

The process of identification, enrichment, and analysis is a powerful approach to technical indicators: it can unearth adversary tendencies and behavioral observations, and it improves responses to newly observed technical items based on how they relate to previously observed items.
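To make the "composite object" idea concrete, the following is a minimal Python sketch rather than any particular platform's data model. The `Indicator` class, its field names, the `shared_components` helper, and the provider name `ExampleHost` are all hypothetical; the IP addresses come from reserved documentation ranges. It shows a bare indicator gaining context (timespan of use, ATT&CK technique IDs, subcomponents) and how a shared subcomponent can become a pivot point between two otherwise unrelated items.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Dict, List, Optional


@dataclass
class Indicator:
    """A technical indicator plus optional enrichment (hypothetical model)."""
    value: str                      # e.g. an IP address, domain, or file hash
    kind: str                       # e.g. "ipv4", "domain", "sha256"
    first_seen: Optional[datetime] = None
    last_seen: Optional[datetime] = None
    attack_techniques: List[str] = field(default_factory=list)  # ATT&CK IDs
    components: Dict[str, str] = field(default_factory=dict)    # subcomponents

    def is_bare(self) -> bool:
        """True when the indicator carries no enrichment at all."""
        return not (self.first_seen or self.last_seen
                    or self.attack_techniques or self.components)


def shared_components(a: Indicator, b: Indicator) -> Dict[str, str]:
    """Return the subcomponents two indicators have in common (pivot points)."""
    return {k: v for k, v in a.components.items() if b.components.get(k) == v}


# Two different IPs that share a (hypothetical) hosting provider.
first = Indicator(
    "192.0.2.1", "ipv4",
    attack_techniques=["T1021.001"],  # Remote Services: Remote Desktop Protocol
    components={"hosting_provider": "ExampleHost", "asn": "AS64500"},
)
second = Indicator(
    "198.51.100.7", "ipv4",
    components={"hosting_provider": "ExampleHost", "asn": "AS64501"},
)
pivot = shared_components(first, second)  # {'hosting_provider': 'ExampleHost'}
```

The design choice here is deliberate: the raw value stays a simple string, while everything that makes it useful lives in the optional enrichment fields, so a newly observed item can be compared against previously observed ones by subcomponent rather than by exact value.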

Indicator Shortfalls

The previous model of indicators as composite objects to be enriched is powerful, but rarely represents how technical indicators are used in practice. Instead of leveraging indicators as a means to an end (behavioral understanding), indicators become the end goal itself. This approach, of driving intelligence and defense through “bare” indicators without further analysis and enrichment, is rife with problems.

As early as 2013, David Bianco identified how reliance on indicators can lead to suboptimal outcomes. In his influential “Pyramid of Pain” blog, Bianco emphasizes how “simple” indicators (hash values, IP addresses, domain names) are trivially changed and highly ephemeral in adversary operations. This point has been repeated and refined by many in the decade since. Yet despite seeming consensus on the limitations of indicators for defense and intelligence purposes, they remain the common currency of threat intelligence sharing and reporting.

To some extent, this is due to the conflation between indicator as technical observation and IOC as evidence of a known compromise. The latter retain value for forensic and triage purposes, as described previously, but the former are severely lacking in utility and function absent some additional action, enrichment, or understanding. Despite this now common observation, threat reports continue to contain lists of indicators and related observables, and defenders continue to ingest these items for reasons that are often difficult to articulate.

Defensive Actions Relative to Behaviors vs. Indicators

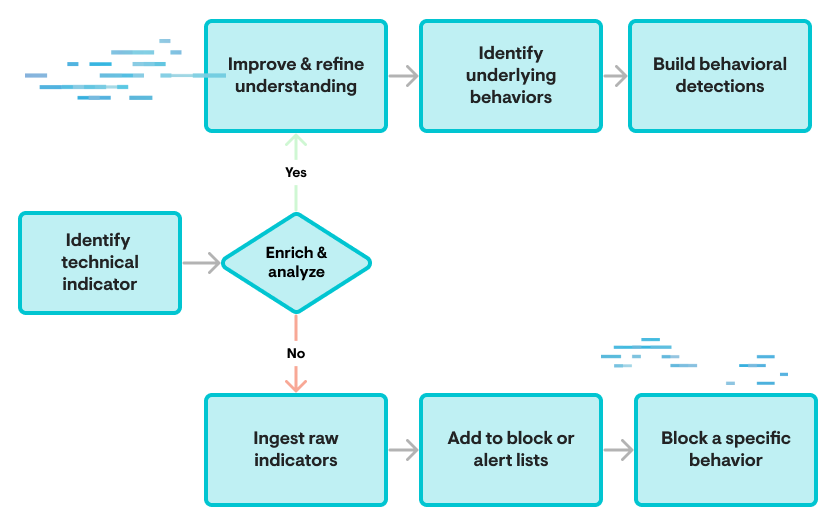

In the above graphic, the top pathway, which leads to refinement and behavioral understanding, produces more lasting results with wider coverage of multiple examples of a given type of action. Meanwhile, the lower path focuses on very specific instantiations of adversary activity that can be trivially modified or changed, so any defensive action that depends on them is easily bypassed and rendered irrelevant.

In a vacuum, it appears clear and obvious that viewing indicators as composite objects for further enrichment and analysis, yielding the behaviors underlying the creation of that indicator, is where we as defenders should reside. Yet, we do not exist in a vacuum.

The process described, while extremely beneficial, also comes with significant costs in terms of time, resources, and analysis cycles. Gathering additional data, enriching specific indicators, and presenting this in a timely fashion to analysts or tools for decision making is a nontrivial task, and is well beyond the scope and resources of most organizations.

Rethinking Indicators In Defense Based On Requirements & Capabilities

Behavior-based detection and analysis is ideal, but also prohibitively expensive. Stakeholders and customers should hold vendors and service providers to a standard where they embrace behavior-centric analysis and detection, as such entities presumably have the resources (and incentive) to approach this ideal. But for many organizations, particularly within the SMB space, having any security program at all is a significant milestone, let alone one that meets a best-in-class standard that many dedicated information security vendors fail to reach.

As with so many things, organizations (especially SMBs, along with partners such as managed service providers, or MSPs) face tradeoffs. Investing the time and resources necessary for enrichment and behavioral analysis requires forgoing significant investment in other areas that may be far more vital to the organization’s success, such as attack surface management or cyber hygiene (for example, vulnerability management). SMBs and MSPs should instead look for “easy wins” that are within scope after higher-value tasks, and look to vendors and security partners to implement these best practices on their behalf.

Within environments controlled by system owners and operators, indicators may be suboptimal but nonetheless retain some value. For example, in investigating multiple ransomware incidents in September and October 2023, Huntress identified malicious RDP sessions to victim networks all originating from the same system hostname: DESKTOP-3ITPFTA. While this host has migrated across multiple hosting IP addresses, identifying RDP (or any) activity from this system name represented a high-confidence observable during the time of this ransomware campaign that entities could use to rapidly identify malicious activity prior to ransomware deployment.

While it's trivial to change this hostname and even easier to shift its public IP, the item above represents an easily ingested, easily actioned touchpoint for malicious activity. Completely relying on this approach for security is likely to produce undesirable results—but layering indicator tracking in a sustainable fashion on top of other security controls can provide a useful backstop. By focusing on high-confidence items shared relatively quickly or as campaigns are active, defenders at otherwise resource-poor organizations can either search backward for recent activity reflecting malicious observables or hunt forward for future actions related to the same indicators.
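The two uses described above, searching backward and hunting forward, can be sketched in a few lines of Python. This is an illustrative sketch only: the `RdpSession` record, its field names, and the 60-day lookback are assumptions, not the format of any specific log source or Huntress tooling, and real RDP telemetry would need to be parsed into something like this first. The hostname is compared case-insensitively on the assumption that Windows system names are reported inconsistently in upper or lower case.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Iterable, List, Set


@dataclass(frozen=True)
class RdpSession:
    """One inbound RDP session, already parsed from logs (hypothetical shape)."""
    timestamp: datetime
    client_hostname: str
    source_ip: str
    target_host: str


# High-confidence observable from the campaign described above.
WATCHLIST: Set[str] = {"DESKTOP-3ITPFTA"}


def normalize(hostname: str) -> str:
    """Trim whitespace and upper-case so comparisons ignore case."""
    return hostname.strip().upper()


def is_match(session: RdpSession, watchlist: Set[str] = WATCHLIST) -> bool:
    """Hunt forward: call this on each new session as it is observed."""
    return normalize(session.client_hostname) in {normalize(h) for h in watchlist}


def search_backward(
    sessions: Iterable[RdpSession],
    now: datetime,
    lookback: timedelta = timedelta(days=60),  # assumed retention window
    watchlist: Set[str] = WATCHLIST,
) -> List[RdpSession]:
    """Search backward: return recent historical sessions from watched hosts."""
    cutoff = now - lookback
    return [s for s in sessions
            if s.timestamp >= cutoff and is_match(s, watchlist)]
```

Because the match keys on the client hostname rather than the source IP, it would keep working as the system moves between hosting addresses, which is the property that made this observable useful during the campaign. It stops working the moment the adversary renames the host, which is why it belongs on top of other controls rather than in place of them.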

Notably, friction remains as indicators must come from somewhere. Here, community-based sharing and communication, leveraging industry forums or membership organizations such as information sharing and analysis centers (ISACs), and taking full advantage of disclosures from commercial and government entities when they occur are vital. Balancing “indicator tracking” and identification with day-to-day tasks and IT management will nonetheless remain a challenge at the SMB and MSP level. But through identification of high-quality, high-confidence feeds of information related to the organization’s interest, it's possible to add an effective additional layer of security through indicator tracking, alerting, and blocking to improve overall defensive outcomes.
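One way to keep indicator tracking sustainable is to act only on entries that are both high confidence and recent, since simple indicators are ephemeral. The sketch below assumes a hypothetical feed whose entries carry a 0 to 100 confidence score and a last-seen timestamp; the `FeedEntry` shape, the threshold of 80, and the 30-day age limit are illustrative assumptions to tune, not recommendations from any specific feed or standard.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Iterable, List


@dataclass(frozen=True)
class FeedEntry:
    """One shared indicator from a feed (hypothetical shape)."""
    value: str
    kind: str
    confidence: int       # 0-100, as scored by the hypothetical feed
    last_seen: datetime


def select_actionable(
    entries: Iterable[FeedEntry],
    now: datetime,
    min_confidence: int = 80,                  # assumed threshold
    max_age: timedelta = timedelta(days=30),   # assumed freshness window
) -> List[str]:
    """Return de-duplicated, sorted values worth alerting on or blocking."""
    fresh_after = now - max_age
    return sorted({
        e.value for e in entries
        if e.confidence >= min_confidence and e.last_seen >= fresh_after
    })
```

Dropping stale and low-confidence entries before they reach a firewall or alerting rule keeps the list short enough for a small team to maintain, at the cost of missing activity that reuses older infrastructure.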

Conclusion

Technical indicators of malicious cyber activity are surprisingly complex objects once analyzed sufficiently. Yet indicator-focused approaches to security have many shortfalls that well-resourced teams should remain somewhat cautious of. In an ideal world, security practitioners and the organizations they defend would leverage indicators as an intermediary toward behavioral understanding and the follow-on implementation of more complex detection logic. However, the world we live in is far from ideal, and especially for non-enterprise organizations, the pinnacle of CTI and detection engineering achievement at the behavioral level likely remains (directly) out of reach.

Instead, SMBs, MSPs, and related organizations can drive significant value by embracing indicators as a stopgap approach to identifying known malicious activity, layered with additional controls such as attack surface and vulnerability management. When they also work with a vendor that implements behavioral approaches to detection and monitoring, otherwise disadvantaged organizations can bolster their overall defensive posture while taking advantage of the investment and expertise of others.