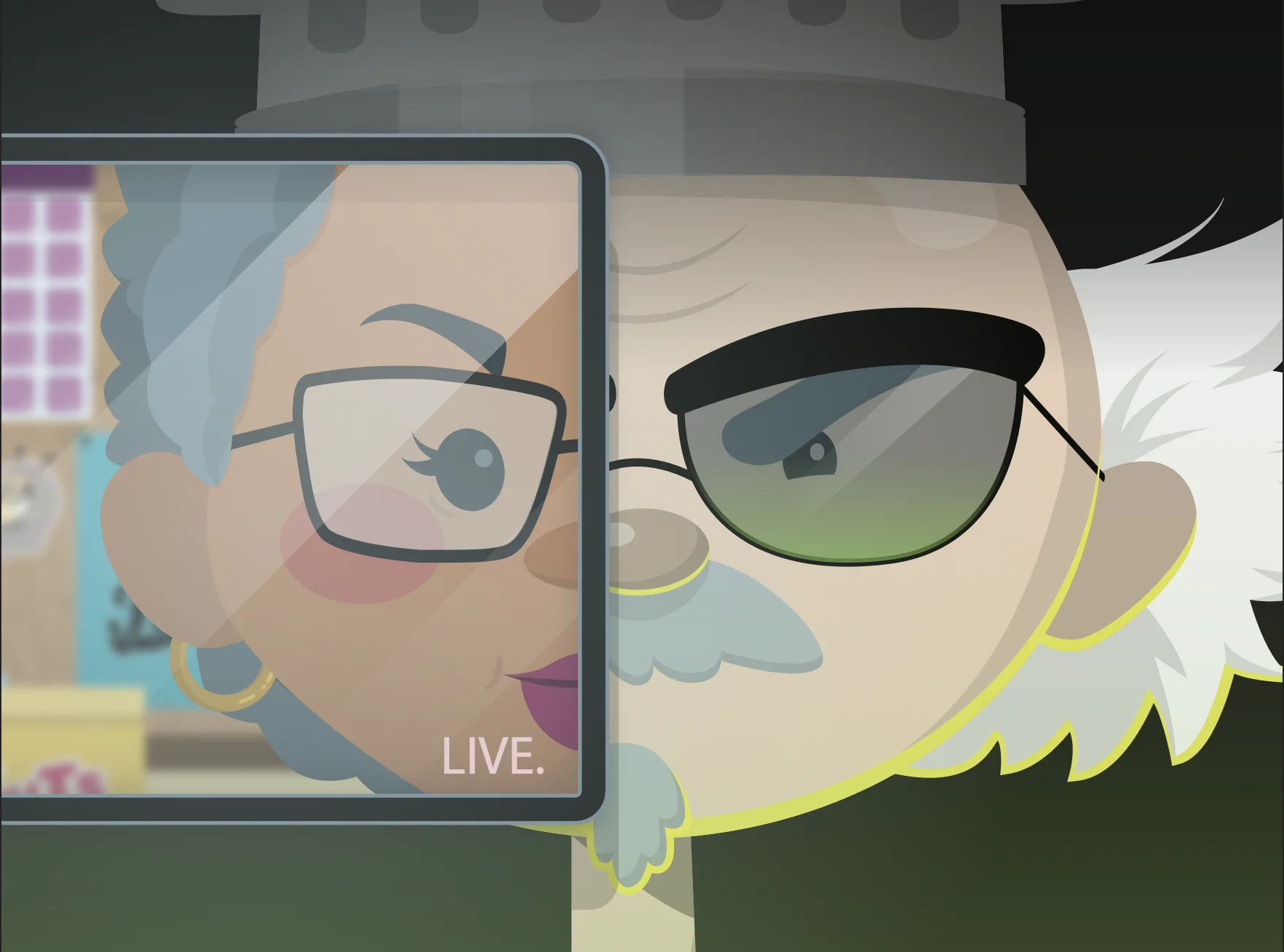

What Is a Deepfake?

In this episode, the mayor of Sludge Springs cooks up a deepfake to trick Curriculaville’s sanitation worker, Jacob, in hopes of sabotaging Curriculaville’s clean-town record.

This episode dives into how deepfakes are created, the risks they pose in daily life, and steps you can take to spot and protect against them. Will Jacob see through the scheme, or will AI win the day?

FAQs about deepfakes

Deepfakes are AI-generated videos or audio clips that mimic a person’s likeness or voice to create fake yet convincing content. They’re a concern because they can be used for fraud, misinformation, and privacy violations, among other cybersecurity threats.

Cybercriminals use deepfakes for a variety of attacks, such as impersonating executives (CEO fraud) to approve fake wire transfers, spreading false information during critical events, or creating fake content for blackmail.

To spot a deepfake, look for signs like unnatural facial expressions or movements, audio and visual inconsistencies, mismatched lighting, or lip movement that is out of sync with the sound. When content seems questionable, cross-check it against reliable sources.

Tools can help detect deepfakes: emerging AI detection tools analyze video and audio metadata to identify manipulation. Staying up to date with these technologies can help you spot deepfake content more reliably.

Organizations should train employees on deepfake awareness, verify unusual requests through secondary channels, use authentication measures like MFA, and adopt endpoint protection tools to defend against threats associated with deepfakes.
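For teams that want to turn the "verify through a secondary channel" advice into a written rule, the idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Request` fields, the `HIGH_RISK_KINDS` set, and the `AMOUNT_THRESHOLD` value are hypothetical and do not come from any Huntress product or standard.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical policy values, chosen only for illustration.
HIGH_RISK_KINDS = {"wire_transfer", "credential_reset", "payroll_change"}
AMOUNT_THRESHOLD = 10_000


@dataclass
class Request:
    kind: str                           # e.g. "wire_transfer"
    amount: float                       # 0 for non-monetary requests
    channel: str                        # where the request arrived, e.g. "video_call"
    verified_via: Optional[str] = None  # second channel used to confirm, if any
    mfa_passed: bool = False            # did the requester pass MFA?


def needs_out_of_band_check(req: Request) -> bool:
    """Flag requests that are risky enough to require extra verification."""
    return req.kind in HIGH_RISK_KINDS or req.amount >= AMOUNT_THRESHOLD


def may_proceed(req: Request) -> bool:
    """Allow a risky request only if it was confirmed on a different
    channel than the one it arrived on and the requester passed MFA."""
    if not needs_out_of_band_check(req):
        return True
    confirmed_elsewhere = (
        req.verified_via is not None and req.verified_via != req.channel
    )
    return confirmed_elsewhere and req.mfa_passed
```

Under this sketch, a wire transfer requested on a video call would be held until someone confirms it through a separate channel, such as a callback to a known phone number, no matter how convincing the caller looked or sounded.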

Deepfakes also have legitimate uses: they can enhance entertainment, support cultural preservation, and streamline artistic or educational projects. The key is to use this technology ethically and responsibly to avoid harm.

High-value targets like corporations, political figures, and individuals with significant public profiles are at greater risk. However, deepfake scams can target anyone, so staying vigilant is critical for everyone.