Disinformation Campaigns explained

A disinformation campaign is an organized effort to spread false or misleading information with the specific intention of influencing public opinion, undermining trust, or shaping behavior.

Unlike misinformation, which is wrong information shared by accident, disinformation campaigns are always deliberate and coordinated.

Looking for the no-nonsense lowdown on disinformation campaigns—including how they work, who runs them, and why they matter in cybersecurity? You’re in the right place. This glossary guide covers the basics, gives you practical cybersecurity context, and arms you with example-driven insights aligned with certification prep and real-world scenarios.

What exactly is a disinformation campaign?

A disinformation campaign is a planned and systematic effort to manipulate individuals, organizations, or the public by spreading intentional lies or deceptive narratives. These campaigns often mix facts with falsehoods for credibility and are strategically deployed across social media, news sites, meme networks, and private messages to reach as many people as possible.

Key elements of a disinformation campaign:

Organized and intentional (never random)

Leverages digital platforms for scale and reach

Blends fact with fiction to build believability

Designed to provoke emotions or manipulate behavior

Often targets political events, social issues, corporations, or public safety

The US Cybersecurity and Infrastructure Security Agency (CISA) calls disinformation a major threat to public trust, democracy, and business resilience.

The lowdown on disinformation campaigns

Disinformation campaigns follow a repeatable playbook that blends social engineering, psychological operations, and technical tricks. Here’s a simplified breakdown:

Anatomy of a Disinformation Campaign

1. Planning and message crafting

Attackers select a target (person, company, event).

Content is designed to evoke strong emotions (anger, fear, confusion).

2. Seeding

False narratives are seeded on multiple platforms (social media, blogs, forums, encrypted messaging apps).

3. Amplification

Bots, fake accounts, and sometimes unwitting humans share/reshare the content to widen its spread.

4. Engagement and manipulation

Target audiences engage, discuss, and further distribute the narrative, giving it the appearance of ‘organic’ popularity.

5. Achieving the goal

Objectives might include manipulating elections, damaging a brand, triggering financial panic, or disrupting societal trust.

One example of a disinformation campaign is the Metro Bank incident in the UK (2019), where a rumor spread via WhatsApp claimed the bank was insolvent. Despite denials, panic ensued, resulting in customer withdrawals and a sharp decline in share price. The campaign's power came from its emotional trigger (fear for personal finances), repetition, and a seed of partial truth.

What's the point of these campaigns?

Disinformation isn’t just a PR problem or a political headache. It’s a serious cybersecurity threat because:

It targets human vulnerabilities. No exploit kit needed when fear, anger, or tribal bias will do the job. Humans, the valued people who drive the success of your business, are often the weakest link in cybersecurity. Security awareness training is needed to help reduce the human attack surface.

It can prime social engineering attacks. Disinformation can soften up targets for spear-phishing or BEC attacks by undermining trust in validated sources.

It erodes trust in essential systems. For example, a campaign might tarnish a company’s reputation before a product launch or undermine confidence ahead of an election.

It moves fast. False info travels faster and further than the truth, and digital platforms automate the spread.

“Disinformation campaigns blur the lines between reality and fabrication, weakening defenses and muddying attribution. The result? Decision-making suffers, and adversaries capitalize on chaos.” (Source: American Security Project)

Sneaky ways false info gets around

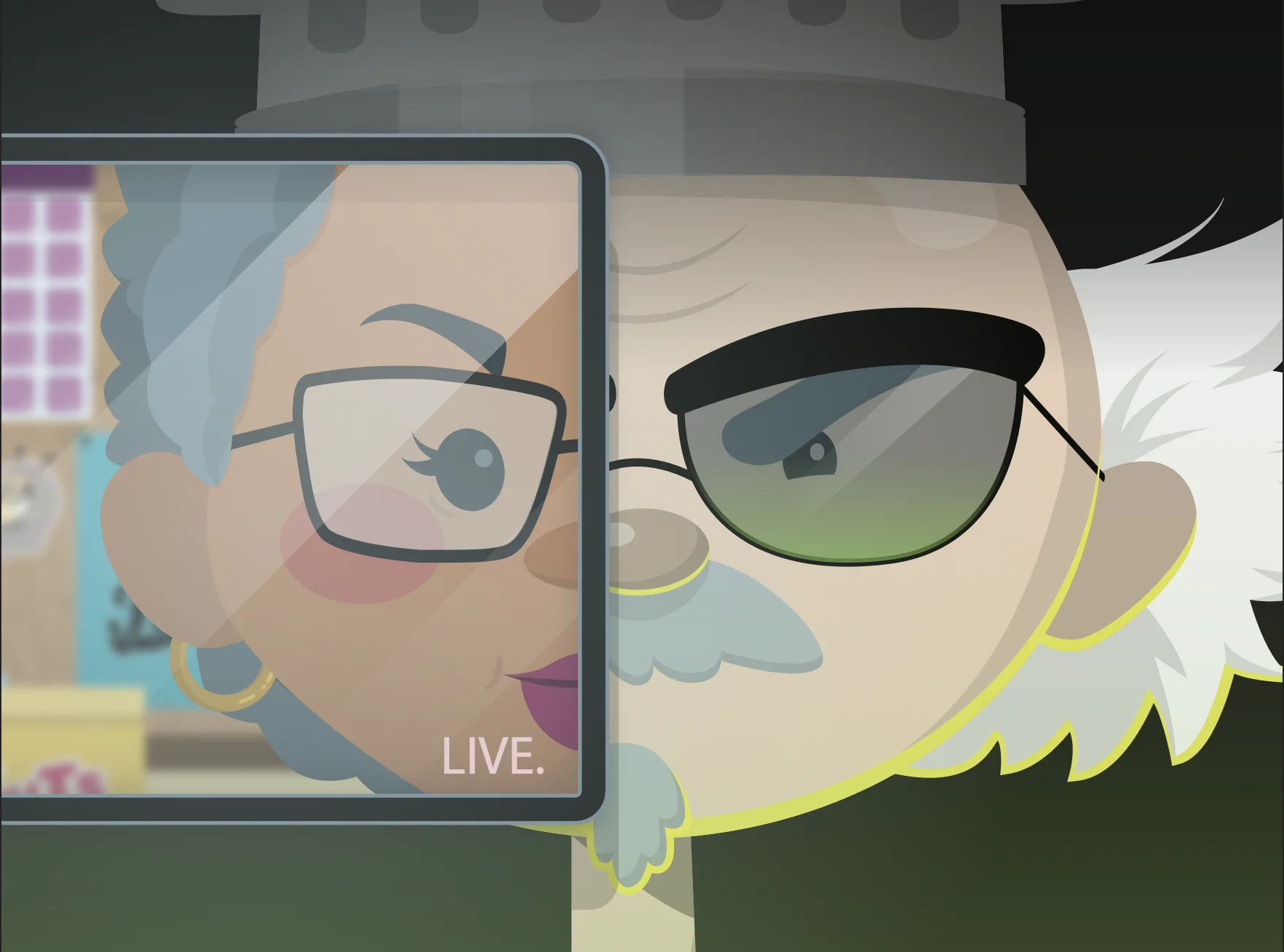

Disinformation doesn’t just show up in obvious ways—it’s often subtle, sneaky, and designed to spread like wildfire. Whether it’s a manipulated image, a misleading headline, or even a satirical cartoon taken out of context (think of Gerald Broflovski’s antics in South Park when he spreads chaos online), false information finds creative pathways to infiltrate discussions and influence opinions.

Here’s a look at some of the clever, covert tactics disinformation campaigns use to thrive in today’s digital landscape:

Fake news sites: Setting up phony news outlets that mimic trusted sources.

Memes and visual manipulation: Shareable graphics or deepfakes for viral spread.

Social media bots & trolls: Automated or real accounts spamming messages at scale.

Algorithmic amplification: Leveraging platform recommendation engines.

Echo chambers: Targeting closed groups where dissenting views are filtered out.

Astroturfing: Orchestrated campaigns made to look like grassroots movements.
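Bot networks and astroturfing often leave a recognizable trace: many distinct accounts posting near-identical text within a short time. The sketch below shows one way a defender might flag that pattern. It is a minimal illustration, not a production detector: the `Post` structure, the `find_coordinated_clusters` function, and the thresholds (three accounts, ten minutes) are all assumptions made for this example, and real campaigns often vary wording enough to evade exact-match grouping.

```python
import re
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Post:
    # Hypothetical record shape; real platform exports will differ.
    account: str
    text: str
    timestamp: float  # seconds since epoch


def normalize(text: str) -> str:
    """Lowercase, drop URLs and punctuation, collapse whitespace."""
    text = re.sub(r"https?://\S+", "", text.lower())
    text = re.sub(r"[^a-z0-9\s]", "", text)
    return " ".join(text.split())


def find_coordinated_clusters(posts, min_accounts=3, window_seconds=600):
    """Map each normalized text to the accounts in the first time window
    where at least `min_accounts` distinct accounts posted it."""
    by_text = defaultdict(list)
    for post in posts:
        key = normalize(post.text)
        if key:  # skip posts that were only a link or punctuation
            by_text[key].append(post)

    flagged = {}
    for text, group in by_text.items():
        group.sort(key=lambda p: p.timestamp)
        start = 0
        for end in range(len(group)):
            # Shrink the window until it spans at most `window_seconds`.
            while group[end].timestamp - group[start].timestamp > window_seconds:
                start += 1
            accounts = {p.account for p in group[start:end + 1]}
            if len(accounts) >= min_accounts:
                flagged[text] = sorted(accounts)
                break
    return flagged
```

A cluster flagged this way is only a lead for a human analyst. Genuine news can also be shared verbatim by many people at once, so the output should feed a review, not an automatic takedown.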

Who's behind the lies online?

Nation-state actors aiming to disrupt elections or policy.

Activist groups or lobbyists.

Corporate saboteurs targeting competitors.

Paid “disinformation-as-a-service” outfits found on dark web marketplaces.

Criminal organizations using chaos to profit (e.g., through stock manipulation).

Disinformation vs. misinformation

While disinformation and misinformation might seem similar, they have distinct meanings and implications, especially in the cybersecurity and information space. Disinformation refers to the deliberate creation and spread of false or manipulated information with the intent to deceive, mislead, or manipulate an audience. This is often a calculated effort, used as a weapon to influence public opinion, disrupt operations, or damage reputations. Misinformation, on the other hand, is false information shared without malicious intent. It typically stems from ignorance, misunderstanding, or lack of verification.

Understanding the difference is critical in combating harmful information campaigns. For instance, disinformation campaigns might involve complex coordination by hostile actors, leveraging fake accounts, deepfake technologies, and bot networks to amplify their reach. Meanwhile, misinformation could be as simple as an individual unknowingly sharing an outdated or incorrect article. Addressing both requires vigilance, with strategies like verifying sources, promoting digital literacy, and deploying tools to detect manipulation or falsehoods.

Role of social media in disinformation

Social platforms turbocharge disinformation campaigns by:

Offering massive reach with little oversight.

Amplifying outrage (and thus viral content) via engagement-based algorithms.

Lowering technical and financial barriers to running large-scale campaigns.

Weak moderation and the formation of “echo chambers” increase susceptibility to false narratives and polarize audiences.

How disinformation campaigns harm organizations

Reputation damage: Trust is hard to win, easy to lose, and very expensive to rebuild.

Financial loss: Impacts may include falling stock prices or lost customers.

Operational downtime: Disinformation can trigger regulatory scrutiny, employee confusion, or service shutdowns.

Security risks: Distracts defense teams and diverts resources from real cyber threats.

Combating disinformation in cybersecurity

There’s no silver bullet, but you can:

Educate users: Boost media literacy and critical thinking (start with your security awareness training).

Monitor digital chatter: Use threat intelligence to spot narrative trends early.

Build resilience: Develop crisis communication plans and keep internal channels ready to counter false stories.

Unmask and report: Quickly debunk circulating falsehoods and report bots or fake accounts to platforms.

Partner up: Collaborate with others in your sector, law enforcement, and government agencies to pool knowledge and defense.

Stay curious: The best defense is a team that asks questions (before hitting ‘share’).
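To make "monitor digital chatter" concrete, here is a minimal sketch of spotting a sudden surge in mentions of a brand or keyword. It assumes you already collect hourly mention counts from some monitoring source. The function name `find_spikes`, the 24-hour baseline, and the three-standard-deviation threshold are illustrative choices, not a recommended standard.

```python
from statistics import mean, stdev


def find_spikes(hourly_mentions, baseline_hours=24, threshold=3.0):
    """Return indices of hours whose count exceeds the mean of the
    preceding `baseline_hours` by more than `threshold` standard deviations."""
    if baseline_hours < 2:
        raise ValueError("baseline_hours must be at least 2")

    spikes = []
    for i in range(baseline_hours, len(hourly_mentions)):
        baseline = hourly_mentions[i - baseline_hours:i]
        mu = mean(baseline)
        # Floor the deviation so a nearly flat baseline does not turn
        # every small bump into an alert (assumed floor of 1 mention).
        sigma = max(stdev(baseline), 1.0)
        if hourly_mentions[i] > mu + threshold * sigma:
            spikes.append(i)
    return spikes


# Example: three quiet days' worth of pattern, then a sudden surge.
counts = [5, 6, 4, 5, 7, 5, 6, 5] * 3 + [6, 40, 5]
print(find_spikes(counts))
```

A spike only says that something changed. Someone still has to read the underlying posts to decide whether it is a false narrative taking off or ordinary news coverage.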

Key Takeaways

Disinformation campaigns are a growing threat to organizations of all sizes, and understanding how to respond is crucial. By recognizing the risks, acting swiftly, and leveraging the right tools and strategies, businesses can minimize damage and protect their reputations. Here are the key takeaways to keep in mind:

Disinformation campaigns are deliberate, organized attempts to spread false or misleading narratives for specific gain.

Social platforms are the main battlegrounds due to their scale, speed, and emotional engagement algorithms.

Cybersecurity teams are on the front lines—not just defending systems, but also protecting an organization’s truth and reputation.

FAQs about disinformation campaigns

What is the difference between disinformation and misinformation?

Disinformation is spread on purpose to deceive, while misinformation is shared by accident without malice.

How can I spot a disinformation campaign?

Watch for emotionally charged messages, urgent calls to action, anonymous sources, new accounts, and viral content with little verification.

How do attackers use technology to amplify disinformation?

They use bots, deepfakes, automated posting, and algorithmic recommendations to reach more people, faster.

Can businesses be targeted by disinformation campaigns?

Absolutely. Any organization with a digital presence can become a target, especially if attackers see value in disruption or reputational harm.

What should an organization do if it is targeted?

Get your facts straight, communicate quickly and clearly, monitor the spread, involve cybersecurity and PR, and consider seeking legal or law enforcement help if necessary.
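The warning signs listed in the FAQ above (emotional language, urgency, anonymous sources, new accounts, unverified viral content) can be turned into a rough triage checklist. The sketch below is a teaching aid built on stated assumptions: the `Message` fields, the word lists, and the thresholds are all hypothetical, and keyword matching of this kind will produce both false positives and false negatives.

```python
import re
from dataclasses import dataclass

# Illustrative vocabularies only; a real list would be far larger.
EMOTIVE_TERMS = {"shocking", "outrage", "terrifying", "disgusting", "betrayal"}
URGENT_PHRASES = ("act now", "share immediately", "before it's too late")


@dataclass
class Message:
    # Hypothetical fields an analyst might have for a post.
    text: str
    account_age_days: int
    cites_named_source: bool
    share_count: int


def red_flags(message, new_account_days=30, viral_shares=1000):
    """Return the list of warning signs this message exhibits."""
    text = message.text.lower()
    words = set(re.findall(r"[a-z']+", text))
    flags = []

    if words & EMOTIVE_TERMS:
        flags.append("emotionally charged language")
    if any(phrase in text for phrase in URGENT_PHRASES):
        flags.append("urgent call to action")
    if not message.cites_named_source:
        flags.append("anonymous or missing source")
    if message.account_age_days < new_account_days:
        flags.append("new account")
    if message.share_count >= viral_shares and not message.cites_named_source:
        flags.append("viral with little verification")
    return flags
```

More flags mean a message deserves a closer look before anyone reshares it. The count is not a verdict on whether the content is true.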
